Before you can query your data with an LLM, you need to ingest it. LlamaIndex provides a rich ecosystem of data connectors that load documents from files, APIs, databases, and SaaS platforms into a unified Document abstraction. This section covers the core loading primitives, the document and node data model, metadata extraction, and the LlamaHub connector ecosystem.

O.1.1 The Ingestion Pipeline at a Glance

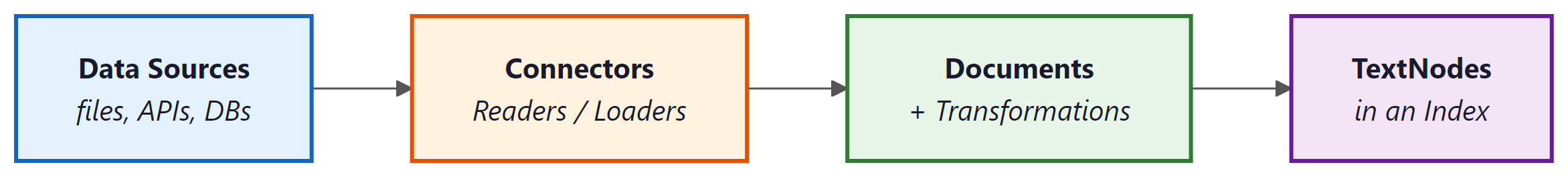

Every RAG (Retrieval-Augmented Generation) application begins with a simple question: how do I get my data into a format the LLM can work with? LlamaIndex answers this with a three-stage ingestion pipeline. First, connectors load raw content into Document objects. Second, transformations (chunking, metadata extraction, embedding) convert documents into TextNode objects. Third, nodes are inserted into an index for retrieval. This section focuses on the first two stages.
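
The three stages can be pictured with a small plain-Python model. This is a conceptual sketch only, not LlamaIndex's implementation: `ToyDocument`, `ToyNode`, `load`, `transform`, and `build_index` are hypothetical names, and the "index" here is a simple keyword lookup rather than a vector index.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class ToyDocument:
    """Stage 1 output: the full text of one source plus metadata."""
    text: str
    metadata: dict = field(default_factory=dict)


@dataclass
class ToyNode:
    """Stage 2 output: one chunk that inherits its document's metadata."""
    text: str
    metadata: dict = field(default_factory=dict)


def load(raw_sources: dict[str, str]) -> list[ToyDocument]:
    """Stage 1: wrap raw content in document objects."""
    return [ToyDocument(text=text, metadata={"file_name": name})
            for name, text in raw_sources.items()]


def transform(documents: list[ToyDocument]) -> list[ToyNode]:
    """Stage 2: split each document into sentence-sized chunks."""
    nodes = []
    for doc in documents:
        for sentence in doc.text.split(". "):
            sentence = sentence.strip().rstrip(".")
            if sentence:
                nodes.append(ToyNode(text=sentence, metadata=dict(doc.metadata)))
    return nodes


def build_index(nodes: list[ToyNode]) -> dict[str, list[ToyNode]]:
    """Stage 3: map each lowercase word to the nodes that contain it."""
    index = defaultdict(list)
    for node in nodes:
        for word in set(node.text.lower().split()):
            index[word].append(node)
    return index


raw = {"handbook.txt": "Employees accrue leave monthly. Leave requests need approval."}
documents = load(raw)
nodes = transform(documents)
index = build_index(nodes)
hits = index["leave"]
print(len(documents), len(nodes), [n.text for n in hits])
```

Each node keeps a copy of its document's metadata, which is what later makes it possible to trace a retrieved chunk back to its source.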

O.1.2 SimpleDirectoryReader

The most common starting point is SimpleDirectoryReader, which loads files from a local directory and, with recursive=True, from its subdirectories as well. It auto-detects file types (PDF, DOCX, TXT, CSV, Markdown, images, and more) and delegates to the appropriate parser. For most prototypes and small-to-medium corpora, this single class is all you need.

from llama_index.core import SimpleDirectoryReader

# Load all supported files from a directory
documents = SimpleDirectoryReader(
    input_dir="./data/company_docs",
    recursive=True,  # traverse subdirectories
    required_exts=[".pdf", ".md", ".txt"],  # optional filter
).load_data()

print(f"Loaded {len(documents)} documents")
print(f"First doc preview: {documents[0].text[:200]}")
print(f"Metadata: {documents[0].metadata}")

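Each loaded file becomes one or more Document objects. The reader automatically populates metadata fields such as file_name, file_path, file_type, and creation_date. You can also supply your own metadata via the file_metadata callback parameter. Note that the callback replaces the default metadata function rather than adding to it, so return any default fields you still need.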

from pathlib import Path

from llama_index.core import SimpleDirectoryReader

def custom_metadata(file_path: str) -> dict:
    """Attach department labels based on folder structure."""
    # A custom callback replaces the default metadata function,
    # so file_name is added here explicitly.
    metadata = {"file_name": Path(file_path).name}
    if "engineering" in file_path:
        metadata.update({"department": "engineering", "access_level": "internal"})
    elif "legal" in file_path:
        metadata.update({"department": "legal", "access_level": "restricted"})
    else:
        metadata.update({"department": "general", "access_level": "public"})
    return metadata

documents = SimpleDirectoryReader(
    input_dir="./data",
    file_metadata=custom_metadata,
).load_data()

# Verify custom metadata was attached
for doc in documents[:3]:
    print(doc.metadata["department"], doc.metadata["file_name"])

SimpleDirectoryReader also accepts an input_files parameter if you want to load a specific list of files rather than an entire directory. This is handy when you need fine-grained control over which documents enter your pipeline.
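
One way to get that fine-grained control is to build the file list yourself and only then hand it to the reader. The sketch below uses only the standard library; `collect_files` and the `drafts` folder name are hypothetical, and the demo runs against a throwaway directory so it has no side effects.

```python
import tempfile
from pathlib import Path


def collect_files(root: str, allowed_exts: set[str], exclude_dirs: set[str]) -> list[str]:
    """Return sorted file paths under root with an allowed extension,
    skipping any file that sits inside an excluded directory name."""
    selected = []
    for path in Path(root).rglob("*"):
        if not path.is_file():
            continue
        if path.suffix.lower() not in allowed_exts:
            continue
        # Compare against directory names relative to root, not the file name.
        if exclude_dirs & set(path.relative_to(root).parts[:-1]):
            continue
        selected.append(str(path))
    return sorted(selected)


# Demo on a throwaway directory tree.
with tempfile.TemporaryDirectory() as tmp:
    tmp_root = Path(tmp)
    (tmp_root / "drafts").mkdir()
    (tmp_root / "handbook.md").write_text("# Handbook")
    (tmp_root / "notes.txt").write_text("notes")
    (tmp_root / "logo.png").write_bytes(b"\x89PNG")
    (tmp_root / "drafts" / "wip.md").write_text("# WIP")

    input_files = collect_files(tmp, {".md", ".txt"}, exclude_dirs={"drafts"})
    selected_names = [Path(p).name for p in input_files]
    print(selected_names)
    # The list could then be handed to the reader, e.g.
    # SimpleDirectoryReader(input_files=input_files).load_data()
```

Keeping the selection logic outside the reader makes it easy to log or review exactly which files will be ingested before any parsing happens.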

O.1.3 LlamaParse: Structured Document Parsing

PDFs with tables, charts, and complex layouts are notoriously difficult to parse. LlamaParse is LlamaIndex's cloud-based document parser that uses vision models to extract structured content from PDFs, PowerPoint files, and scanned documents. It returns clean Markdown with tables preserved, making it ideal for financial reports, academic papers, and technical manuals.

from llama_parse import LlamaParse
from llama_index.core import SimpleDirectoryReader

# Configure LlamaParse as the PDF parser
parser = LlamaParse(
    api_key="llx-...",  # or set LLAMA_CLOUD_API_KEY env var
    result_type="markdown",  # "markdown" or "text"
    num_workers=4,  # parallel parsing
    verbose=True,
)

# Use it as a file extractor within SimpleDirectoryReader
file_extractor = {".pdf": parser}

documents = SimpleDirectoryReader(
    input_dir="./data/financial_reports",
    file_extractor=file_extractor,
).load_data()

# Tables and structure are preserved as Markdown
print(documents[0].text[:500])

LlamaParse is a cloud service that sends your documents to LlamaIndex's API for processing. For sensitive or regulated data, verify that this complies with your organization's data governance policies. At the time of writing, the free tier covers 1,000 pages per day; beyond that, usage is metered.

O.1.4 LlamaHub Connectors

Real-world data lives in many places beyond the local filesystem. The LlamaHub registry provides hundreds of community-built connectors (called "readers") for SaaS platforms, databases, and APIs. Each connector implements the same load_data() interface, so switching data sources mostly means changing the reader class and its source-specific arguments.
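
The value of a shared `load_data()` method is that downstream code never needs to know which reader produced a document. The sketch below models that idea with the standard library only. `ToyDocument`, `Reader`, `InMemoryReader`, `SqlReader`, and `ingest` are hypothetical names for illustration, and an in-memory SQLite table stands in for a real database; actual LlamaHub readers typically take source-specific arguments in `load_data()`.

```python
import sqlite3
from dataclasses import dataclass, field
from typing import Protocol


@dataclass
class ToyDocument:
    text: str
    metadata: dict = field(default_factory=dict)


class Reader(Protocol):
    """Anything with a load_data() method that returns documents."""
    def load_data(self) -> list[ToyDocument]: ...


class InMemoryReader:
    """Hypothetical reader over a dict of {title: body} pairs."""
    def __init__(self, pages: dict[str, str]):
        self.pages = pages

    def load_data(self) -> list[ToyDocument]:
        return [ToyDocument(text=body, metadata={"source": "memory", "title": title})
                for title, body in self.pages.items()]


class SqlReader:
    """Hypothetical reader that turns each row of a query result into a document."""
    def __init__(self, connection: sqlite3.Connection, query: str):
        self.connection = connection
        self.query = query

    def load_data(self) -> list[ToyDocument]:
        cursor = self.connection.execute(self.query)
        columns = [col[0] for col in cursor.description]
        docs = []
        for row in cursor:
            text = ", ".join(f"{c}: {v}" for c, v in zip(columns, row))
            docs.append(ToyDocument(text=text, metadata={"source": "sql"}))
        return docs


def ingest(readers: list[Reader]) -> list[ToyDocument]:
    """Downstream code depends only on load_data(), not on the reader class."""
    documents = []
    for reader in readers:
        documents.extend(reader.load_data())
    return documents


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE articles (title TEXT, content TEXT, published INTEGER)")
conn.executemany("INSERT INTO articles VALUES (?, ?, ?)",
                 [("Intro", "Hello", 1), ("Draft", "Unfinished", 0)])

all_documents = ingest([
    InMemoryReader({"Roadmap": "Q3 goals"}),
    SqlReader(conn, "SELECT title, content FROM articles WHERE published = 1"),
])
print([d.text for d in all_documents])
```

Tagging each document with a `source` value at load time is a cheap habit that pays off later, when results from several connectors are mixed in one corpus.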

The following example demonstrates loading data from a Notion workspace and a PostgreSQL database.

from llama_index.readers.notion import NotionPageReader
from llama_index.readers.database import DatabaseReader

# --- Notion ---
notion_reader = NotionPageReader(integration_token="secret_...")
notion_docs = notion_reader.load_data(
    page_ids=["abc123", "def456"]
)

# --- PostgreSQL ---
db_reader = DatabaseReader(
    uri="postgresql://user:pass@localhost:5432/mydb"
)
db_docs = db_reader.load_data(
    query="SELECT title, content, created_at FROM articles WHERE published = true"
)

# Combine sources into a single corpus
all_documents = notion_docs + db_docs
print(f"Total documents: {len(all_documents)}")

Other popular LlamaHub connectors include readers for Slack, Google Drive, Confluence, GitHub repositories, arXiv papers, Wikipedia, and S3 buckets. You can install them individually (e.g., pip install llama-index-readers-notion) or browse the full registry at llamahub.ai.

O.1.5 The Document and TextNode Data Model

Understanding the internal data model is essential for customizing your pipeline. LlamaIndex represents all ingested content as a hierarchy of schema objects. At the top level, a Document holds the full content of a loaded source. During indexing, documents are split into smaller TextNode objects (chunks). Both classes inherit from BaseNode and share a common interface.

from llama_index.core.schema import Document, NodeRelationship, TextNode

# Create a Document manually
doc = Document(
    text="LlamaIndex is a data framework for LLM applications.",
    metadata={"source": "manual", "category": "overview"},
    doc_id="doc-001",
)

# Create TextNodes (chunks) manually
node1 = TextNode(
    text="LlamaIndex is a data framework",
    metadata={"source": "manual", "chunk_index": 0},
)
node2 = TextNode(
    text="for LLM applications.",
    metadata={"source": "manual", "chunk_index": 1},
)

# Establish parent-child and sibling relationships.
# Keys are NodeRelationship enum members rather than plain strings.
node1.relationships = {
    NodeRelationship.PARENT: doc.as_related_node_info(),
    NodeRelationship.NEXT: node2.as_related_node_info(),
}
node2.relationships = {
    NodeRelationship.PARENT: doc.as_related_node_info(),
    NodeRelationship.PREVIOUS: node1.as_related_node_info(),
}

print(f"Document ID: {doc.doc_id}")
print(f"Node 1 ID: {node1.node_id}")
print(f"Node 1 parent: {node1.relationships[NodeRelationship.PARENT].node_id}")

Every node has a unique node_id (auto-generated UUID) and can carry arbitrary metadata as a dictionary. Metadata is propagated to the vector store during indexing, enabling metadata filtering at query time (covered in Section O.4).
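
To make the idea of metadata filtering concrete before Section O.4, here is a minimal plain-Python sketch. It is not the vector store's filtering API: `ToyNode` and `filter_nodes` are hypothetical, and the filter is a simple exact-match check on metadata keys.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class ToyNode:
    text: str
    metadata: dict = field(default_factory=dict)
    # Mirror the auto-generated UUID behaviour described above.
    node_id: str = field(default_factory=lambda: str(uuid.uuid4()))


def filter_nodes(nodes, **required):
    """Keep nodes whose metadata matches every required key/value pair exactly."""
    return [n for n in nodes
            if all(n.metadata.get(k) == v for k, v in required.items())]


nodes = [
    ToyNode("Deployment runbook", {"department": "engineering", "access_level": "internal"}),
    ToyNode("NDA template", {"department": "legal", "access_level": "restricted"}),
    ToyNode("Holiday calendar", {"department": "general", "access_level": "public"}),
    ToyNode("API style guide", {"department": "engineering", "access_level": "public"}),
]

engineering = filter_nodes(nodes, department="engineering")
public_engineering = filter_nodes(nodes, department="engineering", access_level="public")
print([n.text for n in engineering])
print([n.text for n in public_engineering])
```

A filter like this can only work on keys that were attached during ingestion, which is why metadata decisions belong at load time rather than query time.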

O.1.6 Metadata Extraction and Transformations

Raw documents often lack the metadata that makes retrieval precise. LlamaIndex provides metadata extractors that use an LLM to automatically generate titles, summaries, keywords, and question-answer pairs for each node. These extractors plug into the IngestionPipeline, which chains together transformations in sequence.

from llama_index.core.ingestion import IngestionPipeline
from llama_index.core.node_parser import SentenceSplitter
from llama_index.core.extractors import (
    TitleExtractor,
    SummaryExtractor,
    QuestionsAnsweredExtractor,
)
from llama_index.core import SimpleDirectoryReader

# Load raw documents
documents = SimpleDirectoryReader("./data").load_data()

# Build a transformation pipeline.
# The extractors call an LLM; with default settings this is OpenAI,
# so OPENAI_API_KEY must be set.
pipeline = IngestionPipeline(
    transformations=[
        SentenceSplitter(chunk_size=512, chunk_overlap=64),
        TitleExtractor(nodes=3),  # infer title from first N nodes
        SummaryExtractor(summaries=["self"]),  # summarize each node itself
        QuestionsAnsweredExtractor(questions=3),  # 3 questions per node
    ]
)

# Run the pipeline
nodes = pipeline.run(documents=documents)

# Inspect enriched metadata
sample = nodes[0]
print("Title:", sample.metadata.get("document_title"))
print("Summary:", sample.metadata.get("section_summary"))
print("Questions:", sample.metadata.get("questions_this_excerpt_can_answer"))
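
Mechanically, chaining transformations means that each step receives the previous step's nodes and returns nodes of its own. The sketch below models that with plain functions and no LLM. It is an illustration, not the IngestionPipeline implementation: `split_paragraphs`, `add_keywords`, `run`, and the stopword list are hypothetical, and the keyword step is a simple word count standing in for an LLM-based extractor.

```python
from collections import Counter
from functools import reduce

STOPWORDS = {"the", "a", "an", "and", "of", "to", "is", "are", "in", "for"}


def split_paragraphs(nodes: list[dict]) -> list[dict]:
    """Transformation 1: one node per blank-line-separated paragraph."""
    out = []
    for node in nodes:
        for para in node["text"].split("\n\n"):
            if para.strip():
                out.append({"text": para.strip(), "metadata": dict(node["metadata"])})
    return out


def add_keywords(nodes: list[dict]) -> list[dict]:
    """Transformation 2: attach the two most frequent non-stopwords."""
    for node in nodes:
        words = [w.strip(".,").lower() for w in node["text"].split()]
        counts = Counter(w for w in words if w and w not in STOPWORDS)
        node["metadata"]["keywords"] = [w for w, _ in counts.most_common(2)]
    return nodes


def run(transformations, nodes):
    """Feed the output of each transformation into the next one."""
    return reduce(lambda acc, step: step(acc), transformations, nodes)


source = {
    "text": ("Invoices are due in 30 days. Late invoices incur fees. "
             "Unpaid invoices accrue fees.\n\n"
             "Refunds require a receipt. Large refunds need the receipt and approval. "
             "Refunds are final."),
    "metadata": {"file_name": "billing.md"},
}
enriched = run([split_paragraphs, add_keywords], [source])
for node in enriched:
    print(node["metadata"])
```

Order matters: the keyword step runs on paragraphs only because the splitter ran first, just as the extractors above operate on the chunks produced by the SentenceSplitter.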

The IngestionPipeline caches the results of its transformations, and attaching a document store via the docstore parameter adds document-level deduplication. When you re-run the pipeline on an updated corpus, only new or changed documents are processed, saving both time and LLM API costs.
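
The skip-unchanged behaviour can be modelled with content hashes. The sketch below is a simplified illustration under that assumption, not the library's docstore: `ToyDocstore`, `needs_processing`, and `run_pipeline` are hypothetical names.

```python
import hashlib


class ToyDocstore:
    """Remembers the content hash last seen for each document ID."""
    def __init__(self):
        self._hashes: dict[str, str] = {}

    def needs_processing(self, doc_id: str, text: str) -> bool:
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if self._hashes.get(doc_id) == digest:
            return False          # unchanged: skip
        self._hashes[doc_id] = digest
        return True               # new or changed: process


def run_pipeline(corpus: dict[str, str], docstore: ToyDocstore) -> list[str]:
    """Return the IDs that were actually processed on this run."""
    processed = []
    for doc_id, text in corpus.items():
        if docstore.needs_processing(doc_id, text):
            # Expensive work (chunking, LLM extraction, embedding) would go here.
            processed.append(doc_id)
    return processed


docstore = ToyDocstore()
corpus = {"a.md": "alpha", "b.md": "beta"}
first_run = run_pipeline(corpus, docstore)

corpus["b.md"] = "beta, revised"   # one document changes
corpus["c.md"] = "gamma"           # one document is added
second_run = run_pipeline(corpus, docstore)
print(first_run, second_run)
```

This only works when document IDs are stable across runs; if IDs are regenerated on every load, every document looks new.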

For production systems, always attach at least a file_name and source_url to each document's metadata. This enables your application to show citations and source links in responses, which is critical for user trust and auditability.
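
As a small illustration of why those two fields matter, the sketch below turns the metadata of retrieved chunks into a deduplicated source list. `format_citations` is a hypothetical helper, and the example.com URLs are placeholders.

```python
def format_citations(retrieved: list[dict]) -> str:
    """Build a numbered source list, one entry per distinct source_url."""
    seen: dict[str, str] = {}
    for metadata in retrieved:
        url = metadata.get("source_url")
        if url and url not in seen:
            seen[url] = metadata.get("file_name", "unknown file")
    return "\n".join(f"[{i}] {name} ({url})"
                     for i, (url, name) in enumerate(seen.items(), start=1))


# Metadata as it might come back with three retrieved chunks (placeholder values).
retrieved_metadata = [
    {"file_name": "policy.pdf", "source_url": "https://example.com/policy.pdf"},
    {"file_name": "faq.md", "source_url": "https://example.com/faq.md"},
    {"file_name": "policy.pdf", "source_url": "https://example.com/policy.pdf"},
]
citations = format_citations(retrieved_metadata)
print(citations)
```

Chunks without a source_url are silently skipped here, which is exactly the gap that attaching the field at ingestion time avoids.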

Choosing a Chunking Strategy

The SentenceSplitter used above is the default node parser. It splits text at sentence boundaries and respects a configurable chunk_size (in tokens) and chunk_overlap. LlamaIndex also provides alternative splitters for code (CodeSplitter), Markdown (MarkdownNodeParser), and semantic boundaries (SemanticSplitterNodeParser, which uses embedding similarity to find natural break points). The choice of splitter has a significant impact on retrieval quality; we revisit this topic in Section O.4 when discussing advanced retrieval strategies.
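
To build intuition for how chunk_size and chunk_overlap interact, here is a simplified sentence-packing splitter. It is a sketch under loud assumptions, not the SentenceSplitter algorithm: sizes are counted in whitespace-separated words rather than tokens, sentence detection is a naive regular expression, and `split_sentences` and `chunk_text` are hypothetical names. A single sentence longer than chunk_size is kept whole, so the size limit is a target rather than a guarantee.

```python
import re


def split_sentences(text: str) -> list[str]:
    """Naive sentence split on ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def chunk_text(text: str, chunk_size: int, chunk_overlap: int) -> list[str]:
    """Pack whole sentences into chunks targeting chunk_size words, repeating
    trailing sentences that fit within chunk_overlap words in the next chunk."""
    chunks: list[str] = []
    current: list[str] = []
    current_len = 0
    for sentence in split_sentences(text):
        n_words = len(sentence.split())
        if current and current_len + n_words > chunk_size:
            chunks.append(" ".join(current))
            # Seed the next chunk with trailing sentences that fit the overlap budget.
            carried: list[str] = []
            carried_len = 0
            for prev in reversed(current):
                prev_len = len(prev.split())
                if carried_len + prev_len > chunk_overlap:
                    break
                carried.insert(0, prev)
                carried_len += prev_len
            current, current_len = carried, carried_len
        current.append(sentence)
        current_len += n_words
    if current:
        chunks.append(" ".join(current))
    return chunks


text = ("Refunds are issued within ten days. Contact support to start a claim. "
        "Claims need an order number. Shipping fees are not refundable.")
chunks = chunk_text(text, chunk_size=12, chunk_overlap=6)
for chunk in chunks:
    print(repr(chunk))
```

With these settings each chunk repeats the last sentence of the previous one, so a question that spans two adjacent sentences can still be answered from a single chunk. Larger overlaps improve that continuity at the cost of more nodes to embed and store.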

Build a multi-source ingestion pipeline. Load documents from at least two different sources (e.g., a local PDF directory and a Wikipedia reader). Attach custom metadata that identifies the source type. Run the documents through an IngestionPipeline with a SentenceSplitter and one metadata extractor. Verify that the resulting nodes contain both your custom metadata and the LLM-generated metadata.