The fastest API call is the one you never make. The most private data is the data that never leaves the device.

A thought shared by every engineer who has waited for a cloud inference round trip on a spotty connection.

Not every LLM inference should travel to the cloud. Privacy constraints, unreliable connectivity, latency requirements, and per-query API costs at scale all create demand for on-device inference. This section surveys the leading edge-deployment frameworks (llama.cpp, Ollama, MLX, ExecuTorch), the quantization techniques that make large models fit on consumer hardware, and the battery and thermal constraints that shape mobile inference strategies. The inference optimization techniques from Chapter 9 provide the theoretical foundation, while this section focuses on the practical tools and trade-offs for deploying models at the edge.

Prerequisites

This section builds on inference optimization from Chapter 9, deployment architecture from Section 31.1, and quantization fundamentals from Chapter 16.

1. Why Edge Deployment Matters

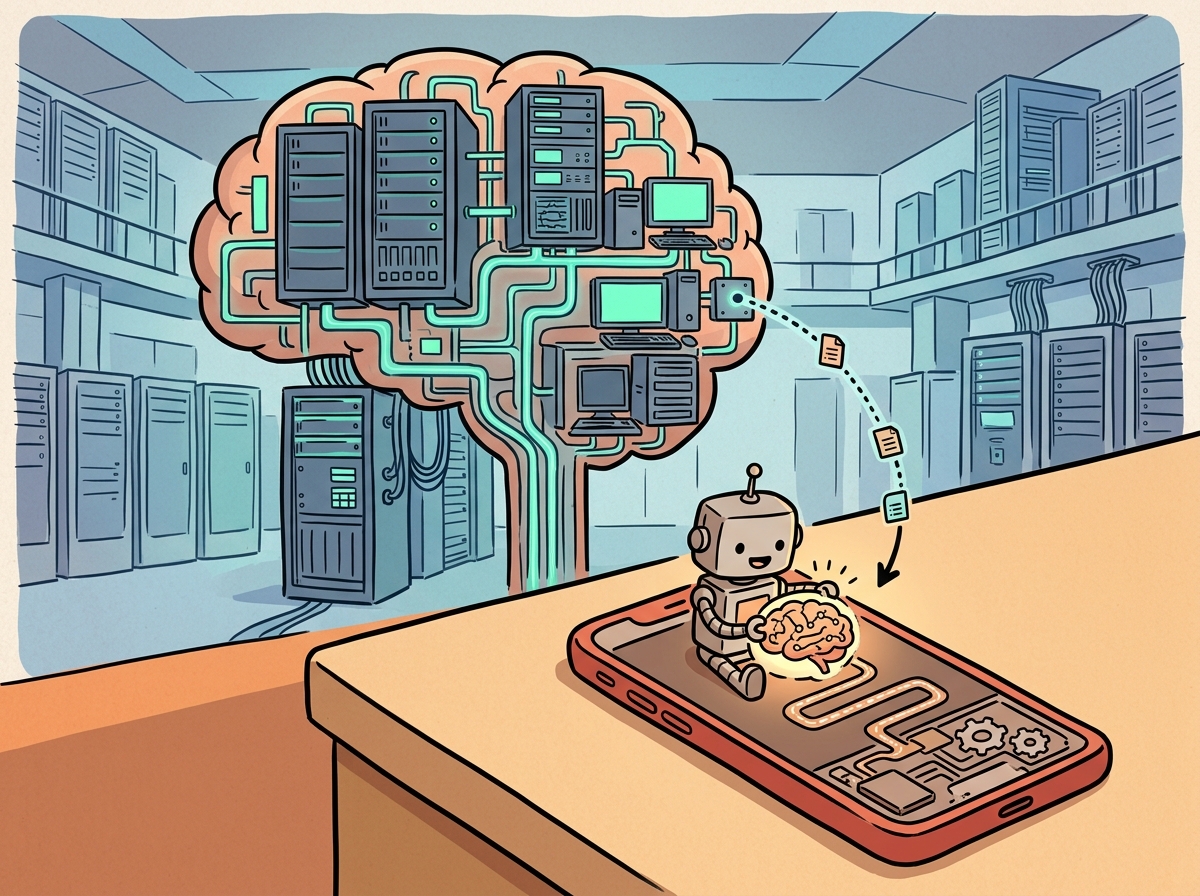

When a physician uses an AI assistant in a hospital without reliable internet, when a mobile app needs sub-100ms autocomplete without per-query API costs, or when a defense contractor cannot send data to a third-party server, on-device inference is not a nice-to-have; it is a requirement. Edge deployment moves the model to the user's hardware, eliminating network latency, removing per-query API costs, enabling offline operation, and keeping sensitive data on the device.

The economics are compelling at scale. An application serving 10 million daily queries at $0.002 per query spends $20,000 per day on API costs. If a quantized 3B-parameter model running on the user's device can handle 80% of those queries with acceptable quality, the API bill drops to $4,000 per day: a saving of $16,000 per day, or roughly $5.8 million per year. The trade-off is between smaller on-device models with lower quality and larger cloud models with higher quality, and the art of edge deployment is finding the right balance for your use case.
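The savings arithmetic is easy to parameterize for your own traffic. The sketch below assumes a flat per-query API price and treats on-device queries as free at the margin (it ignores device energy, model download, and engineering cost); the function name is illustrative.

```python
def daily_api_savings(daily_queries: int, cost_per_query: float, on_device_share: float) -> dict:
    """Estimate API spend avoided by answering a share of queries on-device.

    Assumes a flat price per cloud query and zero marginal cost for on-device queries.
    """
    if not 0.0 <= on_device_share <= 1.0:
        raise ValueError("on_device_share must be between 0 and 1")
    baseline = daily_queries * cost_per_query            # every query goes to the cloud
    cloud = baseline * (1.0 - on_device_share)           # only cloud-routed queries are billed
    saved = baseline - cloud
    return {
        "baseline_per_day": baseline,
        "cloud_per_day": cloud,
        "saved_per_day": saved,
        "saved_per_year": saved * 365,
    }


report = daily_api_savings(10_000_000, 0.002, 0.80)
for key, value in report.items():
    print(f"{key}: ${value:,.0f}")
```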

Edge deployment is not about replacing cloud models. The most effective production architectures use a tiered approach: a small on-device model handles simple queries (autocomplete, classification, formatting) with no network round trip and no per-query API cost, while complex queries (multi-step reasoning, long-context synthesis) are routed to cloud models. The on-device model also serves as a fallback when the network is unavailable, providing degraded but functional service rather than a blank screen.
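A minimal sketch of the tiered pattern with an offline fallback. It assumes hypothetical local and cloud backends passed in as plain functions (in an app they would wrap an on-device runtime and a cloud API client) and a caller-supplied is_simple predicate; only network-style errors trigger the fallback.

```python
from typing import Callable

Backend = Callable[[str], str]  # hypothetical: takes a query, returns a reply


def answer(query: str, is_simple: Callable[[str], bool],
           local: Backend, cloud: Backend) -> tuple[str, str]:
    """Return (route_taken, reply) for one query."""
    if is_simple(query):
        return "local", local(query)
    try:
        return "cloud", cloud(query)
    except (ConnectionError, TimeoutError):
        # Network unavailable: degraded but functional service instead of an error screen.
        return "local-fallback", local(query)


# Stub backends for illustration only.
def small_model(query: str) -> str:
    return f"[on-device] short answer to: {query[:30]}"


def unreachable_cloud(query: str) -> str:
    raise ConnectionError("network unavailable")


def is_short(query: str) -> bool:
    return len(query.split()) < 12


print(answer("Fix the typo: teh cat", is_short, small_model, unreachable_cloud))
print(answer("Compare three caching strategies for a multi-region service and recommend one with reasoning",
             is_short, small_model, unreachable_cloud))
```

Injecting the backends as functions keeps the routing logic testable without a model or a network connection.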

Apple's on-device language model for iOS 18 runs a 3B-parameter model that fits in 1.5 GB of memory after quantization. It handles autocomplete, message summarization, and notification prioritization without sending a single token to the cloud. Your phone is now running a language model that would have been considered state-of-the-art in 2021, and it does so while you are checking your email in airplane mode.

Use Case Matrix

| Use Case | Primary Driver | Typical Model Size | Target Hardware |
|---|---|---|---|
| Medical records assistant | Privacy (HIPAA) | 3B to 8B | Workstation GPU |
| Mobile keyboard autocomplete | Latency, cost | 0.5B to 1B | Phone NPU/GPU |
| Offline field assistant | No connectivity | 1B to 3B | Laptop CPU |
| Smart home device | Latency, privacy | 0.1B to 0.5B | ARM SoC |
| Enterprise document processing | Data sovereignty | 8B to 70B | On-premise GPU cluster |

2. llama.cpp: Universal C/C++ Inference

llama.cpp, created by Georgi Gerganov, is the foundational project for running LLMs on consumer hardware. Written in pure C/C++ with no Python dependencies, it compiles and runs on virtually any platform: Linux, macOS, Windows, Android, iOS, and even Raspberry Pi. The project introduced the GGUF (GPT-Generated Unified Format) file format, which packages quantized weights together with tokenizer and model metadata in a single file and has become the standard for distributing quantized models. llama.cpp supports dozens of model architectures (Llama, Mistral, Phi, Qwen, Gemma, and many others) and provides both a command-line interface and a built-in HTTP server compatible with the OpenAI API format.

```bash
# Build llama.cpp from source
git clone https://github.com/ggerganov/llama.cpp
cd llama.cpp
cmake -B build -DGGML_CUDA=ON   # For NVIDIA GPUs; omit for CPU-only (Metal is enabled by default on macOS)
cmake --build build --config Release -j $(nproc)

# Download a GGUF model (example: Llama 3.2 3B at Q4_K_M quantization)
# Models are available on Hugging Face in GGUF format
wget https://huggingface.co/bartowski/Llama-3.2-3B-Instruct-GGUF/resolve/main/Llama-3.2-3B-Instruct-Q4_K_M.gguf

# Run interactive chat
./build/bin/llama-cli \
  -m Llama-3.2-3B-Instruct-Q4_K_M.gguf \
  --chat-template llama3 \
  -c 4096 \
  -ngl 99   # Offload all layers to GPU

# Start an OpenAI-compatible API server
# (--host 0.0.0.0 listens on all interfaces; use 127.0.0.1 to keep it local-only)
./build/bin/llama-server \
  -m Llama-3.2-3B-Instruct-Q4_K_M.gguf \
  --host 0.0.0.0 --port 8080 \
  -c 4096 -ngl 99
```
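Because llama-server speaks the OpenAI chat-completions format, any HTTP client can talk to it. The sketch below uses only the Python standard library and assumes the server started above is listening on localhost:8080; the helper names and sampling values are illustrative, and the payload omits a model field on the assumption that a single-model llama-server does not need one.

```python
import json
import urllib.request


def build_chat_request(prompt: str, base_url: str = "http://localhost:8080") -> urllib.request.Request:
    """Build a POST request for llama-server's OpenAI-compatible chat endpoint."""
    payload = {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 128,
        "temperature": 0.2,
    }
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str) -> str:
    """Send the request and return the assistant's reply (requires a running server)."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=120) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With the server running, chat("In one sentence, what is GGUF?") returns the model's reply as a string.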

GGUF Quantization Levels

GGUF models come in various quantization levels that trade quality for memory and speed. The naming convention encodes the bit width and quantization method. Understanding these trade-offs is essential for choosing the right model variant for your hardware constraints.

| Quantization | Bits per Weight | Size (7B model) | Quality Impact | Best For |
|---|---|---|---|---|
| Q8_0 | 8.5 | ~7.2 GB | Near-lossless | Maximum quality, ample RAM |
| Q6_K | 6.6 | ~5.5 GB | Very small loss | High quality, moderate RAM |
| Q5_K_M | 5.7 | ~4.8 GB | Small loss | Good balance for most uses |
| Q4_K_M | 4.8 | ~4.1 GB | Moderate loss | Most popular; fits in 8 GB VRAM |
| Q3_K_M | 3.9 | ~3.3 GB | Noticeable loss | Tight memory constraints |
| Q2_K | 3.4 | ~2.8 GB | Significant loss | Extreme constraints only |
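File sizes follow almost directly from bits per weight: size ≈ parameters × bits per weight / 8. The sketch below assumes the 6.74B parameter count of Llama 2 7B and approximate bits-per-weight values for each type (Q8_0, for instance, stores 32 int8 weights plus one fp16 scale per block, which is 8.5 bits per weight). Real files differ slightly because some tensors use other types and the file also carries metadata.

```python
# Approximate bits per weight for common GGUF quantization types (assumed values).
BITS_PER_WEIGHT = {
    "Q8_0": 8.5,
    "Q6_K": 6.6,
    "Q5_K_M": 5.7,
    "Q4_K_M": 4.8,
    "Q3_K_M": 3.9,
    "Q2_K": 3.4,
}


def gguf_size_gb(n_params: float, quant: str) -> float:
    """Estimate model file size in GB (1e9 bytes): parameters x bits per weight / 8."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


LLAMA2_7B_PARAMS = 6.74e9  # assumption: a "7B" model with ~6.74B parameters

for quant in BITS_PER_WEIGHT:
    print(f"{quant:7s} ~{gguf_size_gb(LLAMA2_7B_PARAMS, quant):.1f} GB")
```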

Quality degradation from quantization is not linear. Models typically maintain near-full quality down to Q5_K_M (5 to 6 bits), show modest degradation at Q4_K_M (4 to 5 bits), and then experience a steeper quality drop below 4 bits. The exact cliff depends on model architecture and size: larger models (70B+) tolerate aggressive quantization better than smaller models (3B) because they have more redundancy. Always benchmark your specific use case at multiple quantization levels rather than relying on general guidelines.

3. Ollama: Developer-Friendly Local Model Management

Ollama wraps llama.cpp (and other backends) in a user-friendly interface inspired by Docker. Instead of downloading GGUF files manually and managing command-line flags, Ollama provides a pull/run workflow that handles model downloads, GPU detection, memory management, and API serving automatically. It supports macOS, Linux, and Windows, and by default serves a REST API on port 11434 that includes OpenAI-compatible endpoints under /v1.

```bash
# Install Ollama (Linux; on macOS and Windows, use the installer from ollama.com)
curl -fsSL https://ollama.com/install.sh | sh

# Pull and run a model
ollama pull llama3.2:3b
ollama run llama3.2:3b "Explain edge deployment in one paragraph."

# List the models downloaded to this machine
ollama list

# Run with a specific quantization
ollama pull llama3.2:3b-instruct-q5_K_M
ollama run llama3.2:3b-instruct-q5_K_M
```

The pull/run commands mirror Docker semantics, and the quantization tag (q5_K_M) selects a specific quality/size trade-off at download time.

Custom Modelfiles

Ollama's Modelfile system allows you to create custom model configurations with specific system prompts, parameters, and templates. This is useful for packaging a fine-tuned or customized model as a reusable unit that team members can pull and run identically.

```
# Modelfile: a custom medical assistant configuration
FROM llama3.2:3b-instruct-q5_K_M

PARAMETER temperature 0.3
PARAMETER top_p 0.9
PARAMETER num_ctx 4096
PARAMETER stop "<|eot_id|>"

SYSTEM """You are a medical terminology assistant running on a hospital
workstation. You help clinicians look up drug interactions, medical
terminology, and clinical guidelines. You always include a disclaimer
that your outputs are for reference only and do not constitute medical
advice. All data stays on this device; no information is sent externally."""

TEMPLATE """{{ if .System }}<|start_header_id|>system<|end_header_id|>

{{ .System }}<|eot_id|>{{ end }}{{ if .Prompt }}<|start_header_id|>user<|end_header_id|>

{{ .Prompt }}<|eot_id|>{{ end }}<|start_header_id|>assistant<|end_header_id|>

{{ .Response }}<|eot_id|>"""
```

The PARAMETER directives set a low temperature (0.3) for factual consistency, and the SYSTEM block injects a domain-specific persona that instructs the model to include a disclaimer in every answer.

```bash
# Build and run the custom model
ollama create medical-assistant -f Modelfile
ollama run medical-assistant "What are the contraindications for metformin?"
```

The ollama create command packages the base model, parameters, and system prompt into a single named unit that any team member can launch with ollama run.

Programmatic Access

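The following snippet queries the local model through Ollama's OpenAI-compatible endpoint using the standard openai Python client, first with a single response and then with streaming.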

```python
"""Using Ollama's API from Python (OpenAI-compatible endpoint)."""
from openai import OpenAI

# Ollama exposes an OpenAI-compatible API under /v1 on localhost:11434.
# The client requires an api_key, but Ollama ignores its value.
client = OpenAI(base_url="http://localhost:11434/v1", api_key="ollama")

response = client.chat.completions.create(
    model="llama3.2:3b",
    messages=[
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "What is quantization in LLMs?"},
    ],
    temperature=0.7,
    max_tokens=500,
)
print(response.choices[0].message.content)

# Streaming works identically to the OpenAI API
stream = client.chat.completions.create(
    model="llama3.2:3b",
    messages=[{"role": "user", "content": "Explain edge deployment."}],
    stream=True,
)
for chunk in stream:
    if chunk.choices and chunk.choices[0].delta.content:
        print(chunk.choices[0].delta.content, end="", flush=True)
print()  # final newline after the streamed text
```

4. MLX: Optimized Inference on Apple Silicon

Apple's MLX framework is designed specifically for Apple Silicon (M1 through M4 chips) and exploits the unified memory architecture, which lets the CPU and GPU operate on the same arrays without copying. For Mac users, MLX is often faster than llama.cpp (whose Metal backend also runs on Apple GPUs), thanks to kernels tuned specifically for Apple's GPU architecture. The companion library mlx-lm provides a high-level interface for text generation with Hugging Face model compatibility.

```bash
# Install MLX and mlx-lm
pip install mlx mlx-lm

# Run a model directly from Hugging Face
mlx_lm.generate \
  --model mlx-community/Llama-3.2-3B-Instruct-4bit \
  --prompt "Explain the benefits of on-device inference." \
  --max-tokens 200

# Convert a Hugging Face model to MLX format with quantization
mlx_lm.convert \
  --hf-path meta-llama/Llama-3.2-3B-Instruct \
  --mlx-path ./llama-3.2-3b-mlx-4bit \
  --quantize \
  --q-bits 4 \
  --q-group-size 64
```

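```python
"""MLX text generation (verbose=True prints tokens as they are generated)."""
from mlx_lm import generate, load
from mlx_lm.sample_utils import make_sampler

# Load a quantized model (downloads from HF if not cached)
model, tokenizer = load("mlx-community/Llama-3.2-3B-Instruct-4bit")

# Format the request with the model's chat template
prompt = tokenizer.apply_chat_template(
    [{"role": "user", "content": "What are the advantages of MLX?"}],
    tokenize=False,
    add_generation_prompt=True,
)

# Recent mlx-lm releases take sampling settings through a sampler object
# (older releases accepted temp= directly in generate)
sampler = make_sampler(temp=0.7)

response = generate(
    model,
    tokenizer,
    prompt=prompt,
    max_tokens=300,
    sampler=sampler,
    verbose=True,  # Prints tokens/sec performance
)
print(response)
```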

MLX's unified memory model means that a MacBook Pro with 36GB of RAM can run a 4-bit quantized 30B model entirely in memory without the CPU-to-GPU transfer bottleneck that limits performance on discrete GPU systems. For development and prototyping workflows on Apple hardware, MLX provides the fastest path from model selection to running inference.
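Whether a model fits is the sum of weight memory and KV-cache memory. The sketch below checks the 30B example under stated assumptions: about 4.5 bits per weight once per-group scales are included, a hypothetical architecture with 64 layers and 8 KV heads of dimension 128, an fp16 KV cache, an 8,192-token context, and only 70% of RAM treated as usable so the OS and other apps keep headroom. None of these numbers describe a specific released model.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_len: int, bytes_per_elem: int = 2) -> int:
    """Bytes for keys and values (factor 2) across all layers, KV heads, and positions."""
    return 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_elem


def fits_in_memory(n_params: float, bits_per_weight: float, kv_bytes: int,
                   total_ram_gb: float, usable_fraction: float = 0.7) -> tuple[float, float, bool]:
    """Return (needed_gb, budget_gb, fits) for quantized weights plus KV cache."""
    weight_bytes = n_params * bits_per_weight / 8
    needed_gb = (weight_bytes + kv_bytes) / 1e9
    budget_gb = total_ram_gb * usable_fraction  # headroom for the OS and other apps (assumption)
    return needed_gb, budget_gb, needed_gb <= budget_gb


kv = kv_cache_bytes(n_layers=64, n_kv_heads=8, head_dim=128, context_len=8192)
needed, budget, ok = fits_in_memory(30e9, 4.5, kv, total_ram_gb=36)
print(f"need ~{needed:.1f} GB of ~{budget:.1f} GB usable -> fits: {ok}")
```

The KV-cache term grows linearly with context length, so a model that fits comfortably at 8K tokens can stop fitting at much longer contexts.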

5. ExecuTorch: PyTorch Models on Mobile and Edge

Meta's ExecuTorch is the production runtime for deploying PyTorch models on mobile phones, IoT devices, and other resource-constrained hardware. Unlike llama.cpp (which requires models in GGUF format) or MLX (which targets Apple Silicon), ExecuTorch takes standard PyTorch models and exports them to an optimized format (.pte) that runs on Android, iOS, and embedded Linux with hardware-specific acceleration. ExecuTorch supports Qualcomm Hexagon DSPs, Apple Core ML, MediaTek APUs, and ARM CPU backends.

```python
"""Export a model for ExecuTorch deployment (simplified workflow)."""
import torch
from executorch.exir import to_edge
from transformers import AutoModelForCausalLM, AutoTokenizer

# Load a small model. At fp16, 2.7B parameters is still ~5.4 GB, so a real
# phone deployment would also quantize the weights before export.
model_name = "microsoft/phi-2"  # 2.7B parameters
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype=torch.float16,
    use_cache=False,  # export a plain forward pass without a KV-cache object
)
model.eval()
tokenizer = AutoTokenizer.from_pretrained(model_name)

# Capture the graph with torch.export; a multi-token example keeps the
# sequence dimension from being specialized to a size of 1
example_input = tokenizer("Hello, how are you today?", return_tensors="pt")
exported = torch.export.export(
    model,
    (example_input["input_ids"],),
    dynamic_shapes={
        "input_ids": {1: torch.export.Dim("seq_len", min=1, max=512)}
    },
)

# Convert to the ExecuTorch edge dialect
edge_program = to_edge(exported)

# Optionally delegate supported subgraphs to a backend via its partitioner,
# e.g. XNNPACK for ARM/x86 CPUs:
# from executorch.backends.xnnpack.partition.xnnpack_partitioner import XnnpackPartitioner
# edge_program = edge_program.to_backend(XnnpackPartitioner())
# Core ML and Qualcomm (QNN) backends provide their own partitioners.

# Serialize the final .pte file
et_program = edge_program.to_executorch()
with open("phi2_mobile.pte", "wb") as f:
    f.write(et_program.buffer)
print("Exported model size:", len(et_program.buffer) / 1e6, "MB")
```

ExecuTorch's primary advantage over llama.cpp for mobile deployment is its integration with the PyTorch ecosystem. If your model is already in PyTorch (as most research models are), ExecuTorch provides a direct export path without format conversion. It also supports hardware-specific optimizations through a delegate system, where computation-heavy operations are offloaded to specialized accelerators (NPUs, DSPs) that llama.cpp generally does not target.

The choice between llama.cpp, Ollama, MLX, and ExecuTorch depends on your target platform and deployment constraints. llama.cpp is the universal choice: it runs everywhere and supports the widest range of models. Ollama wraps llama.cpp for developer convenience and is ideal for local development and prototyping. MLX is a strong default on Apple Silicon Macs, where its Metal-optimized kernels often deliver the highest throughput. ExecuTorch is the right choice when you need to deploy on mobile phones or IoT devices with hardware-specific acceleration. Many production systems use multiple runtimes: Ollama for developer machines, ExecuTorch for the mobile app, and a cloud API as the fallback.

6. Battery and Thermal Constraints on Mobile

Running LLM inference on a mobile device introduces constraints that do not exist in server environments. Battery drain is the most visible: sustained LLM inference can consume 3 to 5 watts on a modern smartphone, which on a phone with a 15 Wh battery works out to roughly 0.3 to 0.6% of the charge per minute of continuous generation. Thermal throttling is equally important; most mobile SoCs reduce clock speeds after 30 to 60 seconds of sustained compute to prevent overheating, which degrades generation speed mid-response.
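How quickly a given power draw empties a phone depends on battery capacity, so it helps to convert watts into percent of charge per minute for your target devices. The sketch below assumes a 15 Wh battery (roughly a 4,000 mAh cell at about 3.85 V) and counts only the inference power draw, not the screen or radios.

```python
def drain_pct_per_minute(power_watts: float, battery_wh: float) -> float:
    """Percent of full capacity consumed per minute at a constant power draw."""
    wh_per_minute = power_watts / 60
    return 100 * wh_per_minute / battery_wh


def hours_to_empty(power_watts: float, battery_wh: float) -> float:
    """Hours until a fully charged battery is empty at a constant power draw."""
    return battery_wh / power_watts


BATTERY_WH = 15.0  # assumption: ~4,000 mAh at ~3.85 V

for watts in (3.0, 5.0):
    print(f"{watts:.0f} W: {drain_pct_per_minute(watts, BATTERY_WH):.2f}%/min, "
          f"{hours_to_empty(watts, BATTERY_WH):.1f} h of continuous generation")
```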

Practical mitigations include: (1) using speculative decoding with a tiny draft model to reduce the number of full-model forward passes, (2) capping generation length to prevent extended inference sessions, (3) batching requests when possible to amortize model loading overhead, (4) monitoring device temperature and gracefully degrading to shorter responses or cloud fallback when thermal limits approach, and (5) using the smallest model variant that meets quality requirements. The quality difference between a Q4_K_M and Q5_K_M quantization on a 3B model is often imperceptible to users, but the memory and power savings can extend battery life by 15 to 20%.
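Mitigations (2), (4), and (5) can be folded into one policy evaluated before each request. The sketch below is illustrative: the Thermal levels are a hypothetical mapping of the coarse thermal states mobile operating systems report, and the 20% battery threshold and token caps are placeholders to tune on real devices.

```python
from dataclasses import dataclass
from enum import IntEnum


class Thermal(IntEnum):
    """Hypothetical coarse thermal levels, ordered from coolest to hottest."""
    NOMINAL = 0
    FAIR = 1
    SERIOUS = 2
    CRITICAL = 3


@dataclass(frozen=True)
class GenerationPlan:
    target: str      # "local" or "cloud"
    max_tokens: int  # cap on generated tokens for this request


def plan_generation(thermal: Thermal, battery_pct: float, network_ok: bool,
                    base_max_tokens: int = 256, low_battery_pct: float = 20.0) -> GenerationPlan:
    """Decide where to run a request and how long to let it generate."""
    constrained = thermal >= Thermal.SERIOUS or battery_pct < low_battery_pct
    if not constrained:
        # Healthy device: run locally, trimming responses once it starts warming up.
        cap = base_max_tokens if thermal == Thermal.NOMINAL else base_max_tokens // 2
        return GenerationPlan("local", cap)
    if network_ok:
        # Hot or low on battery: hand the work to the cloud instead of the device.
        return GenerationPlan("cloud", base_max_tokens)
    # Constrained and offline: keep answering locally, but keep answers short.
    return GenerationPlan("local", base_max_tokens // 4)


print(plan_generation(Thermal.SERIOUS, battery_pct=65, network_ok=False))
```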

Lab: Quantization Quality vs. Latency Benchmark

Step 1: Set Up the Environment

Verify that Ollama is installed and running, then pull two quantization levels of the same model.

```bash
# Ensure Ollama is installed and running
ollama --version

# Pull two quantization levels of the same model
ollama pull llama3.2:3b-instruct-q4_K_M
ollama pull llama3.2:3b-instruct-q8_0
```

Step 2: Run the Benchmark

The script below sends the same ten prompts to each variant at temperature 0, records latency and throughput for every response, and saves one JSON report per model.

```python
"""Benchmark two quantization levels on the same prompts."""
import json
import time

from openai import OpenAI

client = OpenAI(base_url="http://localhost:11434/v1", api_key="ollama")

PROMPTS = [
    "Explain the concept of quantization in neural networks in 3 sentences.",
    "Write a Python function that computes the Fibonacci sequence iteratively.",
    "Summarize the key differences between TCP and UDP protocols.",
    "What are the main causes of the French Revolution? List 5 factors.",
    "Translate this to formal English: 'gonna grab some food brb'",
    "Write a SQL query to find the top 10 customers by total order value.",
    "Explain photosynthesis to a 10-year-old in simple terms.",
    "What are three common logical fallacies? Give an example of each.",
    "Write a bash one-liner to count the number of .py files recursively.",
    "Compare and contrast microservices and monolithic architectures.",
]

MODELS = ["llama3.2:3b-instruct-q4_K_M", "llama3.2:3b-instruct-q8_0"]


def benchmark_model(model_name: str, prompts: list[str]) -> dict:
    # Warm-up request so the first measured prompt does not include model load time
    client.chat.completions.create(
        model=model_name,
        messages=[{"role": "user", "content": "Hi"}],
        max_tokens=1,
    )
    results = []
    for prompt in prompts:
        start = time.perf_counter()
        response = client.chat.completions.create(
            model=model_name,
            messages=[{"role": "user", "content": prompt}],
            max_tokens=300,
            temperature=0.0,  # Deterministic for comparison
        )
        # Wall-clock time covers prompt processing as well as generation
        elapsed = time.perf_counter() - start
        output = response.choices[0].message.content
        token_count = response.usage.completion_tokens
        results.append({
            "prompt": prompt[:60],
            "output": output,
            "tokens": token_count,
            "time_s": round(elapsed, 2),
            "tok_per_s": round(token_count / elapsed, 1),
        })
    avg_tps = sum(r["tok_per_s"] for r in results) / len(results)
    return {
        "model": model_name,
        "avg_tokens_per_sec": avg_tps,
        "results": results,
    }


# Run benchmarks and save one JSON report per model
for model in MODELS:
    print(f"\nBenchmarking {model}...")
    report = benchmark_model(model, PROMPTS)
    print(f"  Average: {report['avg_tokens_per_sec']:.1f} tokens/sec")
    with open(f"benchmark_{model.replace(':', '_')}.json", "w") as f:
        json.dump(report, f, indent=2)
```
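Wall-clock timing through the OpenAI-compatible endpoint folds prompt processing into a single number. Ollama's native /api/generate endpoint also returns per-phase counts and durations in nanoseconds (load_duration, prompt_eval_count, prompt_eval_duration, eval_count, eval_duration), which lets you report prefill and decode speed separately. A sketch using only the standard library; the helper names are illustrative.

```python
import json
import urllib.request

OLLAMA_GENERATE_URL = "http://localhost:11434/api/generate"


def generate_with_metrics(model: str, prompt: str, max_tokens: int = 300) -> dict:
    """Call Ollama's native generate endpoint once (non-streaming) and return the JSON body."""
    payload = {
        "model": model,
        "prompt": prompt,
        "stream": False,
        "options": {"temperature": 0, "num_predict": max_tokens},
    }
    request = urllib.request.Request(
        OLLAMA_GENERATE_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=300) as response:
        return json.load(response)


def phase_metrics(body: dict) -> dict:
    """Convert Ollama's nanosecond durations into seconds and tokens/sec per phase."""
    ns_per_s = 1e9
    metrics = {
        "load_s": body.get("load_duration", 0) / ns_per_s,
        "decode_tok_per_s": body["eval_count"] / (body["eval_duration"] / ns_per_s),
    }
    # prompt_eval_* may be absent in some responses (for example, a cached prompt)
    if body.get("prompt_eval_duration"):
        metrics["prefill_tok_per_s"] = body.get("prompt_eval_count", 0) / (
            body["prompt_eval_duration"] / ns_per_s
        )
    return metrics
```

For example, phase_metrics(generate_with_metrics("llama3.2:3b-instruct-q4_K_M", PROMPTS[0])) reports load time plus prefill and decode rates for one prompt.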

Step 3: Evaluate Quality

Compare the outputs from both quantization levels side by side. For each of the 10 prompts, rate the Q4_K_M output on a 1 to 5 scale relative to the Q8_0 output: 5 means identical quality, 4 means minor differences that do not affect usefulness, 3 means noticeable degradation, 2 means significant quality loss, and 1 means the output is unusable. Compute the average quality score and report it alongside the latency numbers. Typical results for a 3B model show average quality scores of 4.2 to 4.7, which suggests that Q4_K_M is viable for most applications.
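A small helper makes this step less error-prone: print the paired outputs from the two JSON reports, then combine your manual 1-to-5 scores with the measured throughput. The function names are illustrative; the file names follow the benchmark script's naming scheme.

```python
import json
from statistics import mean


def load_report(model: str) -> dict:
    """Read a report written by the benchmark script (same file-naming scheme)."""
    with open(f"benchmark_{model.replace(':', '_')}.json") as f:
        return json.load(f)


def side_by_side(candidate: dict, reference: dict) -> None:
    """Print paired outputs to make manual scoring easier."""
    for i, (c, r) in enumerate(zip(candidate["results"], reference["results"]), start=1):
        print(f"--- Prompt {i}: {c['prompt']}")
        print("[reference]", r["output"])
        print("[candidate]", c["output"])


def summarize(candidate: dict, reference: dict, scores: list[int]) -> dict:
    """Combine manual 1-5 scores (candidate vs. reference) with measured throughput."""
    if len(scores) != len(candidate["results"]):
        raise ValueError("need exactly one score per prompt")
    if any(s not in range(1, 6) for s in scores):
        raise ValueError("scores must be integers from 1 to 5")
    return {
        "mean_quality": mean(scores),
        "speedup": candidate["avg_tokens_per_sec"] / reference["avg_tokens_per_sec"],
    }
```

After the benchmark finishes, load both reports with load_report, pass them to side_by_side (Q4_K_M as the candidate, Q8_0 as the reference), score each pair, and hand the ten scores to summarize.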

Extend the lab benchmark to include Q5_K_M as a third quantization level. Plot tokens/sec vs. quality score for all three levels. Is Q5_K_M the best compromise, or does Q4_K_M offer sufficient quality at meaningfully better speed?

Answer Sketch

Q5_K_M typically sits midway between Q4_K_M and Q8_0 on both metrics. The quality difference between Q4_K_M and Q5_K_M is usually small (0.1 to 0.3 points on the 5-point scale), while the speed difference is also modest (5 to 15% faster for Q4_K_M). For applications where every millisecond matters (autocomplete, real-time suggestions), Q4_K_M is preferred. For applications where quality is paramount but memory is limited (medical reference, legal document review), Q5_K_M provides a better balance.

Design a tiered inference system for a mobile application that uses a Q4_K_M model on-device for simple queries and routes complex queries to a cloud API. Define the routing criteria, implement a complexity classifier, and measure the cost savings compared to sending all queries to the cloud.

Answer Sketch

Route queries to the on-device model when: (1) the query is under 50 tokens, (2) the task is classification, extraction, or short-form generation, and (3) the device battery is above 20%. Route to the cloud when: the query requires multi-step reasoning, long-form generation (over 500 tokens), or references context beyond the on-device model's knowledge. A simple keyword/length classifier can achieve 85%+ routing accuracy. At 70% on-device routing, total API costs drop by approximately 70%, with user-perceived quality dropping less than 5% (measured by blind comparison).
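A keyword-and-length router along the lines of this answer sketch might look like the following. The keyword pattern, the four-characters-per-token estimate, and the thresholds are assumptions to tune against logged traffic, and the "knowledge beyond the on-device model" criterion is left out because it needs a richer signal than keywords. With a flat per-query price, the fraction of API cost saved equals the share of queries kept on-device.

```python
import re

# Hypothetical signals that a query needs the larger cloud model.
CLOUD_PATTERN = re.compile(
    r"\b(step by step|prove|analy[sz]e|compare|essay|detailed report|plan)\b"
)


def approx_tokens(text: str) -> int:
    """Rough token estimate: about 4 characters per token for English (assumption)."""
    return max(1, len(text) // 4)


def route(query: str, battery_pct: float, expected_output_tokens: int = 100) -> str:
    """Return "local" or "cloud" using length, task, and battery rules."""
    needs_cloud = (
        CLOUD_PATTERN.search(query.lower()) is not None  # multi-step reasoning cues
        or expected_output_tokens > 500                  # long-form generation
    )
    if not needs_cloud and approx_tokens(query) < 50 and battery_pct > 20:
        return "local"
    return "cloud"


def cost_savings(queries: list[str], battery_pct: float,
                 price_per_query: float) -> tuple[float, float]:
    """Return (share routed on-device, fraction of API cost saved vs. all-cloud)."""
    routes = [route(q, battery_pct) for q in queries]
    local_share = routes.count("local") / len(routes)
    baseline = len(queries) * price_per_query
    cloud_cost = routes.count("cloud") * price_per_query
    return local_share, 1 - cloud_cost / baseline
```

Word boundaries in the pattern matter: a bare substring test for "plan" would also match "explain", and "prove" would match "improve".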

If you have access to an Apple Silicon Mac, benchmark the same model using both MLX and llama.cpp. Compare tokens/sec, time to first token, memory usage, and power consumption (using the powermetrics tool). Which runtime is faster for your hardware?

Answer Sketch

On M1/M2 Macs, MLX typically outperforms llama.cpp by 10 to 30% in tokens/sec for 4-bit quantized models, with the gap widening for larger models that benefit more from Metal's unified memory access patterns. llama.cpp may show better time-to-first-token for small models due to lower initialization overhead. Power consumption is similar because both frameworks saturate the same GPU cores; the difference in tokens/sec means MLX completes the same work using less total energy per response.

- What is the key architectural advantage of llama.cpp that makes it run on such a wide range of hardware (CPUs, GPUs, mobile devices)?

- Ollama exposes an OpenAI-compatible API. Why is API compatibility important for edge deployment, and how does it simplify the transition from cloud to local inference?

- MLX exploits Apple Silicon's unified memory architecture. Explain how this benefits MLX on Mac hardware for large models, and why llama.cpp's Metal backend can share some of the same benefit.

- In the quantization benchmark lab, you compared Q4_K_M and Q8_0 variants. What quality/latency trade-offs would you expect, and how would you decide which to deploy in production?

- Edge deployment brings LLM inference to devices where cloud connectivity is unreliable, latency-sensitive, or privacy-constrained.

- llama.cpp provides universal C/C++ inference with GGUF quantization, running models from laptops to Raspberry Pi devices.

- Ollama wraps llama.cpp in a developer-friendly interface with model management, an OpenAI-compatible API, and one-command setup.

- MLX delivers optimized inference on Apple Silicon, leveraging the unified memory architecture for efficient model loading.

- ExecuTorch extends PyTorch to mobile and edge devices with ahead-of-time compilation and hardware-specific delegates.

- Battery and thermal constraints on mobile devices require adaptive inference strategies that reduce model activity when resources are scarce.

What Comes Next

With edge deployment patterns established, the next part of the book addresses Safety & Strategy, beginning with Chapter 32: Safety, Ethics, and Regulation. Privacy and data sovereignty requirements for on-device models connect directly to the regulatory frameworks covered there.

Gerganov, G. (2023). "llama.cpp: LLM Inference in C/C++." github.com/ggerganov/llama.cpp

Ollama. (2024). "Ollama: Get Up and Running with Large Language Models." ollama.com

Apple Machine Learning Research. (2024). "MLX: An Array Framework for Apple Silicon." github.com/ml-explore/mlx

Meta. (2024). "ExecuTorch: End-to-End Solution for Enabling On-Device AI." pytorch.org/executorch

Dettmers, T., Pagnoni, A., Holtzman, A., Zettlemoyer, L. (2023). "QLoRA: Efficient Finetuning of Quantized Language Models." arXiv:2305.14314

Frantar, E., Ashkboos, S., Hoefler, T., Alistarh, D. (2023). "GPTQ: Accurate Post-Training Quantization for Generative Pre-Trained Transformers." arXiv:2210.17323

Lin, J., Tang, J., Tang, H., et al. (2024). "AWQ: Activation-aware Weight Quantization for LLM Compression and Acceleration." arXiv:2306.00978