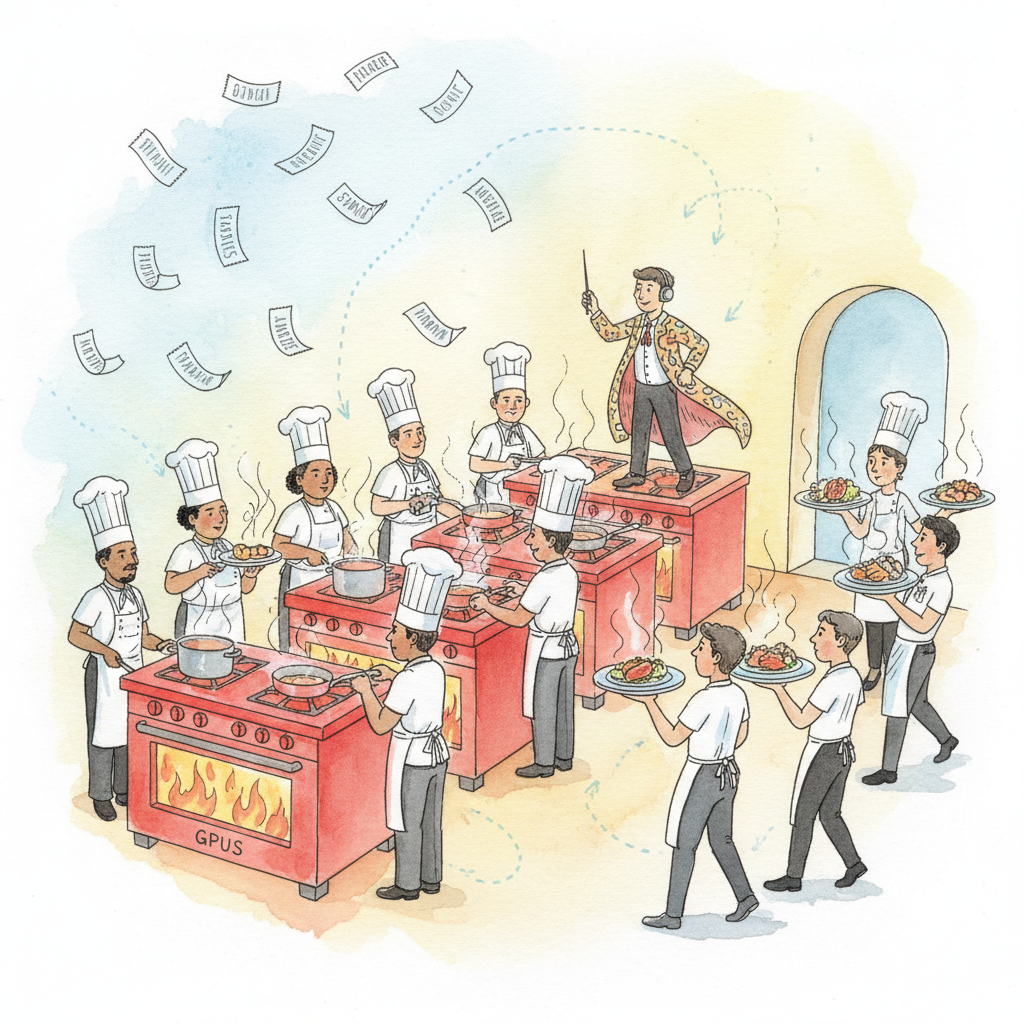

Serving LLMs in production requires specialized infrastructure that goes far beyond a simple Flask endpoint. The unique characteristics of autoregressive generation (sequential token output, KV-cache memory management, variable-length requests) demand purpose-built serving engines that maximize GPU utilization through techniques like continuous batching, paged attention, and speculative decoding.
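Continuous batching helps with variable-length requests, and a toy model makes the effect visible. The sketch below counts decode iterations under two schedulers. It rests on simplifying assumptions: each request needs a known number of output tokens, and every iteration emits one token per active request. It ignores prefill cost, memory limits, and preemption. The function names are hypothetical and do not mirror any engine's actual scheduler.

```python
from collections import deque


def static_batching_steps(lengths: list[int], batch_size: int) -> int:
    """Decode iterations when each batch runs until its longest request finishes."""
    steps = 0
    for start in range(0, len(lengths), batch_size):
        batch = lengths[start:start + batch_size]
        # Every slot stays occupied until the slowest request in the batch ends.
        steps += max(batch)
    return steps


def continuous_batching_steps(lengths: list[int], batch_size: int) -> int:
    """Decode iterations when finished requests are replaced after every iteration."""
    waiting = deque(lengths)
    running: list[int] = []  # remaining output tokens for each active request
    steps = 0
    while waiting or running:
        # Admit queued requests into any free slots before this iteration.
        while waiting and len(running) < batch_size:
            running.append(waiting.popleft())
        # One decode iteration produces one token for every active request.
        running = [remaining - 1 for remaining in running]
        steps += 1
        # Requests that emitted their last token leave immediately, freeing a slot.
        running = [remaining for remaining in running if remaining > 0]
    return steps


lengths = [100, 5, 5, 5, 100, 5, 5, 5]  # illustrative output lengths per request
print(static_batching_steps(lengths, batch_size=4))      # 200
print(continuous_batching_steps(lengths, batch_size=4))  # 105
```

Under static batching each batch waits for its 100-token request, so the short requests leave their slots idle. The continuous scheduler refills a slot as soon as a short request finishes, which is where the utilization gain comes from.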

This appendix covers the leading open-source inference servers. vLLM introduced PagedAttention, which manages KV-cache memory in fixed-size blocks to reduce fragmentation, and provides an OpenAI-compatible API server with continuous batching. Text Generation Inference (TGI), from Hugging Face, offers a production-ready Rust/Python server with built-in quantization, watermarking, and token streaming. SGLang introduces RadixAttention, which reuses cached KV entries across requests that share a prompt prefix, and a frontend language for structured generation (JSON schema, regex constraints). The appendix also covers quantization formats (GPTQ, AWQ, GGUF) and scaling strategies for multi-GPU and multi-node deployments.
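The core idea behind PagedAttention is that each sequence's KV cache does not need to be contiguous. The cache is split into fixed-size blocks tracked by a per-sequence block table, so memory is claimed only as tokens are generated. The class below is a toy allocator that illustrates only that bookkeeping. `PagedKVAllocator` and its methods are hypothetical names, and the 16-token block size is an illustrative choice. The sketch omits the attention kernels, copy-on-write prefix sharing, and preemption logic of a real engine.

```python
class PagedKVAllocator:
    """Toy block allocator in the spirit of PagedAttention (not vLLM's code)."""

    def __init__(self, num_blocks: int, block_size: int = 16) -> None:
        self.block_size = block_size
        self.free_blocks = list(range(num_blocks))    # pool of physical block ids
        self.block_tables: dict[str, list[int]] = {}  # seq id -> physical blocks
        self.num_tokens: dict[str, int] = {}          # seq id -> tokens stored

    def append_token(self, seq_id: str) -> None:
        """Reserve KV space for one more token, taking a new block only when needed."""
        table = self.block_tables.setdefault(seq_id, [])
        count = self.num_tokens.get(seq_id, 0)
        if count % self.block_size == 0:  # no block yet, or the last block is full
            if not self.free_blocks:
                raise MemoryError("no free KV blocks; a real server would preempt or queue")
            table.append(self.free_blocks.pop())
        self.num_tokens[seq_id] = count + 1

    def physical_slot(self, seq_id: str, position: int) -> tuple[int, int]:
        """Translate a logical token position into (physical block id, offset)."""
        block_index, offset = divmod(position, self.block_size)
        return self.block_tables[seq_id][block_index], offset

    def free(self, seq_id: str) -> None:
        """Return all blocks of a finished sequence to the free pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.num_tokens.pop(seq_id, None)


allocator = PagedKVAllocator(num_blocks=8)
for _ in range(20):
    allocator.append_token("a")
print(allocator.block_tables["a"])       # [7, 6]
print(allocator.physical_slot("a", 17))  # (6, 1)
```

After 20 tokens, sequence `"a"` holds two non-adjacent blocks with 12 unused slots. Per-sequence waste is therefore bounded by less than one block, instead of a full pre-reserved maximum length. Calling `free("a")` returns both blocks to the pool for other requests.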

This material is essential for infrastructure engineers, MLOps practitioners, and anyone deploying open-weight models at scale. If you are using hosted APIs (OpenAI, Anthropic, Google) exclusively, you can defer this appendix, but understanding serving internals helps you evaluate cost, latency, and throughput tradeoffs.

The optimization techniques underlying these servers are explained in Chapter 9 (Inference Optimization), which covers the KV cache, quantization theory, and batching strategies at a conceptual level. For production deployment architecture, including load balancing, canary rollouts, and monitoring, see Chapter 31 (Production Engineering). Containerizing these servers is covered in Appendix U (Docker).

Read Chapter 9 (Inference Optimization) to understand the KV cache, attention computation costs, and quantization fundamentals that these serving engines optimize. Hands-on deployment assumes familiarity with Linux command-line tools, GPU drivers and the CUDA toolkit, and basic networking (ports, HTTP APIs).
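A back-of-the-envelope calculation shows why KV-cache memory and quantization dominate serving decisions. The sketch below assumes a 7B-parameter model with a Llama-2-7B-style shape (32 layers, 32 KV heads, head dimension 128) and an FP16 KV cache. Models that use grouped-query attention have fewer KV heads and a proportionally smaller cache. The weight estimate ignores the scales and zero-points that formats such as GPTQ and AWQ store alongside 4-bit weights. The helper names are hypothetical.

```python
GIB = 1024 ** 3
MIB = 1024 ** 2


def kv_cache_bytes_per_token(num_layers: int, num_kv_heads: int, head_dim: int,
                             bytes_per_elem: int = 2) -> int:
    """KV-cache bytes for one token: one K and one V vector per layer per KV head."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem


def weight_bytes(num_params: float, bits_per_param: float) -> float:
    """Approximate weight memory; ignores quantization metadata and runtime buffers."""
    return num_params * bits_per_param / 8


# Assumed shape: 32 layers, 32 KV heads, head_dim 128, FP16 (2-byte) KV entries.
per_token = kv_cache_bytes_per_token(num_layers=32, num_kv_heads=32, head_dim=128)
print(f"KV cache per token: {per_token / MIB:.2f} MiB")             # 0.50 MiB
print(f"KV cache, 4096 tokens: {per_token * 4096 / GIB:.2f} GiB")   # 2.00 GiB
print(f"FP16 weights: {weight_bytes(7e9, 16) / GIB:.2f} GiB")       # 13.04 GiB
print(f"4-bit weights: {weight_bytes(7e9, 4) / GIB:.2f} GiB")       # 3.26 GiB
```

At 0.5 MiB per token, one 4096-token sequence needs 2 GiB of KV cache. Eight such concurrent sequences (16 GiB) already exceed the roughly 13 GiB of FP16 weights, which is why memory-aware scheduling and weight quantization matter so much for throughput.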

Use this appendix when you need to self-host an open-weight LLM for production inference. Choose vLLM for the broadest model compatibility and the simplest OpenAI-compatible drop-in API. Choose TGI when you want tight Hugging Face Hub integration or built-in safety features (watermarking, input sanitization), or when you deploy on Hugging Face Inference Endpoints. Choose SGLang when you need structured output (constrained decoding to JSON or regex) or want strong prefix caching, which pays off when many requests share a long common prompt prefix. If you are deploying via containers, pair this appendix with Appendix U.
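vLLM exposes an OpenAI-compatible HTTP API, so any client that speaks the Chat Completions format can talk to it. The sketch below builds and sends such a request using only the Python standard library. `build_chat_request` and `chat` are hypothetical helpers, and the base URL and model name are assumptions that you must match to your own deployment. Recent versions of TGI and SGLang also offer OpenAI-compatible chat endpoints. Check each server's documentation for the exact path and supported fields.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str,
                       max_tokens: int = 128) -> urllib.request.Request:
    """Build a POST for an OpenAI-style /v1/chat/completions endpoint."""
    payload = {
        "model": model,  # must match the model name the server was launched with
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(base_url: str, model: str, prompt: str) -> str:
    """Send the request and return the first choice's text (requires a running server)."""
    request = build_chat_request(base_url, model, prompt)
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Suppose a vLLM server was started with `vllm serve` on its default port 8000. Calling `chat("http://localhost:8000", model_name, "Hello")` should then return the generated reply, where `model_name` is the name the server was launched with. Pointing `base_url` at a different server is the only change needed to swap backends, which is what makes the OpenAI-compatible interface a drop-in.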