"A prototype that works on your laptop is a hypothesis. An MVP that works for ten real users is evidence."

Compass, Evidence Hungry AI Agent

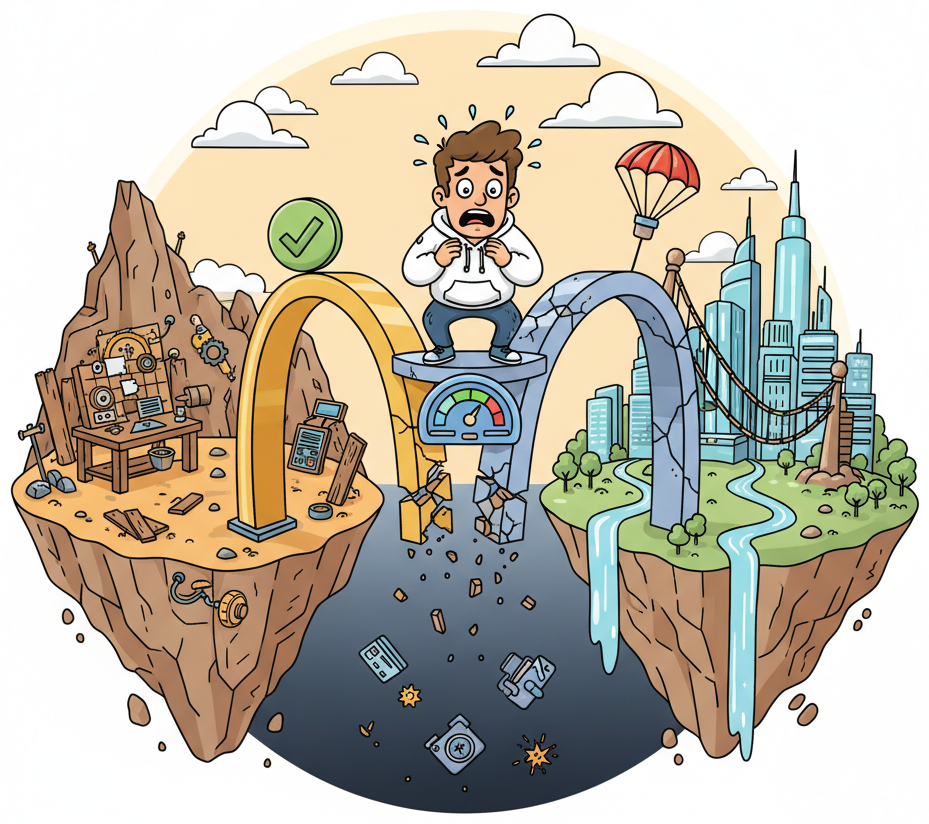

A working prototype is not a product. Your prototype passes your eval suite on your laptop, with your test data, under your supervision. An MVP (Minimum Viable Product) faces real users who send unexpected inputs at unpredictable volumes, expect sub-second responses, and will not forgive silent failures. The gap between these two states is where most AI projects stall or fail. This section provides a concrete checklist for crossing that gap: quality gates tailored to your product's role type, an evaluation contract that defines "good enough" before users ever see the system, monitoring and fallback strategies that keep you safe when things go wrong, and feedback loops that turn user corrections into evaluation gold. By the end, you will know exactly when your prototype is ready to graduate and what infrastructure must be in place before you flip the switch.

Prerequisites

This section builds on the Founder's Prototype Loop from Section 37.2, the evaluation framework from Chapter 29, and the observability tooling from Chapter 30. Familiarity with the Role Canvas from Chapter 36 is recommended.

1. When Is a Prototype Ready to Graduate?

The temptation is strong: your prototype handles the demo cases, your stakeholder nodded approvingly, and you want to ship. But a prototype that works in controlled conditions is a hypothesis about user value, not proof of it. Before graduating to MVP, your prototype must pass three categories of readiness checks.

The Prototype That Shipped Too Early

Who: A product lead at a mid-stage B2B SaaS company building an AI contract reviewer.

Situation: The prototype correctly extracted key clauses from 90% of test contracts and the CEO wanted to ship immediately to close a partnership deal.

Problem: The 10% failure rate was not random. The model failed systematically on contracts with nested sub-clauses, non-English terms, and unusual formatting, exactly the contracts that legal teams most needed help with.

Dilemma: Ship now to capture the partnership (risking trust erosion if failures hit the partner's most complex contracts), or delay two months to build guardrails and fallback workflows.

Decision: They chose a middle path: ship with a human-in-the-loop fallback that flagged low-confidence extractions for manual review, plus a prominent "beta" label.

How: Using a confidence threshold calibrated on their eval set, they routed 25% of contracts to human review. The confidence model added one week of engineering work.

Result: The partner signed. Over three months, the human review queue shrank from 25% to 8% as the team used the flagged cases to improve prompts and add structured extraction rules.

Lesson: The fastest path from prototype to MVP is not removing all failure modes; it is building a graceful fallback for the failure modes you cannot yet fix.

Functional readiness. The system produces correct, useful outputs for the core use case. Not every edge case, not every language, not every user persona. Just the primary flow, validated against a golden evaluation set (see Section 2 below).

Operational readiness. You can observe the system in production. You know when it fails, how long requests take, how much each call costs, and where tokens are being spent. Without observability, shipping an MVP is flying blind.

Recovery readiness. When the model is slow, wrong, or unavailable, the system degrades gracefully rather than crashing or producing dangerous outputs. You have fallback paths and the user sees a sensible message rather than a stack trace or a hallucinated answer.

The prototype proves the concept; the MVP proves the operations. Most AI prototype failures are not about model quality. They are about everything around the model: latency spikes, cost overruns, missing error handling, silent hallucinations that nobody catches, and the absence of any mechanism for users to say "this is wrong." The transition from prototype to MVP is primarily an engineering and observability challenge, not a prompt engineering one.

2. Quality Gates by Role Type

Not every AI product needs the same quality gates. The Role Canvas from Chapter 36 defined several role types for AI systems: Drafter, Classifier, Router, Analyst, and Agent. Each role type has a different failure profile, which means each needs different MVP gates.

| Role Type | Primary Risk | Must-Pass Gate | Acceptable Threshold |
|---|---|---|---|
| Drafter | Hallucinated facts, wrong tone, policy violations | Human review rate on golden set; factual accuracy score | ≥90% factual accuracy; 0% policy violations |
| Classifier | Misclassification leading to wrong routing or wrong action | Precision and recall on each class in golden set | ≥95% on high-stakes classes; ≥85% overall |
| Router | Sending requests to the wrong handler or model | Routing accuracy; fallback trigger rate | ≥92% correct routing; <5% fallback rate |
| Analyst | Incorrect calculations, misleading summaries | Numerical accuracy on test queries; citation correctness | 100% numerical accuracy; ≥90% citation match |
| Agent | Wrong tool calls, infinite loops, unintended side effects | Task completion rate; safety boundary compliance | ≥85% task completion; 0% boundary violations |

These thresholds are starting points, not universal standards. Your domain determines the acceptable error rate. A medical triage classifier needs higher precision than a movie recommendation classifier. The critical discipline is writing the thresholds down before you start testing, so you cannot rationalize away poor results after the fact.
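As a rough illustration of the Classifier row, the sketch below computes per-class precision and recall on a golden set and checks them against written-down thresholds. The function names, the label names, and the idea of passing in a set of high-stakes classes are hypothetical. The 0.95 and 0.85 defaults mirror the starting points in the table, and "overall" is interpreted here as plain accuracy, which is only one possible reading.

```python
from collections import Counter


def per_class_precision_recall(expected, predicted):
    """Return {label: (precision, recall)} for two parallel label lists."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for exp, pred in zip(expected, predicted):
        if exp == pred:
            tp[exp] += 1
        else:
            fp[pred] += 1  # predicted this label, but it was wrong
            fn[exp] += 1   # missed the true label
    scores = {}
    for label in set(expected) | set(predicted):
        p_den = tp[label] + fp[label]
        r_den = tp[label] + fn[label]
        precision = tp[label] / p_den if p_den else 0.0
        recall = tp[label] / r_den if r_den else 0.0
        scores[label] = (precision, recall)
    return scores


def classifier_gate(expected, predicted, high_stakes,
                    high_stakes_min=0.95, overall_min=0.85):
    """Return (passed, failures) for the Classifier gate."""
    failures = []
    scores = per_class_precision_recall(expected, predicted)
    for label in sorted(high_stakes):
        precision, recall = scores.get(label, (0.0, 0.0))
        if precision < high_stakes_min or recall < high_stakes_min:
            failures.append(
                f"{label}: precision={precision:.2f} recall={recall:.2f}"
            )
    accuracy = sum(e == p for e, p in zip(expected, predicted)) / len(expected)
    if accuracy < overall_min:
        failures.append(f"overall accuracy={accuracy:.2f}")
    return (not failures, failures)
```

Because the thresholds are function defaults rather than numbers chosen after looking at results, changing them requires a visible code change, which supports the discipline of committing to them up front.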

3. The MVP Evaluation Contract

An evaluation contract is a written agreement (with yourself, your team, or your stakeholder) that specifies the minimum acceptable performance on your golden evaluation set before the system is exposed to real users. It makes the go/no-go decision objective rather than subjective.

The evaluation contract has four components:

- Golden set size. A minimum number of test cases that cover the primary use case, known edge cases, and adversarial inputs. For most MVPs, 50 to 200 golden cases is a practical starting range. As discussed in Chapter 29, the golden set must be curated by humans, not generated by the model under test.

- Scoring rubric. Each golden case includes expected outputs and a scoring method (exact match, semantic similarity, human judgment, or LLM-as-judge with a calibrated rubric).

- Pass threshold. The minimum score required for the system to graduate. This is the number from the quality gates table above, tailored to your domain.

- Regression baseline. After passing the initial gate, every subsequent change (prompt update, model swap, architecture change) must re-run the golden set and meet or exceed the baseline score. No regressions allowed.
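The four components above can be sketched as a small data structure plus one check function. This is a minimal illustration, not a prescribed format: the class name, field names, and message strings are hypothetical, and the per-case scores are assumed to be numbers between 0 and 1 produced by whatever scoring rubric you chose. The sample numbers mirror the insurance example that follows.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class EvalContract:
    """Written go/no-go terms, agreed before testing starts."""
    min_cases: int                  # golden set size
    pass_threshold: float           # minimum mean score, 0.0 to 1.0
    max_policy_violations: int = 0  # hard limit, independent of the mean


def check_contract(
    contract: EvalContract,
    scores: Sequence[float],
    policy_violations: int,
    baseline: Optional[float] = None,
) -> list:
    """Return the reasons a run fails the contract; an empty list means go."""
    if not scores:
        return ["no golden cases were scored"]
    reasons = []
    if len(scores) < contract.min_cases:
        reasons.append(
            f"golden set too small: {len(scores)} < {contract.min_cases}"
        )
    mean = sum(scores) / len(scores)
    if mean < contract.pass_threshold:
        reasons.append(
            f"mean score {mean:.2f} below threshold {contract.pass_threshold:.2f}"
        )
    if policy_violations > contract.max_policy_violations:
        reasons.append(f"{policy_violations} policy violation(s)")
    # Regression baseline: only checked once a baseline has been recorded.
    if baseline is not None and mean < baseline:
        reasons.append(f"regression: {mean:.2f} < baseline {baseline:.2f}")
    return reasons


contract = EvalContract(min_cases=100, pass_threshold=0.90)
tweaked = [1.0] * 87 + [0.0] * 13  # 87% accuracy on 100 cases
final = [1.0] * 92 + [0.0] * 8     # 92% accuracy on 100 cases
print(check_contract(contract, tweaked, policy_violations=0, baseline=0.91))
print(check_contract(contract, final, policy_violations=0, baseline=0.91))
```

Returning a list of reasons rather than a single boolean keeps the go/no-go decision objective while still telling the team which clause of the contract was broken.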

Who: A prompt engineer on a customer support team at an insurance company, responsible for an AI Drafter that generates reply suggestions.

Situation: The team's evaluation contract stated: "The system must score at least 90% on factual accuracy across 100 golden cases, with zero policy violations, before any user sees a generated reply. After launch, every prompt change must re-run the golden set and maintain the 90% baseline."

Problem: A teammate proposed a prompt tweak that noticeably improved the tone and empathy of replies. The team was enthusiastic, but the golden set re-run showed factual accuracy had dropped from 91% to 87%.

Decision: The evaluation contract said no. The team held the tweak back for further iteration rather than shipping the regression, despite internal pressure ("it sounds so much better!").

Result: After two more iterations, the teammate found a version that preserved the improved tone while maintaining 92% accuracy. The contract prevented a quality regression from reaching users.

Lesson: An explicit evaluation contract depersonalizes quality decisions, replacing subjective debates with a measurable threshold that the whole team agreed to in advance.

4. Monitoring Readiness

Before shipping your MVP, you need observability infrastructure in place. You cannot fix what you cannot see. The observability stack from Chapter 30 provides the full picture; here is the minimum viable monitoring checklist for an MVP launch.

Traces. Every LLM call must be logged with: the prompt sent, the response received, latency, token counts (input and output), model version, and a request ID that can be correlated with user-facing interactions. Tools like LangSmith, Langfuse, or OpenTelemetry with custom spans handle this.
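If you are not yet using one of those tools, a hand-rolled trace can be as small as the sketch below. It is an assumption-heavy illustration: `call_model` is a hypothetical callable that returns the response text plus input and output token counts, `sink` is any function that accepts one JSON line (for example, one that appends to a log file), and the field names are invented for this example rather than taken from any tracing library.

```python
import json
import time
import uuid
from datetime import datetime, timezone


def traced_call(call_model, prompt, model_version, sink):
    """Call the model once and emit one JSON trace line, even on failure.

    call_model: hypothetical callable returning
        (text, input_tokens, output_tokens).
    sink: callable that receives the JSON line.
    """
    request_id = str(uuid.uuid4())
    record = {
        "request_id": request_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "prompt": prompt,
        "response": None,
        "input_tokens": 0,
        "output_tokens": 0,
        "error": None,
    }
    started = time.perf_counter()
    try:
        text, input_tokens, output_tokens = call_model(prompt)
        record.update(
            response=text,
            input_tokens=input_tokens,
            output_tokens=output_tokens,
        )
        return request_id, text
    except Exception as exc:
        record["error"] = repr(exc)
        raise
    finally:
        # Runs on success and failure, so failed calls are traced too.
        record["latency_s"] = round(time.perf_counter() - started, 4)
        sink(json.dumps(record, ensure_ascii=False))
```

Returning the request ID alongside the text lets the caller attach it to the user-facing response, so a later feedback event can be joined back to this trace.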

Metrics. At minimum, track these four numbers in a dashboard updated in near-real-time:

- p50 and p95 latency per endpoint. If your p95 crosses a threshold (say, 5 seconds), you need to know immediately.

- Error rate. What percentage of requests result in a model error, timeout, or application exception?

- Cost per request. Token usage multiplied by model pricing, aggregated hourly and daily. A sudden cost spike means something changed.

- Feedback rate. What percentage of users interact with the feedback mechanism (thumbs up/down, correction, flag)? A drop in feedback rate may indicate the mechanism is broken or hidden.

Alerts. Configure alerts for three conditions before launch:

- Error rate exceeds 5% over a 10-minute window.

- p95 latency exceeds your SLA target for more than 5 minutes.

- Daily cost exceeds 150% of your budgeted amount.

You do not need a full Grafana/Prometheus stack on day one. A structured JSON log file, a simple script that parses it for the four metrics above, and an email alert on threshold breach will carry you through the first weeks. Sophistication should follow traffic, not precede it.
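A "simple script" of that kind might look like the following sketch. It assumes one JSON object per log line with the hypothetical fields `latency_s`, `error`, `cost_usd`, and `feedback`; your log schema will differ. The caller is expected to pass in only the lines for the window of interest (the last 10 minutes for error rate, the current day for cost), and sending the email is left out.

```python
import json
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(log_lines):
    """Compute the four core metrics from structured JSON log lines."""
    records = [json.loads(line) for line in log_lines if line.strip()]
    total = len(records)
    if total == 0:
        return None
    latencies = [r["latency_s"] for r in records]
    return {
        "p50_latency_s": percentile(latencies, 50),
        "p95_latency_s": percentile(latencies, 95),
        "error_rate": sum(1 for r in records if r.get("error")) / total,
        "cost_usd": sum(r.get("cost_usd", 0.0) for r in records),
        "feedback_rate": sum(1 for r in records if r.get("feedback")) / total,
    }


def check_alerts(summary, latency_sla_s, daily_budget_usd):
    """Return a message for each of the three launch alerts that fired."""
    alerts = []
    if summary["error_rate"] > 0.05:
        alerts.append(f"error rate {summary['error_rate']:.1%} exceeds 5%")
    if summary["p95_latency_s"] > latency_sla_s:
        alerts.append(
            f"p95 latency {summary['p95_latency_s']}s exceeds SLA {latency_sla_s}s"
        )
    if summary["cost_usd"] > 1.5 * daily_budget_usd:
        alerts.append(
            f"cost ${summary['cost_usd']:.2f} exceeds 150% of budget"
        )
    return alerts
```

Run on a schedule (cron is enough at this stage), this covers the metrics and alerts listed above without any additional infrastructure.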

5. Fallback and Degradation Strategies

Models are external dependencies. They go down, they slow down, they change behavior after provider updates. Your MVP must handle three failure modes gracefully.

Model unavailable (API error or timeout). The simplest fallback: return a pre-written human response acknowledging the issue and offering an alternative channel. Never show users a raw error message or an empty response. For non-conversational systems (classifiers, routers), queue the request for retry and return a "processing" status.

Model too slow (latency exceeds threshold). Implement a timeout with a fallback path. If the primary model does not respond within your latency budget, either route to a faster (smaller) model or return a cached response if one is available. As covered in Chapter 31, a tiered model strategy (large model for quality, small model for speed) gives you a natural degradation ladder.

Model produces low-confidence or unsafe output. If your system includes a confidence score or a safety classifier, define what happens when the score falls below your threshold. Options include: escalating to a human reviewer, returning a hedged response ("I'm not confident in this answer; here's what I found, but please verify"), or withholding the response entirely with a redirect to human support.
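One way to choose that threshold is to calibrate it on your golden set, as the contract-review team did in Section 1. The sketch below is a simplified illustration under stated assumptions: each eval result is a hypothetical `(confidence, was_correct)` pair, the cutoff is the lowest confidence at which auto-delivered answers reach a target accuracy, and everything below it goes to a human. The function names and the action labels are invented for this example.

```python
def pick_threshold(eval_results, target_accuracy):
    """Lowest confidence cutoff whose auto-delivered answers meet the target.

    eval_results: list of (confidence, was_correct) pairs from the golden set.
    Returns None when no cutoff reaches the target accuracy.
    """
    for cutoff in sorted({conf for conf, _ in eval_results}):
        kept = [ok for conf, ok in eval_results if conf >= cutoff]
        if sum(kept) / len(kept) >= target_accuracy:
            return cutoff
    return None


def route(answer, confidence, cutoff):
    """Deliver confident answers; send the rest to human review, hedged."""
    if confidence >= cutoff:
        return {"action": "deliver", "text": answer}
    hedge = "Here is a preliminary answer; a specialist will follow up.\n\n"
    return {"action": "human_review", "text": hedge + answer}


golden_results = [
    (0.9, True), (0.8, True), (0.7, False), (0.6, True), (0.5, False),
]
cutoff = pick_threshold(golden_results, target_accuracy=1.0)
print(cutoff, route("Clause 4.2 limits liability.", 0.65, cutoff)["action"])
```

A stricter target pushes the cutoff up and sends more traffic to reviewers, so the target accuracy is effectively a dial between review cost and the risk of delivering a wrong answer.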

| Failure Mode | Detection | Fallback Action | User Experience |
|---|---|---|---|
| API timeout | Request exceeds timeout threshold | Retry once, then return canned response | "Our system is momentarily busy. Please try again." |
| Rate limit hit | HTTP 429 from provider | Route to secondary model or queue | Slightly slower response; no visible error |
| Low confidence | Confidence score < threshold | Escalate to human or return hedged answer | "Here is a preliminary answer; a specialist will follow up." |
| Safety filter triggered | Output flagged by guardrail | Suppress output; log for review | "I can't help with that request. Here's what I can do." |
| Provider outage | Multiple consecutive failures | Switch to backup provider or static mode | Reduced functionality with clear messaging |
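The timeout and outage rows can be combined into a single degradation ladder. The following is a minimal sketch, assuming your model clients are exposed as async functions; the three stand-in models at the bottom are placeholders for illustration, not real clients, and the 5-second default budget is an arbitrary example value.

```python
import asyncio
import logging

logger = logging.getLogger(__name__)

CANNED_RESPONSE = "Our system is momentarily busy. Please try again."


async def answer_with_fallback(prompt, models, budget_s=5.0):
    """Try each (name, async_fn) in order; fall back to a canned reply."""
    for name, model in models:
        try:
            text = await asyncio.wait_for(model(prompt), timeout=budget_s)
            return {"source": name, "text": text}
        except asyncio.TimeoutError:
            logger.warning("%s exceeded the %.2fs budget", name, budget_s)
        except Exception:
            logger.exception("%s failed", name)
    # Every rung failed: never surface a raw error or an empty response.
    return {"source": "canned", "text": CANNED_RESPONSE}


# Stand-in models for illustration only.
async def slow_large_model(prompt):
    await asyncio.sleep(1.0)
    return "detailed answer to " + prompt


async def fast_small_model(prompt):
    return "short answer to " + prompt


async def broken_model(prompt):
    raise ConnectionError("provider unavailable")


if __name__ == "__main__":
    ladder = [("primary", slow_large_model), ("secondary", fast_small_model)]
    print(asyncio.run(answer_with_fallback("q", ladder, budget_s=0.05)))
```

Recording which rung produced the answer (the `source` field here) matters for monitoring: a rising share of secondary or canned responses is itself a signal worth alerting on.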

6. User Feedback Loops

The most valuable data your MVP will generate is not model outputs; it is user corrections. Every time a user flags a bad response, corrects a classification, or rephrases a query after an unsatisfying answer, they are writing a new golden test case for you. Building a feedback mechanism is not optional for an MVP; it is the primary learning channel.

Three levels of feedback collection, from simplest to richest:

- Binary signal. Thumbs up / thumbs down on each response. Cheap to implement, cheap for users to provide, but low information density. You know something was wrong but not what.

- Categorical signal. After a thumbs-down, offer a short menu: "Incorrect facts," "Wrong tone," "Too long," "Didn't understand my question," "Other." This costs one extra click and dramatically increases diagnostic value.

- Correction signal. Let the user edit the response or provide the correct answer. This is the gold standard because each correction is a potential golden test case. The cost: users rarely bother unless the interface makes it effortless.

The following code example shows a minimal feedback collection endpoint that logs user corrections and can feed them into your golden evaluation set.

```python
"""Minimal feedback collection endpoint for an AI MVP.

Logs user corrections alongside the original model output,
creating a pipeline from user feedback to golden eval cases.
"""

import json
import logging
from datetime import datetime, timezone
from pathlib import Path
from dataclasses import dataclass, asdict
from typing import Optional

logger = logging.getLogger(__name__)


@dataclass
class FeedbackRecord:
    """A single piece of user feedback on a model response."""

    request_id: str
    timestamp: str
    user_input: str
    model_output: str
    rating: str  # "positive", "negative"
    category: Optional[str]  # "incorrect_facts", "wrong_tone", etc.
    user_correction: Optional[str]  # The corrected output, if provided
    metadata: Optional[dict]  # Session info, model version, etc.


class FeedbackCollector:
    """Collects and stores user feedback, with export to golden eval format.

    Args:
        storage_path: Directory where feedback JSONL files are written.
        golden_export_path: Path to the golden eval JSONL file for new cases.
    """

    def __init__(
        self,
        storage_path: str = "./feedback",
        golden_export_path: str = "./ieb/eval/golden_from_feedback.jsonl",
    ):
        self.storage_path = Path(storage_path)
        self.storage_path.mkdir(parents=True, exist_ok=True)
        self.golden_export_path = Path(golden_export_path)
        self.golden_export_path.parent.mkdir(parents=True, exist_ok=True)

    def record_feedback(self, feedback: FeedbackRecord) -> None:
        """Append a feedback record to the daily log file."""
        today = datetime.now(timezone.utc).strftime("%Y-%m-%d")
        log_file = self.storage_path / f"feedback_{today}.jsonl"

        with open(log_file, "a", encoding="utf-8") as f:
            f.write(json.dumps(asdict(feedback), ensure_ascii=False) + "\n")

        logger.info(
            "Feedback recorded: request_id=%s rating=%s",
            feedback.request_id, feedback.rating,
        )

        # If the user provided a correction, queue it for golden set review
        if feedback.user_correction:
            self._queue_for_golden_set(feedback)

    def _queue_for_golden_set(self, feedback: FeedbackRecord) -> None:
        """Write user corrections as candidate golden eval cases.

        These candidates should be reviewed by a human before being
        promoted to the official golden set.
        """
        candidate = {
            "input": feedback.user_input,
            "expected_output": feedback.user_correction,
            "source": "user_correction",
            "request_id": feedback.request_id,
            "original_model_output": feedback.model_output,
            "timestamp": feedback.timestamp,
            "status": "pending_review",
        }

        with open(self.golden_export_path, "a", encoding="utf-8") as f:
            f.write(json.dumps(candidate, ensure_ascii=False) + "\n")

        logger.info(
            "Golden set candidate queued: request_id=%s", feedback.request_id
        )

    def get_daily_stats(self, date_str: str) -> dict:
        """Return basic feedback statistics for a given date.

        Args:
            date_str: Date in YYYY-MM-DD format.

        Returns:
            Dictionary with counts of positive, negative, and correction feedback.
        """
        log_file = self.storage_path / f"feedback_{date_str}.jsonl"
        stats = {"positive": 0, "negative": 0, "corrections": 0, "total": 0}

        if not log_file.exists():
            return stats

        with open(log_file, "r", encoding="utf-8") as f:
            for line in f:
                record = json.loads(line)
                stats["total"] += 1
                if record["rating"] == "positive":
                    stats["positive"] += 1
                else:
                    stats["negative"] += 1
                if record.get("user_correction"):
                    stats["corrections"] += 1

        return stats


# --- Example usage ---
if __name__ == "__main__":
    collector = FeedbackCollector()

    # Simulate a user correction
    feedback = FeedbackRecord(
        request_id="req_abc123",
        timestamp=datetime.now(timezone.utc).isoformat(),
        user_input="What is the return policy for electronics?",
        model_output="All electronics can be returned within 90 days.",
        rating="negative",
        category="incorrect_facts",
        user_correction="Electronics can be returned within 30 days with "
                        "original packaging. Opened software is non-refundable.",
        metadata={"model": "gpt-4o-mini", "session_id": "sess_xyz"},
    )
    collector.record_feedback(feedback)

    # Check daily stats
    today = datetime.now(timezone.utc).strftime("%Y-%m-%d")
    print(collector.get_daily_stats(today))
```

The get_daily_stats method provides a quick health check on user satisfaction trends.

Research on recommendation systems at Netflix and Spotify consistently shows that the first 1,000 pieces of explicit user feedback improve model quality more than the next 100,000 implicit signals (clicks, dwell time). The same pattern applies to LLM products: a single user correction that says "the refund policy is 30 days, not 90" is worth more than a thousand thumbs-up clicks. This is why correction-level feedback, though harder to collect, is disproportionately valuable for your golden eval set.

7. Internal Prototype vs. External MVP

The distinction is worth stating explicitly, because teams often blur the line and ship a prototype while calling it an MVP.

An internal prototype is a system where you control the inputs. You run your eval suite, you test with your scenarios, you know the boundaries. The audience is you, your team, and perhaps a friendly stakeholder who understands the limitations.

An external MVP is a system where users control the inputs. They will send queries you never imagined. They will paste in long documents when you expected short questions. They will use languages, slang, and abbreviations outside your test data. They will retry failed requests ten times in rapid succession. They will screenshot your worst outputs and post them publicly.

The gap between these two states is bridged by three activities:

- Adversarial testing. Before launch, have someone outside the development team try to break the system. Give them explicit permission to be creative. Their failure cases become golden eval cases.

- Load testing. Simulate realistic traffic patterns. Not just average load, but peak load. What happens when 50 users submit queries in the same minute? Do you hit rate limits? Does latency degrade gracefully?

- Cost projection. Multiply your average cost-per-request by your expected daily volume, then multiply by three for safety margin. If the resulting monthly bill exceeds your budget, you need a cheaper model tier or a caching layer before launch.
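The load-testing question above ("What happens when 50 users submit queries in the same minute?") can be explored with a small burst harness before you reach for a dedicated tool. The sketch below is illustrative only: `make_stub_endpoint` is a stand-in that rejects requests beyond a concurrency limit, where in practice `send` would be an async function that calls your real API, and the limit of 10 and the service time are arbitrary example values.

```python
import asyncio
import time


async def run_burst(send, n_requests):
    """Fire n_requests concurrently and report latencies and failures."""

    async def one(i):
        started = time.perf_counter()
        try:
            await send(f"query {i}")
            ok = True
        except Exception:
            ok = False
        return ok, time.perf_counter() - started

    results = await asyncio.gather(*(one(i) for i in range(n_requests)))
    latencies = sorted(latency for _, latency in results)
    return {
        "sent": n_requests,
        "failed": sum(1 for ok, _ in results if not ok),
        "median_latency_s": latencies[len(latencies) // 2],
        "max_latency_s": latencies[-1],
    }


def make_stub_endpoint(max_concurrent=10, service_time_s=0.05):
    """Stand-in for your API: rejects requests beyond max_concurrent."""
    state = {"in_flight": 0}

    async def send(query):
        if state["in_flight"] >= max_concurrent:
            raise RuntimeError("429 rate limited")
        state["in_flight"] += 1
        try:
            await asyncio.sleep(service_time_s)  # simulated model latency
        finally:
            state["in_flight"] -= 1
        return "ok"

    return send


if __name__ == "__main__":
    print(asyncio.run(run_burst(make_stub_endpoint(), n_requests=50)))
```

Against the stub, a burst of 50 simultaneous requests overwhelms a limit of 10, which is exactly the kind of result you want to discover before launch rather than after.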

8. Architecture Hardening

Your prototype likely makes a single LLM call per request with no error handling, no caching, and no cost controls. Production requires several hardening layers, each covered in depth in Chapter 31. Here is the minimum hardening checklist for an MVP.

Retry logic with exponential backoff. When the model API returns a transient error (HTTP 429, 500, 503), retry with increasing delays: 1 second, 2 seconds, 4 seconds, then fail gracefully. Never retry indefinitely; cap at 3 attempts.
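A minimal sketch of this retry policy follows. It reads "cap at 3 attempts" as up to three retries after the initial call, with waits of 1, 2, and 4 seconds; adjust if you count attempts differently. `TransientError` is a hypothetical exception standing in for whatever your client library raises on HTTP 429, 500, or 503, and the `sleep` parameter exists only so the delays can be replaced in tests.

```python
import time


class TransientError(Exception):
    """Stand-in for a retryable provider error (HTTP 429, 500, 503)."""


def call_with_retry(fn, max_retries=3, base_delay_s=1.0, sleep=time.sleep):
    """Call fn(), retrying transient errors with exponential backoff."""
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except TransientError:
            if attempt == max_retries:
                raise  # out of retries: let the caller show its fallback
            sleep(base_delay_s * 2 ** attempt)  # 1s, 2s, 4s with defaults
```

Only the transient error type is caught. Errors such as an invalid request or an authentication failure will not succeed on retry, so they propagate immediately.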

Rate limiting on your own API. Protect yourself from both abuse and accidental overuse. A simple per-user, per-minute rate limit prevents a single user (or a misbehaving integration) from consuming your entire token budget.
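A per-user, per-minute limit can be sketched with a sliding window of recent request times, as below. This is an in-memory illustration that assumes a single process; the class name and the default of 20 requests per 60 seconds are hypothetical, and a deployment with several servers would need shared storage instead.

```python
import time
from collections import defaultdict, deque


class PerUserRateLimiter:
    """Sliding-window limiter: at most max_requests per window per user."""

    def __init__(self, max_requests=20, window_s=60.0, clock=time.monotonic):
        self.max_requests = max_requests
        self.window_s = window_s
        self.clock = clock
        self._hits = defaultdict(deque)  # user_id -> recent request times

    def allow(self, user_id):
        """Return True and record the request if the user is under the limit."""
        now = self.clock()
        hits = self._hits[user_id]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_s:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True
```

Call `allow(user_id)` before invoking the model and return an HTTP 429 (or a friendly "slow down" message) when it returns False, so rejected requests never consume tokens.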

Response caching. If the same query (or a semantically equivalent query) arrives multiple times, consider serving a cached response instead of making a new LLM call. Even a short-lived cache (5 minutes) can dramatically reduce costs for high-traffic systems. Be mindful: caching is appropriate for factual lookups but dangerous for personalized or time-sensitive responses.
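The simplest version of this is an exact-match cache with a time-to-live, sketched below. Note the limits of the illustration: it matches queries only after normalizing case and whitespace, so it does not detect semantically equivalent queries, and it is an in-memory, single-process structure. `call_model` is a hypothetical callable that takes the query and returns the response text.

```python
import time


class TTLCache:
    """Exact-match response cache with a time-to-live per entry."""

    def __init__(self, ttl_s=300.0, clock=time.monotonic):
        self.ttl_s = ttl_s
        self.clock = clock
        self._entries = {}  # normalized query -> (expires_at, response)

    @staticmethod
    def _key(query):
        # Collapse case and whitespace; this is NOT semantic matching.
        return " ".join(query.lower().split())

    def get(self, query):
        key = self._key(query)
        entry = self._entries.get(key)
        if entry is None:
            return None
        expires_at, response = entry
        if self.clock() >= expires_at:
            del self._entries[key]  # expired: force a fresh model call
            return None
        return response

    def put(self, query, response):
        self._entries[self._key(query)] = (self.clock() + self.ttl_s, response)


def cached_answer(query, cache, call_model):
    """Serve from cache when possible; otherwise call the model and store."""
    cached = cache.get(query)
    if cached is not None:
        return cached
    response = call_model(query)
    cache.put(query, response)
    return response
```

For personalized or time-sensitive responses, either bypass `cached_answer` entirely or include the user or date in the query key so one user's answer is never served to another.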

Cost controls. Set a hard daily spending cap with your LLM provider. Most providers support usage limits or billing alerts. Additionally, track token usage per request in your application code so you can identify expensive patterns (long system prompts, unnecessary context stuffing) and optimize them.

Input validation and truncation. Set maximum input lengths. If a user pastes a 50,000-token document into a field designed for short questions, truncate gracefully and inform the user rather than sending the full payload to the model (and paying for all those tokens).
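A rough sketch of graceful truncation follows. It estimates tokens as characters divided by four, which is only a crude assumption for English text; a real implementation would count with the tokenizer for your model. The function name, the 2,000-token default, and the notice wording are all hypothetical.

```python
def truncate_input(text, max_tokens=2000, chars_per_token=4):
    """Return (possibly truncated text, notice for the user or None).

    Token count is approximated as len(text) / chars_per_token.
    """
    max_chars = max_tokens * chars_per_token
    if len(text) <= max_chars:
        return text, None
    notice = (
        f"Your input was longer than about {max_tokens} tokens, "
        "so only the first part was used."
    )
    return text[:max_chars], notice
```

Returning the notice alongside the text keeps the two concerns together: the model receives a bounded payload, and the interface has something specific to show the user instead of silently dropping content.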

A common pattern: the prototype costs $0.003 per request because you tested with short inputs against a small model. In production, real user inputs are 3x longer, you added a verbose system prompt, and you switched to a larger model for quality. Now each request costs $0.04. At 10,000 requests per day, that is $400/day instead of the $30/day you budgeted. Architecture hardening (caching, input truncation, model routing) is not a nice-to-have; it is a financial survival mechanism.
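The arithmetic in that example, together with the three-times safety margin from the cost projection step in Section 7, fits in a few lines. The function names and the 30-day month are assumptions made for illustration.

```python
def daily_cost(cost_per_request_usd, requests_per_day):
    """Expected spend per day at a given per-request cost."""
    return cost_per_request_usd * requests_per_day


def monthly_projection(cost_per_request_usd, requests_per_day,
                       safety_factor=3, days_per_month=30):
    """Monthly spend with a safety margin applied."""
    daily = daily_cost(cost_per_request_usd, requests_per_day)
    return daily * days_per_month * safety_factor


budgeted = daily_cost(0.003, 10_000)  # prototype assumptions
actual = daily_cost(0.04, 10_000)     # production reality
print(f"budgeted ${budgeted:,.2f}/day, actual ${actual:,.2f}/day")
print(f"overrun factor: {actual / budgeted:.1f}x")
print(f"monthly projection with margin: ${monthly_projection(0.04, 10_000):,.2f}")
```

With the numbers from the example, the per-request increase from $0.003 to $0.04 is a roughly thirteenfold overrun, and the padded monthly projection at the production cost is $36,000.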

9. The MVP Launch Checklist

Bringing it all together, here is a concrete checklist to review before your first external user touches the system. Not every item is required for every product, but each "no" should be a deliberate decision with a documented rationale, not an oversight.

- Golden eval set contains at least 50 cases covering core scenarios and known edge cases.

- Evaluation contract is written, with explicit pass thresholds per metric.

- System meets all pass thresholds on the current golden set.

- Traces are being captured for every LLM call (prompt, response, latency, tokens, cost).

- Dashboard shows the four core metrics: latency, error rate, cost, feedback rate.

- Alerts are configured for error rate spike, latency breach, and cost overrun.

- Fallback behavior is defined and tested for: API timeout, rate limit, low confidence, safety filter trigger, provider outage.

- User feedback mechanism is live (at minimum, thumbs up/down).

- Feedback pipeline routes corrections to candidate golden eval cases.

- Retry logic with exponential backoff is implemented.

- Rate limiting is active on your own API.

- Input validation truncates or rejects excessively long inputs.

- Daily cost cap is set with the LLM provider.

- Adversarial testing has been performed by someone outside the dev team.

- Load testing has verified behavior under expected peak traffic.

Key Takeaways

- Different role types need different quality gates. A Drafter MVP needs factual accuracy checks; a Classifier needs per-class precision and recall; an Agent needs boundary-violation testing. Use the Role Canvas from Chapter 36 to identify your role type, then apply the corresponding gate.

- Write the evaluation contract before testing, not after. Define minimum acceptable performance on your golden set as an explicit, written commitment. This prevents rationalizing poor results under deadline pressure.

- Monitoring is a prerequisite, not a follow-up. Traces, metrics, and alerts must be live before the first external user arrives. You cannot improve what you cannot measure, and you cannot fix what you cannot see.

- Graceful degradation is non-negotiable. Models will be slow, wrong, or unavailable. Every failure mode needs a planned fallback that protects user experience and system safety.

- User corrections are your most valuable data. Build feedback loops that capture not just satisfaction signals but actual corrections, and route those corrections into your evaluation pipeline.

- Architecture hardening prevents cost surprises. Retry logic, rate limiting, caching, and input validation are the minimum infrastructure layer between your prototype and a production-viable system.

What Comes Next

This section concludes Chapter 37: Building and Steering AI Products. You now have the tools to move from idea (Chapter 36) through prototype (Section 37.2) to a production-ready MVP. In Chapter 38: Shipping and Scaling AI Products, you will learn what happens after launch: the economics of running an AI product at scale, strategies for avoiding provider lock-in, and the capstone lab where you ship a complete AI product from prompt to production.

Bibliography

Ries, E. (2011). The Lean Startup: How Today's Entrepreneurs Use Continuous Innovation to Create Radically Successful Businesses. Crown Business.

Shankar, V., Garcia, R., Hellerstein, J. M., et al. (2024). "Who Validates the Validators? Aligning LLM-Assisted Evaluation of LLM Outputs with Human Preferences." arXiv:2404.12272

Langfuse Team. (2024). "Open Source LLM Observability and Evaluation." langfuse.com

Nygard, M. T. (2018). Release It! Design and Deploy Production-Ready Software. 2nd ed. Pragmatic Bookshelf.