"The art of prompting is less about telling a machine what to do and more about learning what it already knows how to do, if only you ask the right way."

Prompt, Silver-Tongued AI Agent

Chapter Overview

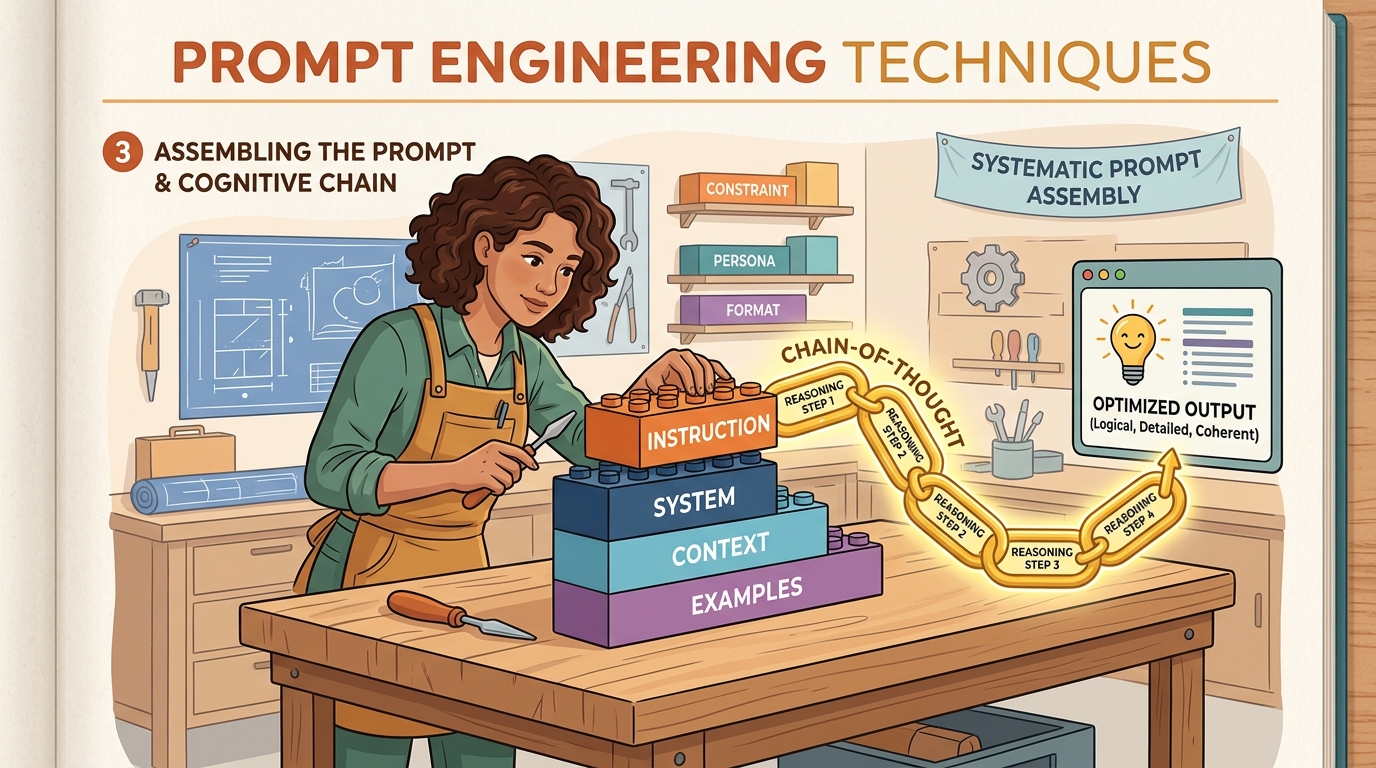

Prompting is programming with natural language. Every interaction with a large language model begins with a prompt, and the quality of that prompt determines the quality of the output. Yet most practitioners treat prompt engineering as an ad hoc trial-and-error process rather than a systematic discipline. This chapter changes that by presenting prompt engineering as a structured craft with well-defined techniques, measurable outcomes, and principled optimization strategies.

We begin with the foundational techniques: zero-shot and few-shot prompting, role assignment, system prompt design, and template construction. Next, we explore reasoning strategies that unlock the model's ability to solve complex problems: chain-of-thought prompting, self-consistency, tree-of-thought exploration, and the ReAct framework that interleaves reasoning with action. The third section covers advanced patterns including self-reflection loops, meta-prompting, prompt chaining, and automated prompt optimization with DSPy. The fourth section addresses the critical topics of prompt security and optimization: injection attacks, defense strategies, structured output enforcement, prompt compression, and systematic testing. The chapter closes with prompting strategies for reasoning and multimodal models, followed by automatic prompt and context engineering.

By the end of this chapter, you will have a practical toolkit for designing, composing, and securing prompts across a wide range of applications, from simple classification tasks to complex multi-step reasoning pipelines.

Prompt engineering is the most accessible and often the most cost-effective way to improve LLM output quality. The techniques here, including few-shot prompting, chain-of-thought, and structured output generation, apply directly to RAG systems (Chapter 20), agents (Chapter 22), and evaluation (Chapter 29).
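As a small taste of what "programming with natural language" can look like, the sketch below builds a zero-shot or few-shot prompt from a template with variable injection. It is a minimal illustration under stated assumptions, not the chapter's own implementation: the sentiment task, the example reviews, and the names `FEW_SHOT_TEMPLATE` and `build_prompt` are all hypothetical, and no model is called.

```python
from string import Template

# Hypothetical template for a sentiment classification task. The task wording,
# labels, and placeholder names are illustrative assumptions.
FEW_SHOT_TEMPLATE = Template(
    "Classify the sentiment of the review as positive or negative.\n\n"
    "${examples}"
    "Review: ${review}\n"
    "Sentiment:"
)


def build_prompt(review: str, examples: list[tuple[str, str]]) -> str:
    """Render the prompt; an empty examples list yields a zero-shot prompt."""
    # Each demonstration uses the same layout as the final query, so the
    # model sees a consistent pattern to continue.
    shots = "".join(
        f"Review: {text}\nSentiment: {label}\n\n" for text, label in examples
    )
    return FEW_SHOT_TEMPLATE.substitute(examples=shots, review=review)


if __name__ == "__main__":
    demo = [
        ("Loved every minute.", "positive"),
        ("A waste of money.", "negative"),
    ]
    print(build_prompt("The battery died after a week.", demo))
```

Keeping the template separate from the data makes it possible to change the examples or the instruction independently and to compare variants, which is the habit the rest of the chapter builds on.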

Learning Objectives

- Design effective zero-shot, few-shot, and role-based prompts with measurable quality improvements

- Construct system prompts and prompt templates with variable injection for production applications

- Implement chain-of-thought, self-consistency, and tree-of-thought reasoning strategies

- Apply the ReAct framework to interleave reasoning with external tool use

- Build self-reflection and iterative refinement loops that improve output quality across multiple passes

- Use meta-prompting and prompt chaining to decompose complex tasks into manageable sub-tasks

- Identify and defend against prompt injection attacks (direct, indirect, and jailbreak variants)

- Enforce structured output with JSON mode, Pydantic models, and the Instructor library (covered in depth in Section 10.2; this chapter addresses the security and reliability dimensions of structured output)

- Apply automated prompt optimization using DSPy, OPRO, and prompt compression techniques like LLMLingua

- Implement context engineering with MCP (Model Context Protocol) for dynamic context assembly in production applications

- Design prompt testing suites with regression tests, A/B experiments, and version control

Prerequisites

- Chapter 05: Decoding Strategies (temperature, sampling, how generation works)

- Chapter 10: Working with LLM APIs (API calls, message formats, parameter tuning)

- Basic Python programming and familiarity with the OpenAI or Anthropic client libraries

- Conceptual understanding of how transformer models process and generate text

Sections

- 11.1 Foundational Prompt Design: Zero-shot, one-shot, and few-shot prompting. System prompts and role assignment. Instruction clarity, constraints, output format specification. Prompt templates and variable injection. Handling edge cases: refusals, hallucinations, verbosity. Lab: iteratively refine prompts for a classification task and measure accuracy improvements.

- 11.2 Chain-of-Thought & Reasoning Techniques: Chain-of-Thought (CoT) prompting and its variants. Self-consistency with majority voting. Tree-of-Thought (ToT) for structured exploration with backtracking. Step-back prompting. ReAct: interleaving reasoning and action. Lab: implement CoT, self-consistency, and ToT for math reasoning and compare accuracy.

- 11.3 Advanced Prompt Patterns: Self-reflection and iterative refinement loops (generate, critique, revise). Meta-prompting and prompt chaining for complex workflows. Constitutional AI-style self-checks. Reflexion with memory-augmented self-reflection. Automated prompt optimization with DSPy and OPRO. Lab: build a reflection loop for code generation and measure pass@1 improvement.

- 11.4 Prompt Security & Optimization: Prompt injection attacks (direct, indirect, jailbreaks). Defense strategies and input sanitization. JSON mode and schema enforcement. Pydantic models with the Instructor library. Prompt compression and token optimization. Prompt versioning, A/B testing, and regression testing. Lab: use Instructor + Pydantic to extract structured data; then use DSPy to auto-optimize a multi-step prompt pipeline.

- 11.5 Prompting Reasoning & Multimodal Models: Prompting strategies for reasoning models (o1, o3, DeepSeek-R1). Extended thinking and budget tokens. Multimodal prompting with images, audio, and video inputs. Adapting prompt engineering techniques for next-generation model capabilities.

- 11.6 Automatic Prompt & Context Engineering: Programmatic prompt optimization with DSPy (MIPROv2, BootstrapFewShot), OPRO, TextGrad, and EvoPrompt. Prompt compression with LLMLingua and LongLLMLingua. Context engineering with MCP and dynamic context assembly. When to use automatic vs. manual prompt engineering.

What's Next?

In the next chapter, Chapter 12: Hybrid ML and LLM Systems, we explore frameworks for deciding when to use classical ML, LLMs, or a hybrid approach.