"I keep my weights close, but my gradients closer. Sharing is caring, as long as nobody sees the data."

A Farsighted Guard, Gradient-Hoarding AI Agent

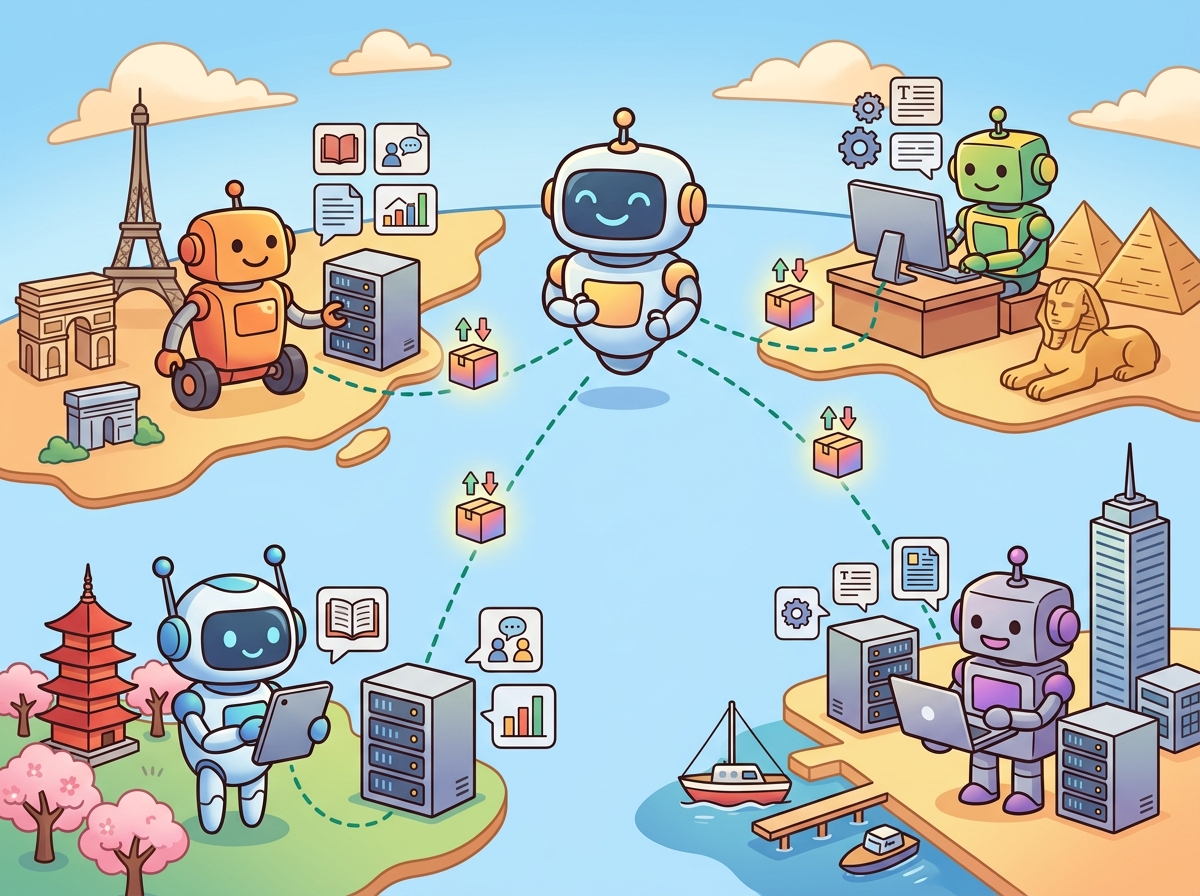

Federated learning (FL) enables multiple parties to collaboratively train or fine-tune a model without sharing their raw data. Each participant trains locally on their own data and shares only model updates (gradients or adapter weights) with a central server, which aggregates them into a global model. For LLMs, this addresses a fundamental tension: organizations want models that benefit from diverse, domain-rich data, but privacy regulations (Section 32.4), competitive concerns, and data sovereignty laws prevent them from pooling that data in one location.

Prerequisites

This section builds on the privacy and differential privacy concepts from Section 32.12 and the distributed training fundamentals from Appendix T. Familiarity with fine-tuning workflows and LoRA is assumed.

1. Federated Learning Fundamentals

In standard (centralized) training, all data is collected on a central server. Federated learning inverts this: the model travels to the data, not the other way around.

The FedAvg Algorithm

McMahan et al. (2017) introduced Federated Averaging (FedAvg), the foundational FL algorithm. Each communication round proceeds as follows:

- The server sends the current global model $w_t$ to a subset of $K$ clients

- Each client $k$ trains the model on its local data for $E$ local epochs, producing updated weights $w_{t+1}^k$

- Clients send their updates $\Delta w^k = w_{t+1}^k - w_t$ back to the server

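- The server aggregates the updates using a weighted average: $w_{t+1} = w_t + \sum_{k=1}^{K} \frac{n_k}{n} \Delta w^k$, where $n_k$ is the number of training examples on client $k$ and $n = \sum_{k=1}^{K} n_k$

Weighting each update by $n_k / n$ means clients with more data contribute proportionally more to the global update; when all clients hold the same amount of data, this reduces to the simple mean $\frac{1}{K} \sum_{k=1}^{K} \Delta w^k$.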
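The server-side aggregation step can be sketched in a few lines of NumPy. This is a minimal illustration, assuming each client's update $\Delta w^k$ arrives as a flat parameter vector and its weight is its local example count $n_k$; the function name `fedavg_aggregate` is ours, not from any library.

```python
import numpy as np

def fedavg_aggregate(w_global, client_deltas, client_sizes):
    """Return w_{t+1} = w_t + sum_k (n_k / n) * delta_k."""
    sizes = np.asarray(client_sizes, dtype=float)
    weights = sizes / sizes.sum()                  # n_k / n, sums to 1
    stacked = np.stack(client_deltas)              # shape (K, num_params)
    weighted_delta = np.tensordot(weights, stacked, axes=1)  # sum_k weight_k * delta_k
    return w_global + weighted_delta

w_t = np.zeros(3)
deltas = [np.array([1.0, 0.0, 0.0]), np.array([0.0, 1.0, 0.0])]
sizes = [300, 100]  # client 0 holds three times as much data as client 1
w_next = fedavg_aggregate(w_t, deltas, sizes)
print(w_next)  # approximately [0.75, 0.25, 0.0]
```

Client 0 pulls the global model three times as hard as client 1 because it holds three times as many examples.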

Google's Gboard keyboard uses federated learning to improve next-word prediction across its large Android user base. Your phone keeps typing-derived training examples locally and, typically while it is idle, charging, and on an unmetered network, trains a small model update that is sent back through secure aggregation rather than as raw text. Google learns that people are starting to type "rizz" more often, but never sees any individual's messages. It is autocomplete training at planetary scale, powered by phones that are mostly sitting on chargers overnight.

Key Challenges

- Non-IID data: Client datasets are typically not independent and identically distributed. A cardiology hospital sees different conditions than a pediatric one. This heterogeneity pulls client updates in different directions (client drift), slowing convergence.

- Communication cost: Transmitting a full model update for a 7B-parameter model requires ~28 GB per client per round in FP32 (7B parameters × 4 bytes). With hundreds of clients and many rounds, bandwidth becomes the bottleneck.

- Stragglers: Clients with slower hardware or limited connectivity delay each round. Asynchronous FL protocols mitigate this but introduce staleness: some updates are computed from an older version of the global model.

- Privacy leakage: Even gradient updates can leak information about training data through gradient inversion attacks (Section 32.12). Differential privacy, secure aggregation, or both are needed.
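One simple way to handle stragglers asynchronously is to merge each client's model as soon as it arrives, but shrink its mixing weight the staler it is. The sketch below assumes a polynomial staleness discount with illustrative constants `alpha` and `a` (similar rules appear in asynchronous FL work such as FedAsync); `async_merge` is a hypothetical helper, not a library function.

```python
import numpy as np

def async_merge(w_global, w_client, staleness, alpha=0.6, a=0.5):
    """Mix a client model into the global model, discounted by staleness.

    staleness: number of global versions published since the client
    downloaded the model it trained on (0 = fresh).
    """
    mix = alpha * (staleness + 1) ** (-a)  # polynomial decay: staler update -> smaller weight
    return (1 - mix) * w_global + mix * w_client, mix

w_global = np.zeros(2)
w_client = np.ones(2)
for s in [0, 3, 15]:
    merged, mix = async_merge(w_global, w_client, staleness=s)
    print(f"staleness {s:2d}: mixing weight {mix:.2f}, merged {merged}")
```

A fresh update moves the global model by 60% of the way toward the client; one that is three versions stale moves it only half as far.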

2. Federated Fine-Tuning of LLMs

Full federated pretraining of LLMs is prohibitively expensive: the communication cost of exchanging billions of parameters each round is impractical. Instead, the dominant approach is federated fine-tuning, where a pretrained base model is adapted to domain-specific data held by multiple parties.

Federated LoRA

LoRA adapters are a natural fit for federated learning because they are small. Instead of exchanging 7B parameters each round, clients exchange only the low-rank adapter matrices (typically 1-10M parameters, about 4-40 MB in FP32), cutting per-round communication by roughly three orders of magnitude.
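The savings are easy to check with back-of-the-envelope arithmetic. The numbers below assume a Llama-2-7B-like layout (32 layers, hidden size 4096) with rank-16 LoRA on `q_proj` and `v_proj`, matching the configuration used in the code below; other architectures and target modules will give different counts.

```python
BYTES_FP32 = 4

def lora_params(num_layers, d_in, d_out, rank, modules_per_layer):
    # Each adapted weight W (d_out x d_in) gets B (d_out x r) and A (r x d_in)
    return num_layers * modules_per_layer * rank * (d_in + d_out)

full_params = 7_000_000_000
adapter_params = lora_params(num_layers=32, d_in=4096, d_out=4096, rank=16, modules_per_layer=2)

full_gb = full_params * BYTES_FP32 / 1e9
adapter_mb = adapter_params * BYTES_FP32 / 1e6
print(f"full update: {full_gb:.0f} GB, LoRA update: {adapter_mb:.1f} MB, "
      f"ratio: {full_params / adapter_params:.0f}x")
```

Both figures are per client and per direction; a round in which the server broadcasts the model and every client uploads an update costs roughly twice that per participating client.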

```python
import copy

import torch

# NOTE: `apply_lora` and `initialize_lora` are placeholders for whatever
# adapter library you use (e.g., PEFT); they are not defined here.

def federated_lora_round(global_adapter, client_datasets, base_model, config):
    """One round of federated LoRA fine-tuning (all clients simulated in one process)."""
    client_adapters = []
    client_sizes = []

    for dataset in client_datasets:
        # Each client starts from a fresh copy of the current global adapter
        local_adapter = copy.deepcopy(global_adapter)
        local_model = apply_lora(base_model, local_adapter)  # base weights stay frozen
        local_model.train()

        # Local training: E epochs over this client's batches only
        optimizer = torch.optim.AdamW(local_adapter.parameters(), lr=config.lr)
        for _ in range(config.local_epochs):
            for batch in dataset:
                loss = local_model(**batch).loss
                loss.backward()
                optimizer.step()
                optimizer.zero_grad()

        # Snapshot this client's trained parameters by name
        client_adapters.append(dict(local_adapter.named_parameters()))
        # Aggregation weight n_k. If `dataset` is a DataLoader, len() counts
        # batches; use len(dataset.dataset) to weight by examples instead.
        client_sizes.append(len(dataset))

    # Server aggregation: weighted average of adapter weights (FedAvg)
    total_size = sum(client_sizes)
    new_adapter = copy.deepcopy(global_adapter)
    with torch.no_grad():
        for name, param in new_adapter.named_parameters():
            weighted_sum = sum(
                (size / total_size) * params[name]
                for params, size in zip(client_adapters, client_sizes)
            )
            param.copy_(weighted_sum)

    return new_adapter

# Training loop (config, client_datasets, and base_model are assumed to exist)
global_adapter = initialize_lora(rank=16, target_modules=["q_proj", "v_proj"])
for round_num in range(config.num_rounds):
    global_adapter = federated_lora_round(
        global_adapter, client_datasets, base_model, config
    )
```

Heterogeneous Federated Fine-Tuning

In practice, FL clients vary in hardware capacity. Some may have A100 GPUs; others may have consumer-grade cards or even CPUs. Heterogeneous FL allows clients to use different adapter configurations (e.g., different LoRA ranks) based on their compute budget, with the server reconciling the mismatched adapter shapes during aggregation (rank-adaptive aggregation).
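Rank-adaptive aggregation has to confront a subtlety that also affects the `federated_lora_round` code: it averages the $A$ and $B$ matrices separately, but the weight update a LoRA adapter applies is the product $BA$, and the average of the factors is generally not the average of the products. FFA-LoRA (Federated Freeze-A LoRA) sidesteps this by keeping the shared, randomly initialized $A$ matrices frozen and training and averaging only $B$. Another option, which also handles clients with different ranks, is for the server to form each client's full-size product from the uploaded factors, average those, and send each client back a truncated-SVD factorization at its own rank. The NumPy sketch below illustrates both points on random matrices; the sizes, equal client weights, and SVD-based redistribution are illustrative assumptions, one possible scheme among several.

```python
import numpy as np

rng = np.random.default_rng(0)
d_out, d_in = 64, 64

def make_client(rank):
    # Hypothetical client adapter: B (d_out x r), A (r x d_in)
    return rng.normal(size=(d_out, rank)), rng.normal(size=(rank, d_in))

# 1) Averaging factors is not averaging updates (equal-rank case)
(B1, A1), (B2, A2) = make_client(8), make_client(8)
avg_of_products = 0.5 * (B1 @ A1 + B2 @ A2)
product_of_avgs = (0.5 * (B1 + B2)) @ (0.5 * (A1 + A2))
print("mismatch:", np.linalg.norm(avg_of_products - product_of_avgs))

# 2) Rank-heterogeneous aggregation: average full-size products,
#    then redistribute a rank-r factorization via truncated SVD
def aggregate_products(adapters, weights):
    return sum(w * (B @ A) for (B, A), w in zip(adapters, weights))

def redistribute(delta_w, rank):
    U, S, Vt = np.linalg.svd(delta_w, full_matrices=False)
    root = np.sqrt(S[:rank])
    return U[:, :rank] * root, root[:, None] * Vt[:rank]  # B_new, A_new

ranks = [4, 8, 16]
adapters = [make_client(r) for r in ranks]
delta_w = aggregate_products(adapters, weights=[1 / 3, 1 / 3, 1 / 3])
for r in ranks:
    B_new, A_new = redistribute(delta_w, r)
    err = np.linalg.norm(delta_w - B_new @ A_new) / np.linalg.norm(delta_w)
    print(f"rank {r:2d}: B {B_new.shape}, A {A_new.shape}, relative error {err:.2f}")
```

Low-rank clients receive a lossy (best rank-$r$) approximation of the aggregate update, while a client whose rank covers the combined rank of all uploads recovers it exactly. Because the server multiplies the factors itself, client upload bandwidth is unchanged.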

3. Privacy-Preserving Techniques in Federated LLM Training

Federated learning alone does not guarantee privacy. Gradient updates can be reverse-engineered to reconstruct training data, especially for text. Additional privacy mechanisms are essential.

Differential Privacy with Federated Learning (DP-FL)

Combining differential privacy with FL adds formal privacy guarantees. Each client clips and adds noise to their gradients before sending them to the server:

- Gradient clipping: Bound the L2 norm of each client's update to a maximum value $C$, limiting any single client's influence on the aggregate (client-level DP); example-level guarantees instead require clipping per-example gradients, as in DP-SGD

- Noise addition: Add calibrated Gaussian noise $\mathcal{N}(0, \sigma^2 C^2 I)$ to the clipped gradients

- Privacy accounting: Track the cumulative privacy budget $(\epsilon, \delta)$ across rounds using the moments accountant or Rényi DP (RDP) accounting

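The privacy-utility tradeoff is more severe for LLMs than for smaller models. Noise is added independently to every coordinate, so for an update with $d$ trainable parameters its norm grows roughly as $\sigma C \sqrt{d}$, while the clipped signal stays bounded by $C$; fine-tuning quality degrades quickly as that noise grows. This is one reason DP fine-tuning is often restricted to small adapters.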
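The client-side clip-then-noise step can be sketched as follows, assuming each update is a flat NumPy vector. `clip_update` and `add_gaussian_noise` are illustrative names, and privacy accounting (turning $\sigma$ and the number of rounds into $(\epsilon, \delta)$) is omitted entirely. The loop at the end shows the dimensionality effect: the clipped signal never exceeds $C$, while the noise norm tracks $\sigma C \sqrt{d}$.

```python
import numpy as np

def clip_update(delta, clip_norm):
    """Scale delta down so its L2 norm is at most clip_norm (no-op if already smaller)."""
    norm = np.linalg.norm(delta)
    return delta * min(1.0, clip_norm / max(norm, 1e-12))

def add_gaussian_noise(update, clip_norm, noise_multiplier, rng):
    """Add N(0, sigma^2 C^2 I) noise, with sigma = noise_multiplier and C = clip_norm."""
    return update + rng.normal(0.0, noise_multiplier * clip_norm, size=update.shape)

rng = np.random.default_rng(0)
C, sigma = 1.0, 1.0
for d in [1_000, 100_000]:
    clipped = clip_update(rng.normal(size=d), C)  # hypothetical raw update
    noise_norm = np.linalg.norm(add_gaussian_noise(clipped, C, sigma, rng) - clipped)
    print(f"d={d}: ||clipped|| = {np.linalg.norm(clipped):.2f}, "
          f"||noise|| = {noise_norm:.1f}, sigma*C*sqrt(d) = {sigma * C * np.sqrt(d):.1f}")
```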

Secure Aggregation

Secure aggregation protocols ensure the server can compute the aggregate update without seeing any individual client's contribution. This is achieved through cryptographic techniques, such as pairwise masking combined with secret sharing or homomorphic encryption, that let the server add contributions without ever seeing any of them individually. Google's implementation in Gboard uses secure aggregation for federated next-word prediction on mobile devices.
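One classic construction is pairwise masking: every pair of clients shares a random mask, which one adds and the other subtracts, so each masked upload looks random but the masks cancel in the sum. The toy sketch below assumes updates have already been quantized to integers, works modulo $2^{32}$, and assumes each pair's seed is pre-agreed (the `seed_for_pair` lambda is hypothetical). Real protocols derive pairwise seeds through key agreement and use secret sharing to recover when clients drop out; both are omitted here.

```python
import random

MOD = 2**32  # all arithmetic is modulo a fixed integer

def pairwise_masks(client_ids, dim, seed_for_pair):
    """Client i adds mask(i, j) for j > i and subtracts it for j < i, so masks cancel in the sum."""
    masks = {i: [0] * dim for i in client_ids}
    for i in client_ids:
        for j in client_ids:
            if i < j:
                rng = random.Random(seed_for_pair(i, j))  # both i and j can derive this seed
                m = [rng.randrange(MOD) for _ in range(dim)]
                masks[i] = [(a + b) % MOD for a, b in zip(masks[i], m)]
                masks[j] = [(a - b) % MOD for a, b in zip(masks[j], m)]
    return masks

updates = {0: [3, 1, 4], 1: [1, 5, 9], 2: [2, 6, 5]}  # quantized integer updates
masks = pairwise_masks(list(updates), dim=3, seed_for_pair=lambda i, j: 1000 * i + j)
masked = {k: [(u + m) % MOD for u, m in zip(updates[k], masks[k])] for k in updates}

# The server only sees `masked`; summing them cancels every pairwise mask
aggregate = [sum(col) % MOD for col in zip(*masked.values())]
print(aggregate)  # [6, 12, 18]
```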

4. Applications and Use Cases

| Domain | Use Case | Why Federated? |
|---|---|---|
| Healthcare | Clinical NLP across hospital networks | HIPAA restricts sharing patient records; each hospital trains locally on its EHR data |
| Finance | Fraud detection language models across banks | Regulatory silos prevent data sharing; FL enables collaborative model improvement |
| Mobile / Edge | On-device keyboard prediction | User typing data is highly personal; Google's Gboard pioneered this approach |
| Legal | Contract analysis across law firms | Attorney-client privilege prevents data pooling; FL trains on distributed corpora |
| Multi-national | Global models trained across data sovereignty boundaries | GDPR, China's PIPL, and other regulations restrict cross-border data transfer |

5. Frameworks and Tools

- Flower (flwr): One of the most widely used open-source FL frameworks. Framework-agnostic (supports PyTorch, TensorFlow, JAX), with built-in strategies for FedAvg, FedProx, and FedOpt. Provides simulation mode for development and deployment mode for production.

- NVIDIA FLARE: Enterprise FL framework with built-in privacy (DP, homomorphic encryption), designed for healthcare and regulated industries. Integrates with NVIDIA's NeMo for LLM fine-tuning.

- PySyft (OpenMined): Privacy-preserving ML framework supporting federated learning, differential privacy, and secure multi-party computation. Python-native with remote execution capabilities.

- FedML: Platform supporting federated training on heterogeneous devices (cloud, edge, mobile) with MLOps integration for monitoring and experiment tracking.

```python
import flwr as fl
import torch
from peft import LoraConfig, get_peft_model
from transformers import AutoModelForCausalLM, AutoTokenizer

# Client: each participant runs this in its own process on its local data.
# `train_local` is a placeholder for an ordinary local training loop.
class LLMClient(fl.client.NumPyClient):
    def __init__(self, model_name, local_dataset):
        self.tokenizer = AutoTokenizer.from_pretrained(model_name)
        base = AutoModelForCausalLM.from_pretrained(model_name)
        lora_config = LoraConfig(r=16, lora_alpha=32, target_modules=["q_proj", "v_proj"])
        self.model = get_peft_model(base, lora_config)
        self.dataset = local_dataset

    def _lora_params(self):
        # Adapter parameters in a fixed order, so client and server agree on layout
        return [(name, p) for name, p in self.model.named_parameters() if "lora" in name]

    def get_parameters(self, config):
        """Return only LoRA adapter parameters as NumPy arrays."""
        # detach(): .numpy() fails on tensors that require grad
        return [p.detach().cpu().numpy() for _, p in self._lora_params()]

    def set_parameters(self, parameters):
        """Overwrite local LoRA parameters with the global adapter from the server."""
        with torch.no_grad():
            for (_, p), new_val in zip(self._lora_params(), parameters):
                p.copy_(torch.from_numpy(new_val))

    def fit(self, parameters, config):
        """Load the global adapter, train locally for E epochs, return the update."""
        self.set_parameters(parameters)
        train_local(self.model, self.dataset, epochs=config.get("local_epochs", 3))
        return self.get_parameters(config), len(self.dataset), {}

# Server (a separate process): aggregation strategy
strategy = fl.server.strategy.FedAvg(
    fraction_fit=0.5,         # sample 50% of available clients per round
    min_fit_clients=3,        # ...but never fewer than 3
    min_available_clients=5,  # wait until 5 clients are connected
)

# Launch federated training; each client then connects to this address
fl.server.start_server(
    server_address="0.0.0.0:8080",
    config=fl.server.ServerConfig(num_rounds=20),
    strategy=strategy,
)
```

6. Challenges and Limitations

- Data heterogeneity (non-IID): The most persistent challenge. When client data distributions differ significantly, FedAvg can converge to a suboptimal model or diverge entirely. Algorithms like FedProx (adds a proximal term penalizing divergence from the global model) and SCAFFOLD (uses control variates to correct client drift) partially address this.

- Model poisoning: A malicious client can submit adversarial updates that degrade or backdoor the global model. Byzantine-robust aggregation methods (e.g., coordinate-wise median, trimmed mean) provide some defense.

- Evaluation without data access: The server cannot directly evaluate the global model on client data. Techniques include federated evaluation (clients evaluate locally and report metrics) and server-side holdout sets built from public or consented data.

- Convergence speed: FL typically requires many more communication rounds than centralized training to reach equivalent quality, especially with non-IID data and privacy constraints.

- Intellectual property concerns: Even without raw data sharing, the resulting model encodes knowledge from all participants. Ownership and usage rights of the federated model must be defined contractually.
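Two of the mitigations above fit in a few lines of NumPy. The sketch is illustrative: the quadratic toy loss, learning rate, and five hard-coded client updates are assumptions chosen to make the behavior visible. In an LLM setting, the FedProx proximal term would be added to the training loss over the trainable (e.g., adapter) parameters.

```python
import numpy as np

def fedprox_local_update(w_global, grad_fn, mu, lr=0.1, steps=200):
    """Gradient descent on f_k(w) + (mu / 2) * ||w - w_global||^2."""
    w = w_global.copy()
    for _ in range(steps):
        w -= lr * (grad_fn(w) + mu * (w - w_global))  # proximal term pulls w toward w_global
    return w

def coordinate_median(updates):
    return np.median(np.stack(updates), axis=0)

def trimmed_mean(updates, trim):
    """Drop the `trim` largest and smallest values per coordinate, average the rest."""
    ordered = np.sort(np.stack(updates), axis=0)
    return ordered[trim:len(updates) - trim].mean(axis=0)

# FedProx on a toy client whose loss is 0.5 * ||w - c||^2 (gradient w - c)
c = np.array([2.0, 2.0])
w_g = np.zeros(2)
print(fedprox_local_update(w_g, lambda w: w - c, mu=0.0))  # drifts all the way to c
print(fedprox_local_update(w_g, lambda w: w - c, mu=1.0))  # stops at (c + mu * w_g) / (1 + mu)

# Robust aggregation with one poisoned update among five
updates = [np.array(u) for u in ([1.0, 1.0], [1.1, 0.9], [0.9, 1.0], [1.0, 1.1], [100.0, -100.0])]
print(np.mean(np.stack(updates), axis=0))  # dragged far off by the attacker
print(coordinate_median(updates))          # [1. 1.]
print(trimmed_mean(updates, trim=1))       # close to [1, 1]
```

Larger `mu` keeps each client closer to the global model at the cost of fitting its local data less tightly; the trimmed mean tolerates up to `trim` attackers per coordinate.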

Federated learning does not guarantee privacy by default. While raw data stays local, the model updates (gradients) shared during federated training can leak information about individual training examples through gradient inversion attacks. Federated learning must be combined with secure aggregation, differential privacy, or both to provide meaningful privacy guarantees. Treating federated learning as inherently private is a dangerous oversimplification.

- Federated learning enables collaborative LLM training across organizations without sharing raw data, preserving data sovereignty and privacy.

- Federated fine-tuning of LLMs uses parameter-efficient methods (LoRA adapters) to reduce the communication overhead of exchanging model updates.

- Privacy-preserving techniques (secure aggregation, differential privacy, homomorphic encryption) add formal guarantees on top of the federated learning framework.

- Key applications include healthcare (multi-hospital models), finance (cross-institution fraud detection), and edge devices (on-device personalization).

- Communication efficiency and data heterogeneity remain the primary challenges; adapter-based approaches and adaptive aggregation strategies help address both.

Federated instruction tuning and federated RLHF are active research areas. FedIT (Zhang et al., 2024) showed that federating the instruction-tuning stage, where multiple organizations contribute instruction-response pairs without sharing them, produces models competitive with centrally trained ones.

Combining FL with preference optimization (Chapter 17) enables collaborative alignment without sharing sensitive preference data.

Self-Check Questions

Why is federated LoRA more practical than federated full fine-tuning for LLMs?

Full fine-tuning requires exchanging all model parameters (e.g., 28 GB for a 7B model in FP32) with every participating client each communication round, which is prohibitive for bandwidth. LoRA adapters are typically 1-10M parameters (about 4-40 MB in FP32), cutting communication by roughly three orders of magnitude while often achieving comparable fine-tuning quality. This makes FL practical even over ordinary internet connections rather than requiring data center interconnects.

What is the non-IID problem in federated learning, and why does it affect LLMs?

Non-IID (non-independent and identically distributed) data means client datasets have different distributions: a medical institution sees clinical text, a legal firm sees contracts, a bank sees financial reports. When clients optimize on very different data distributions, their gradient updates point in different directions, causing the averaged global model to converge slowly or to a suboptimal point. For LLMs, this is especially acute because language varies enormously across domains, registers, and vocabularies.

Why is federated learning alone insufficient for privacy, and what additional mechanisms are needed?

Gradient updates can leak training data through gradient inversion attacks: an adversary can reconstruct training text from observed gradients. Additional mechanisms include differential privacy (clipping updates and adding calibrated noise before aggregation, which bounds what the result can reveal about any participant) and secure aggregation (cryptographic protocols that prevent the server from seeing individual updates). Gradient compression or sparsification can reduce the information carried by each update but provides no formal guarantee on its own. These protections increase computational cost, and differential privacy in particular typically reduces model quality.

What Comes Next

This concludes Chapter 32 on safety, ethics, and regulation. In Chapter 33, we shift from protecting AI systems to deploying them strategically: building business cases, measuring ROI, and making build-vs-buy decisions that align technical capabilities with organizational goals.

McMahan, B. et al. (2017). "Communication-Efficient Learning of Deep Networks from Decentralized Data." AISTATS.

The foundational paper introducing Federated Averaging (FedAvg). Demonstrated that averaging model updates from distributed clients can achieve competitive performance while keeping data local. The standard starting point for all FL research.

Zhang, J. et al. (2024). "Towards Building the FederatedGPT: Federated Instruction Tuning." ICASSP.

Proposes FedIT, a framework for federated instruction tuning of LLMs. Shows that instruction tuning can be effectively distributed across organizations while maintaining model quality competitive with centralized training.

Kuang, W. et al. (2024). "FedML-LLM: Build Your Own Large Language Models on Proprietary Data using the FedML Platform."

Practical guide to federated LLM training using the FedML platform, covering LoRA integration, privacy mechanisms, and heterogeneous device support.

Beutel, D. et al. (2020). "Flower: A Friendly Federated Learning Framework."

Introduces the Flower framework for federated learning. Framework-agnostic design supports PyTorch, TensorFlow, and JAX with minimal code changes. The most widely used open-source FL framework.

Li, T. et al. (2020). "Federated Optimization in Heterogeneous Networks." MLSys.

Introduces FedProx, which adds a proximal term to the client objective to handle data heterogeneity (non-IID distributions). A key algorithm for practical federated learning with diverse clients.