"They said emergent abilities were like magic. Turns out, most magic is just a measurement trick with better lighting."

Frontier, Illusion Dispelling AI Agent

The question of whether large language models exhibit genuinely "emergent" abilities has profound implications for AI safety, capability forecasting, and resource allocation. If capabilities appear suddenly and unpredictably at scale, then we cannot reliably anticipate what the next generation of models will be able to do. If emergence is a measurement artifact, then capabilities are more predictable than they appear, and the field can plan accordingly. This debate sits at the intersection of empirical ML research, philosophy of science, and practical engineering decisions about when to trust (or distrust) scaling curves.

Prerequisites

This section builds on scaling laws and emergent abilities from Section 06.3: Scaling Laws and evaluation methodology from Section 29.1: Evaluation Fundamentals. Familiarity with basic probability and the concept of phase transitions is helpful but not required.

1. The Original Emergence Claim

In 2022, Jason Wei and colleagues at Google published a landmark paper cataloging abilities that appeared to emerge suddenly as language models scaled up. The paper, "Emergent Abilities of Large Language Models," documented over 130 tasks where performance was essentially at chance for smaller models but jumped sharply once models exceeded a certain size threshold (Wei et al., 2022).

The examples were striking. Three-digit addition: models with fewer than approximately 10 billion parameters scored near zero, while models above that threshold achieved reasonable accuracy. Chain-of-thought reasoning: small models produced incoherent intermediate steps, but models beyond a critical scale suddenly generated logical reasoning chains. Few-shot translation between rare language pairs, analogical reasoning, and multi-step arithmetic all seemed to "turn on" at specific scale thresholds.

The authors defined emergence carefully: "an ability is emergent if it is not present in smaller models but is present in larger models." The key claim was not merely that bigger models perform better (that is expected from scaling laws), but that certain abilities appeared discontinuously, with near-zero performance suddenly jumping to meaningful accuracy. This pattern could not be predicted by extrapolating from smaller models.

Why Emergence Mattered

The emergence narrative had immediate consequences for the field. If capabilities truly appear unpredictably, then:

- Safety planning becomes harder. You cannot anticipate which dangerous capabilities (persuasion, deception, autonomous planning) might emerge at the next scale increment.

- Investment decisions become speculative. Labs spend billions on training runs partly on the hope that new emergent abilities will appear, but there is no way to guarantee the investment will produce qualitatively new capabilities.

- Evaluation is insufficient. If you test a capability at one scale and find it absent, you cannot conclude it will remain absent at 10x the scale.

- Theoretical understanding is incomplete. The scaling laws from Section 06.3 predict smooth loss curves, so emergent abilities suggest that loss is a poor proxy for task-level capabilities.

The emergence framing dominated AI discourse through 2023. Media coverage amplified the narrative, and it became common to hear claims that AI models were developing "unexpected" capabilities at each new scale frontier.

If you plot enough benchmarks with binary scoring, even a goldfish's swimming ability would look "emergent" past a certain tank size. The data was always there; the ruler just had too few tick marks.

2. The Mirage Hypothesis

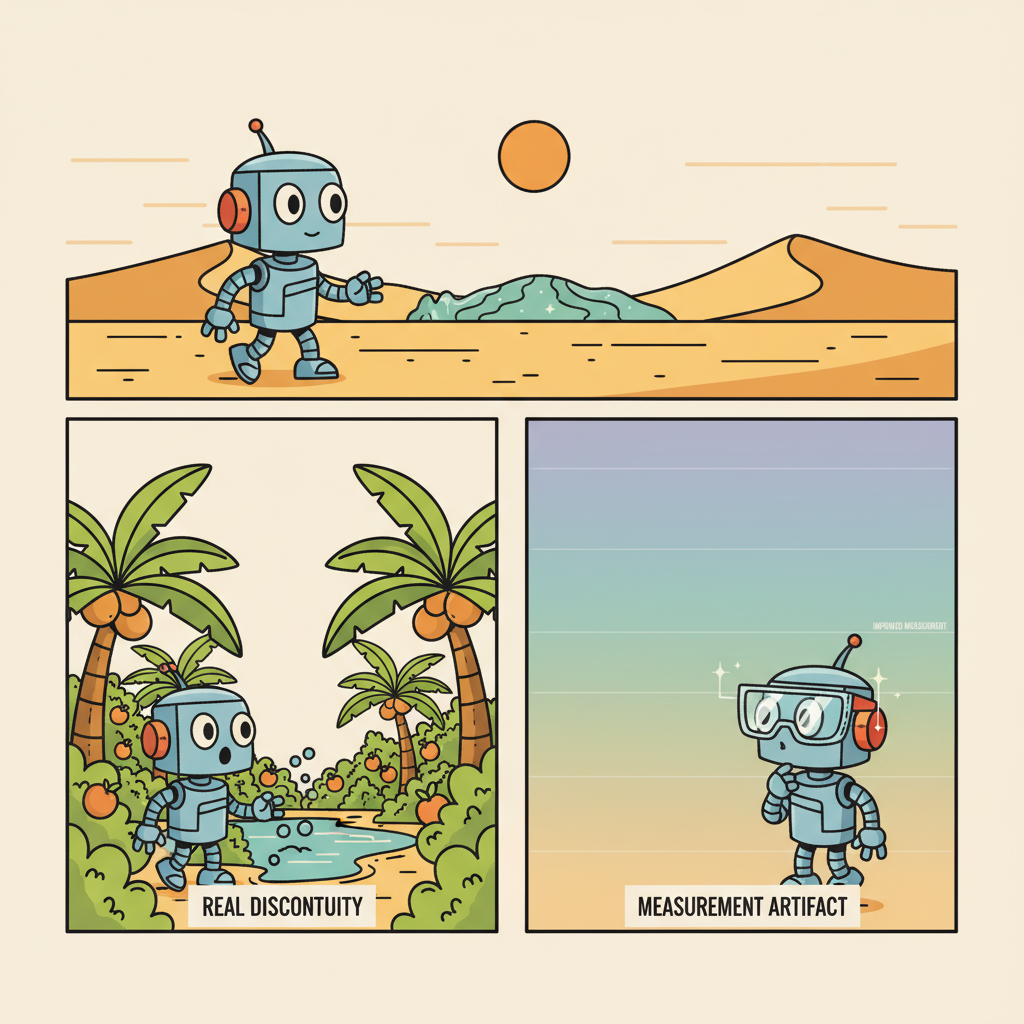

In late 2023, Rylan Schaeffer, Brando Miranda, and Sanmi Koyejo published "Are Emergent Abilities of Large Language Models a Mirage?" at NeurIPS, presenting a sharply contrasting interpretation of the same data. Their central argument: the apparent discontinuity in emergent abilities is an artifact of the metrics used to measure them, not a property of the models themselves.

The Metric Choice Argument

The Schaeffer et al. critique rests on a careful distinction between the model's internal capabilities and the metric used to measure those capabilities. Consider three-digit addition. If you score the task as "exact match" (the entire answer must be correct), then a model that gets two of three digits right scores zero. As the model improves smoothly, its probability of getting all three digits right transitions from near-zero to high in a seemingly sharp jump. But this sharpness is a property of the metric, not the model.

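The authors demonstrated this with a simple mathematical argument. If a model's per-token accuracy improves smoothly with scale (as predicted by scaling laws), then any metric that requires multiple tokens to all be correct will exhibit a sharp, nonlinear transition. The reason is that the probability of a conjunction (all tokens correct) is roughly the product of the per-token probabilities: for an n-token answer with per-token accuracy p, exact-match accuracy is approximately p^n if token errors are independent. That product stays near zero while p is moderate and then climbs steeply as p approaches 1, even when p itself rises only linearly.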
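The conjunction argument can be checked numerically. The sketch below assumes tokens are scored independently, so exact-match accuracy on an n-token answer is the per-token accuracy raised to the power n. The linear accuracy schedule and the choice of 10 tokens are illustrative assumptions, not figures from Schaeffer et al.

```python
# Illustrative only: per-token accuracy rises linearly across scale steps
# (an assumed schedule), and exact match requires every token to be correct.
# Independence between tokens is a simplifying assumption.

def exact_match(p: float, n_tokens: int) -> float:
    """Probability that all n_tokens are correct, assuming independence."""
    return p ** n_tokens

n_tokens = 10
per_token = [0.50 + 0.05 * i for i in range(10)]  # 0.50, 0.55, ..., 0.95

for p in per_token:
    print(f"per-token {p:.2f} -> exact match {exact_match(p, n_tokens):.4f}")
```

Under these assumptions the per-token curve gains five points at every step, while exact match stays below 1% for the first few steps and only reaches roughly 0.60 at a per-token accuracy of 0.95.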

To test this hypothesis empirically, the authors re-evaluated the same tasks using continuous metrics (such as token-level accuracy, Brier score, or partial credit). When they did so, the sharp transitions largely disappeared. Performance improved smoothly and predictably with scale.
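To make the metric contrast concrete, here is a minimal sketch that scores the same predictions two ways. The helper names and the toy addition outputs are hypothetical, and position-wise character accuracy stands in for the token-level metrics mentioned above.

```python
def exact_match_score(pred: str, target: str) -> float:
    """1.0 only if the whole answer is correct, else 0.0."""
    return 1.0 if pred == target else 0.0

def char_accuracy(pred: str, target: str) -> float:
    """Fraction of positions that match, normalized by the longer string."""
    longest = max(len(pred), len(target))
    if longest == 0:
        return 1.0
    hits = sum(1 for p, t in zip(pred, target) if p == t)
    return hits / longest

# Hypothetical outputs for "123 + 456 = ?" from three model sizes.
target = "579"
predictions = {"small": "612", "medium": "569", "large": "579"}

for name, pred in predictions.items():
    em = exact_match_score(pred, target)
    ca = char_accuracy(pred, target)
    print(f"{name:>6}: exact match {em:.0f}, character accuracy {ca:.2f}")
```

On this toy data the exact-match column reads 0, 0, 1, while the character-level column reads 0.00, 0.67, 1.00: the medium model's partial progress is invisible to the discrete metric.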

The Researcher Degrees of Freedom Problem

A secondary argument concerned selection bias. With hundreds of benchmarks available, researchers naturally highlight the ones that show interesting patterns. Tasks that improve smoothly with scale are unremarkable and less likely to be reported. Tasks that appear to jump are noteworthy and get published. This creates a systematic bias toward reporting emergence even if the underlying population of tasks is mostly smooth.

Schaeffer et al. also noted that the number of scale points in the original Wei et al. analysis was small (often only 4 to 6 model sizes). With so few data points, it is difficult to distinguish a true discontinuity from a smooth curve that merely appears sharp due to sparse sampling.
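The sparse-sampling point can be illustrated by evaluating one smooth curve at different densities. The logistic curve, its midpoint, and the parameter range below are assumptions chosen for illustration; they are not fitted to any real model family.

```python
import math

def smooth_accuracy(log10_params: float) -> float:
    """A smooth logistic curve in log10(parameters); purely illustrative."""
    return 1.0 / (1.0 + math.exp(-3.0 * (log10_params - 10.0)))

def largest_jump(n_points: int, lo: float = 8.0, hi: float = 12.0) -> float:
    """Largest accuracy gain between adjacent evaluated model sizes."""
    xs = [lo + (hi - lo) * i / (n_points - 1) for i in range(n_points)]
    ys = [smooth_accuracy(x) for x in xs]
    return max(b - a for a, b in zip(ys, ys[1:]))

for n in (4, 6, 40):
    print(f"{n:>2} model sizes: largest adjacent jump = {largest_jump(n):.2f}")
```

With four evenly spaced sizes, this smooth curve shows a gain of roughly 0.76 between two adjacent points; with forty sizes, no single step moves accuracy by more than about 0.08.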

3. Resolution Attempts: Where the Debate Stands

The emergence debate has not been cleanly resolved. Instead, the field has converged on a more nuanced position that acknowledges valid points on both sides.

Smooth Capabilities, Sharp Tasks

The emerging consensus (no pun intended) distinguishes between two claims:

- Model capabilities improve smoothly with scale. Internal representations, per-token predictions, and continuous metrics all show gradual improvement. This is consistent with scaling laws and with the Schaeffer et al. critique.

- Task-level performance can exhibit sharp transitions. When tasks have threshold structure (requiring multiple sub-capabilities to all be present simultaneously), the transition from "cannot do" to "can do" can be genuinely sharp in practice, even if the underlying capabilities improved smoothly.

This distinction matters because practitioners care about task-level performance. A customer service chatbot that gets 2 out of 3 digits right in an order number is useless. A coding assistant that writes syntactically valid but logically incorrect code is worse than one that refuses to answer. In practical terms, threshold effects are real even if the underlying mechanism is smooth.

Phase Transitions in Complex Systems

Several research groups have drawn analogies to phase transitions in physics and statistical mechanics. Water does not gradually become ice; it undergoes a sharp transition at a critical temperature, even though the molecular dynamics change smoothly. Similarly, a neural network's ability to perform multi-step reasoning may have a critical threshold: once the model's per-step accuracy exceeds a certain value, the probability of completing a multi-step chain crosses from negligible to substantial.

Arora and Goyal (2023) formalized this intuition, showing that for compositional tasks (where success requires chaining multiple operations), a smooth improvement in per-operation accuracy produces a phase transition in task accuracy. The location of this phase transition is predictable in principle, but in practice depends on knowing the task's compositional structure, which is often unclear in advance.
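As a rough illustration of why the threshold location depends on compositional depth, the sketch below assumes a task of k independent steps that must all succeed, so task accuracy is p^k and the per-step accuracy needed to hit a target is target^(1/k). This is a simplified model for intuition, not the framework from Arora and Goyal.

```python
def required_step_accuracy(target_task_acc: float, k_steps: int) -> float:
    """Per-step accuracy p such that p ** k_steps equals target_task_acc.

    Assumes every step must succeed and that steps fail independently.
    """
    return target_task_acc ** (1.0 / k_steps)

for k in (1, 2, 5, 10, 20):
    p_star = required_step_accuracy(0.5, k)
    print(f"{k:>2} steps: per-step accuracy >= {p_star:.3f} for 50% task accuracy")
```

In this toy model a 5-step task needs per-step accuracy of about 0.87 to reach 50% task accuracy, and a 20-step task needs about 0.97, which is why the threshold is hard to locate without knowing the depth k.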

The "Jagged Frontier" of Capabilities

Research by Fabrizio Dell'Acqua, Ethan Mollick, and colleagues introduced the useful metaphor of a "jagged frontier" to describe LLM capabilities (Dell'Acqua et al., 2023). Rather than a smooth boundary between what a model can and cannot do, the capability boundary is irregular: a model might excel at tasks that seem hard (writing legal briefs) while failing at tasks that seem easy (counting letters in a word).

This jaggedness creates practical challenges for deployment. You cannot assume that because a model handles complex financial analysis, it will also handle simple date arithmetic correctly. Each capability must be evaluated independently, and the results at one scale do not smoothly extrapolate to predict the capability profile at the next scale.

The jagged frontier concept aligns with both sides of the emergence debate. The frontier is jagged partly because of metric effects (some tasks have threshold structure) and partly because of genuine capability gaps (some skills require specific training data that may be absent or underrepresented).

4. What This Means for Practitioners

Regardless of how the emergence debate resolves theoretically, practitioners need actionable guidance. Here are the key takeaways:

Do Not Trust Scaling Extrapolations Blindly

Even if capabilities improve smoothly at the token level, task-level performance can surprise you. A model that fails at a task today may succeed tomorrow with a small scale increase, or it may not. The safest approach is to evaluate each critical capability empirically at the target scale rather than extrapolating from smaller models.

Use Continuous Metrics Alongside Discrete Ones

If you are evaluating a model and see zero performance on a task, switch to a continuous metric before concluding that the model lacks the capability entirely. Token-level accuracy, partial credit scoring, and calibration metrics can reveal latent capability that discrete metrics miss. This connects directly to the evaluation frameworks in Section 29.1.
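One continuous metric that fits this advice is the Brier score: the mean squared difference between the predicted probability and the 0/1 outcome, where lower is better. The probabilities below are made-up values for two hypothetical models that both score zero on thresholded accuracy.

```python
from typing import Sequence

def brier_score(probs: Sequence[float], outcomes: Sequence[int]) -> float:
    """Mean squared error between predicted probabilities and 0/1 outcomes."""
    if len(probs) != len(outcomes) or len(probs) == 0:
        raise ValueError("probs and outcomes must be non-empty and equal length")
    return sum((p - y) ** 2 for p, y in zip(probs, outcomes)) / len(probs)

def thresholded_accuracy(probs: Sequence[float], outcomes: Sequence[int],
                         threshold: float = 0.5) -> float:
    """Accuracy after converting each probability to a hard 0/1 prediction."""
    hits = sum(int(p >= threshold) == y for p, y in zip(probs, outcomes))
    return hits / len(probs)

outcomes = [1, 1, 1, 1]                  # the correct label is 1 every time
small_model = [0.05, 0.10, 0.08, 0.12]   # hypothetical probabilities
larger_model = [0.35, 0.40, 0.45, 0.42]  # hypothetical probabilities

for name, probs in (("small", small_model), ("larger", larger_model)):
    print(f"{name:>6}: accuracy {thresholded_accuracy(probs, outcomes):.2f}, "
          f"Brier {brier_score(probs, outcomes):.3f}")
```

Both hypothetical models have 0% thresholded accuracy, yet the Brier score falls from about 0.83 to about 0.36, which is the kind of latent progress a discrete metric hides.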

Plan for the Jagged Frontier

When deploying LLM systems, identify the specific capabilities your application requires and test each one independently. Do not assume that general benchmark performance predicts task-specific performance. Build guardrails for the tasks where the model is likely to fail, even if it excels at superficially similar tasks.

Beware of Emergence Hype

When a vendor or research lab claims that their new model has "emergent" capabilities, ask: What metric was used? How many scale points were evaluated? Does the capability persist under continuous metrics? Is there a plausible mechanistic explanation? Critical evaluation of emergence claims is essential for sound investment and deployment decisions.

Think of emergence like an image that appears blurry at low resolution. At 100x100 pixels, you cannot identify the object. At 200x200, you still cannot. At 400x400, suddenly you recognize a face. The face was always "there" in the underlying data; it was your measurement resolution (pixel count) that created the apparent discontinuity. Similarly, many "emergent" abilities may be smooth improvements that only become useful (and thus visible) once they cross a practical threshold. The question is whether all emergent abilities follow this pattern, or whether some represent genuinely novel computations that smaller models cannot perform in any form.

Scaling laws might have fundamental limits, and those limits may differ across capabilities. The power-law relationships between compute and loss (from Section 06.3) are empirical observations, not physical laws. They describe what happened in the past, not what will happen at scales we have not yet reached. Several potential limits are approaching simultaneously: the data wall (we are running out of high-quality internet text), the energy wall (frontier training runs consume the power output of small cities), and the diminishing returns wall (each doubling of compute yields smaller absolute gains). The test-time compute scaling axis offers a partial escape route, but it introduces per-query costs that change the economics entirely, as explored in Section 34.2.

5. Open Questions

Several questions remain genuinely open as of early 2026:

- Are there capabilities with no smooth precursor? The Schaeffer et al. critique explains many cases, but it is unclear whether all emergent abilities reduce to metric effects. Some researchers argue that certain reasoning capabilities (e.g., self-correction, strategic planning) may genuinely require a minimum model complexity with no smooth analog at smaller scales.

- Can we predict phase transitions? If we knew the compositional structure of a task, we could predict where the threshold would occur. But for most real-world tasks, the compositional structure is unknown. Developing methods to predict capability thresholds from task structure is an active research direction.

- Does emergence depend on architecture? Most emergence studies focus on dense transformer models. It is unclear whether the same patterns hold for mixture-of-experts architectures, state-space models, or other alternatives discussed in Section 34.2.

- What role does training data play? Some apparent emergent abilities may reflect the distribution of training data rather than model scale per se. A model might "suddenly" acquire a capability because the relevant training data only becomes well-represented above a certain dataset size, not because the model's architecture crossed a threshold.

The weight of evidence favors the "smooth capabilities, sharp metrics" interpretation for the majority of documented emergent abilities. The Schaeffer et al. critique is methodologically sound, and the metric-choice explanation is both parsimonious and predictive. However, many researchers argue it would be premature to conclude that all emergence is a mirage. The compositional phase transition argument (Arora and Goyal, 2023) suggests that genuine threshold effects can arise from smooth improvements, and these thresholds are real in practice even if they are predictable in theory. For practitioners, the safest position is to treat emergence as a measurement and evaluation challenge rather than a mystical property of scale. Investing in better evaluation leads to fewer surprises about what models can and cannot do.

Exercises

Consider a task that requires a model to correctly generate a 5-token answer. Suppose the model's per-token accuracy improves roughly linearly with log(parameters), from 60% at 1B parameters to 95% at 100B parameters.

- Calculate the exact-match accuracy (all 5 tokens correct) at 1B, 10B, and 100B parameters, assuming token accuracies of 60%, 80%, and 95% respectively.

- Plot or sketch the exact-match accuracy curve. Does it look "emergent"?

- Now calculate the partial credit score (fraction of tokens correct) at each scale. Does this look emergent?

- What does this tell you about the choice of evaluation metrics in capability assessments?

Answer

1. Exact-match accuracy: At 1B: 0.60^5 = 0.0778 (7.8%). At 10B: 0.80^5 = 0.3277 (32.8%). At 100B: 0.95^5 = 0.7738 (77.4%).

2. The exact-match curve rises from ~8% to ~77% across two orders of magnitude of scale. On a plot with few data points, this could appear as a sharp jump, especially if the 1B data point is rounded to "near zero."

3. Partial credit: At 1B: 60%. At 10B: 80%. At 100B: 95%. This is a smooth, gradual improvement with no suggestion of emergence.

4. The choice of metric fundamentally shapes whether a capability appears emergent. Exact-match metrics amplify threshold effects because they require conjunction of multiple correct sub-outputs. Continuous metrics reveal the smooth underlying improvement. When evaluating models, always report both types of metrics to avoid misleading conclusions.
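The arithmetic in this answer can be reproduced in a few lines. The sketch uses the token accuracies stated in the exercise and assumes token errors are independent, so exact match is the per-token accuracy raised to the fifth power.

```python
# Token accuracies as given in the exercise; independence is assumed.
token_acc = {"1B": 0.60, "10B": 0.80, "100B": 0.95}
n_tokens = 5

results = {}
for size, p in token_acc.items():
    exact = p ** n_tokens   # all five tokens correct
    partial = p             # expected fraction of tokens correct
    results[size] = (exact, partial)
    print(f"{size:>4}: exact match {exact:.4f}, partial credit {partial:.2f}")
```

The exact-match column comes out as 0.0778, 0.3277, and 0.7738, matching the figures above, while partial credit simply tracks the per-token accuracy.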

A research lab publishes a paper claiming that their new 500B parameter model exhibits "emergent planning ability" that was absent in their 100B model. The evidence is that the 500B model can solve multi-step planning puzzles (evaluated with exact-match scoring) at 45% accuracy, while the 100B model scored 2%. Only two model sizes were tested. No continuous metrics were reported.

- List at least three alternative explanations for this result that do not require genuine emergence.

- What additional experiments would you request before accepting the emergence claim?

- How would you design an evaluation protocol that could distinguish genuine emergence from metric artifacts?

Answer

1. Alternative explanations: (a) Metric effect: multi-step planning requires all steps to be correct, so exact-match scoring amplifies a smooth per-step improvement into a sharp transition. (b) Training data effect: the larger model may have been trained on more planning-related data (e.g., code, mathematical proofs), not just more data in general. (c) Sparse sampling: with only two data points, it is impossible to distinguish a step function from a smooth sigmoid. Intermediate model sizes might show gradual improvement. (d) Prompt sensitivity: the larger model may simply be better at following the evaluation prompt format, not at planning per se.

2. Additional experiments: (a) Evaluate at least 4 to 6 intermediate model sizes. (b) Use continuous metrics (per-step accuracy, partial credit). (c) Probe the 100B model with different prompting strategies (chain-of-thought, decomposed steps) to see if latent capability exists. (d) Analyze what the 100B model gets wrong: are its errors random or systematic? (e) Test on simpler planning tasks with fewer steps to see if the capability gradient is smooth.

3. Protocol design: use a suite of tasks with varying compositional depth (1-step, 2-step, ... N-step). Report both exact-match and continuous metrics at each depth. Evaluate at a minimum of 5 model scales. If the result is a metric artifact, continuous metrics should show smooth improvement at all depths, and exact-match accuracy at each depth should be roughly what the per-step accuracy predicts. If emergence is genuine, the discontinuity should persist under continuous metrics, including per-step accuracy.

You are deploying a customer-facing LLM application that needs to (a) summarize legal documents, (b) extract dates and monetary amounts, (c) answer yes/no compliance questions, and (d) count the number of clauses in a contract. Based on the "jagged frontier" concept, which of these tasks would you expect to be most unreliable, and why? Design a testing strategy that accounts for the jagged frontier.

Answer

Task (d), counting clauses, is likely the most unreliable despite appearing simplest. LLMs notoriously struggle with counting and enumeration tasks, even when they excel at much more complex reasoning. This is a classic example of the jagged frontier: the model can summarize a 50-page document accurately but cannot count to 12 reliably.

Task (b), extracting dates and amounts, is also at risk because it requires precise character-level accuracy (getting "$1,234,567.89" exactly right), which is a conjunction task subject to metric effects.

Testing strategy: (1) Create a test suite for each task independently, not just overall benchmarks. (2) For counting tasks, compare against simple programmatic baselines (regex, rule-based parsers) and use the LLM only where it outperforms them. (3) For extraction tasks, use both exact-match and fuzzy-match metrics. (4) For each task, test with adversarial examples designed to probe the jagged frontier (e.g., documents with unusual formatting, ambiguous dates, nested clauses). (5) Implement task-specific guardrails: use a programmatic counter for clause counting, LLM for summarization.
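The programmatic baseline and guardrail from steps (2) and (5) might look like the following sketch. It assumes, hypothetically, that clauses are numbered at the start of a line in forms such as "1.", "2.1", or "Section 3"; real contracts vary widely, so the pattern would need to be adapted and validated against labeled examples. The function names are illustrative.

```python
import re

# Assumed heading format: optional "Section", a dotted number, then text.
CLAUSE_HEADING = re.compile(
    r"^[ \t]*(?:Section[ \t]+)?\d+(?:\.\d+)*[.)]?[ \t]+\S",
    re.MULTILINE,
)

def count_clauses(contract_text: str) -> int:
    """Rule-based count of numbered clause headings."""
    return len(CLAUSE_HEADING.findall(contract_text))

def llm_count_agrees(llm_count: int, contract_text: str) -> bool:
    """Guardrail: accept the LLM's count only if the rule-based count agrees."""
    return llm_count == count_clauses(contract_text)

sample = """1. Definitions
2. Payment Terms
2.1 Invoices are due within 30 days.
3. Termination
"""

print(count_clauses(sample))        # 4 numbered headings in the sample
print(llm_count_agrees(5, sample))  # False: route to review or use the rule-based count
```

A line of body text that happens to start with a number would be miscounted by this pattern, which is one reason to test the baseline itself on adversarial documents before relying on it.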

Build your prototype and evaluation pipeline using a well-understood model. Once you have a working system with clear metrics, swap in newer architectures and compare. This way, the novelty of the architecture does not mask pipeline bugs.

What Comes Next

In the next section, Section 34.2: Scaling Frontiers, we examine the data wall, compute economics, and architectural alternatives that will shape the next generation of frontier models.

The paper that catalyzed the emergence debate, documenting abilities that appear abruptly as models scale. Essential context for understanding the claims and counter-claims explored throughout this section.

Argues that emergent abilities are artifacts of metric choice rather than fundamental phase transitions. This influential rebuttal reshaped how the field interprets scaling curves.

Proposes a formal theoretical framework explaining how complex skills emerge from the combination of simpler sub-skills during scaling. Provides mathematical grounding for the intuitions discussed in this section.

Introduces the BIG-Bench benchmark suite covering 204 tasks, providing the empirical foundation on which many emergence claims rest. Useful for understanding how emergence is measured in practice.

Investigates whether apparent emergence can be explained by improvements in in-context learning rather than truly novel capabilities. Offers a more nuanced view of what "emergence" means operationally.

Demonstrates that model performance can be predicted across scales using observational data alone, without expensive training runs. Directly relevant to the practical question of forecasting model capabilities.

A rigorous field experiment with BCG consultants showing that AI capabilities have a "jagged" boundary: strong on some tasks and surprisingly weak on others. Grounds the abstract emergence discussion in observable workplace outcomes.