"The quality of your RLHF model is bounded by the quality of your annotations, not the quality of your algorithm."

Label, Annotation-Obsessed AI Agent

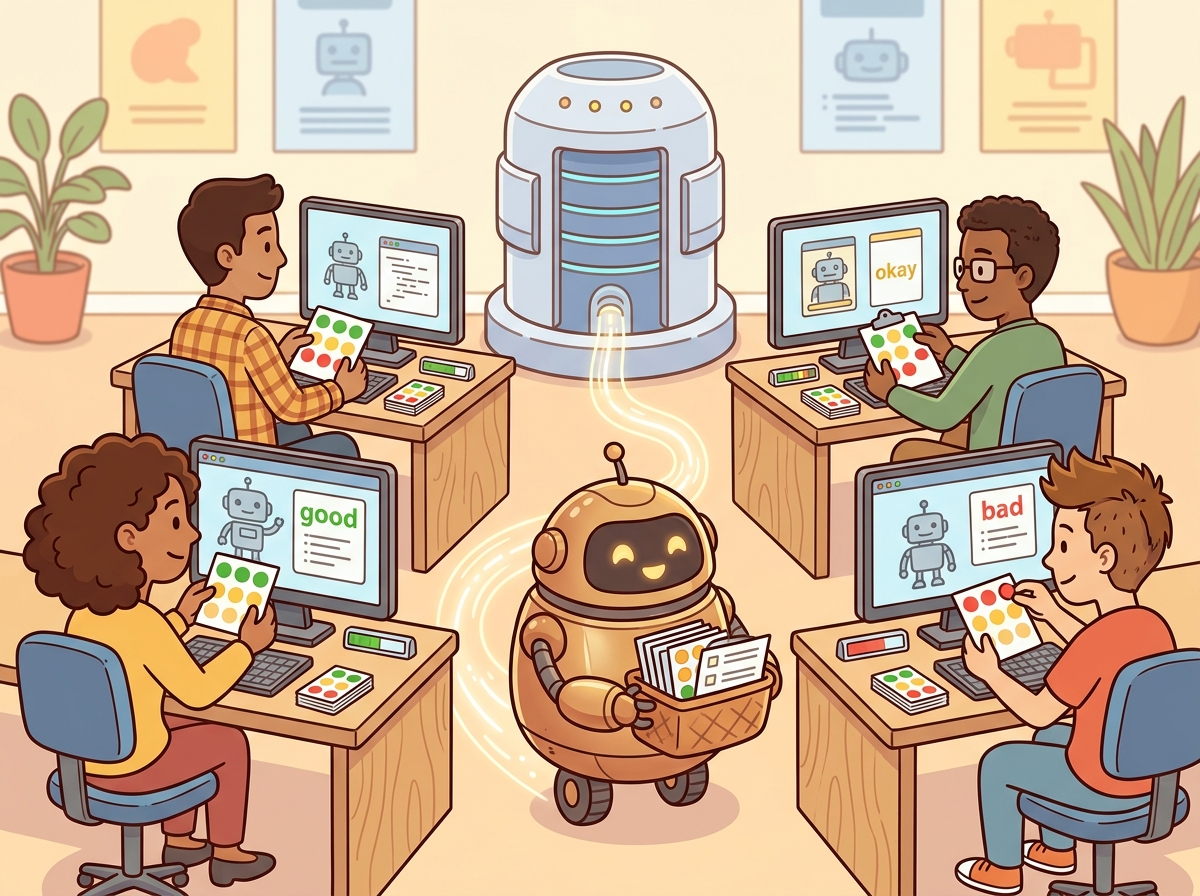

Human feedback is the bridge between model capability and model alignment. No matter how sophisticated your evaluation harness or how clever your automated metrics, there are dimensions of language model quality (helpfulness, safety, stylistic preference, factual nuance) that ultimately require human judgment. The practical challenge is collecting that judgment at scale, with consistency, and at reasonable cost. Three platforms have emerged as the dominant tools for this work: Label Studio, an open-source annotation platform with flexible task configuration; Argilla, a dataset curation tool designed for LLM feedback workflows with deep Hugging Face integration; and LangSmith, a tracing and evaluation platform that connects production observability to human-in-the-loop evaluation. This section examines each tool, then shows how to integrate them into RLHF training pipelines and design annotation workflows that produce reliable preference data.

Prerequisites

Before starting, make sure you are familiar with evaluation fundamentals from Section 29.1: LLM Evaluation Fundamentals, arena-style evaluation from Section 29.8: Arena-Style and Crowdsourced Evaluation, and LLM-as-Judge methods from Section 29.10: LLM-as-Judge.

1. Label Studio: Open-Source Annotation Platform

Inter-annotator agreement on LLM preference ranking typically hovers around 65 to 75%, meaning that even trained humans disagree on which response is "better" roughly a quarter to a third of the time. This ceiling is not a failure of the annotation process; it reflects genuine ambiguity in language quality. Your RLHF model is learning from an inherently noisy signal, which is why collecting more annotations per example matters more than finding "perfect" annotators.

Label Studio is a general-purpose, open-source data labeling platform that supports text, image, audio, video, and time-series annotation. For LLM work, its key strengths are its template-based labeling interface, its pre-annotation capabilities (where model predictions are loaded as initial labels for human review), and its active learning integration. Label Studio can be self-hosted, which matters for organizations that cannot send sensitive data to third-party services.

The labeling interface is configured using an XML-based template language. Each template defines the layout of the annotation screen: what data fields to display, what input controls to offer, and what output schema to produce. For LLM preference ranking, the typical setup displays two or more model responses side by side and asks annotators to rank them or select the better one. Code Fragment 29.12.2 shows a complete setup for a preference ranking project.

# Code Fragment 29.12.2: Label Studio preference ranking project setup
# Install: pip install label-studio label-studio-sdk
from label_studio_sdk import Client

# Connect to a running Label Studio instance
ls_client = Client(url="http://localhost:8080", api_key="your-api-key")

# XML template for pairwise preference ranking of LLM responses
preference_template = """
<View>
  <Style>
    .prompt-box { background: #f0f4f8; padding: 12px; border-radius: 8px; }
    .response-pair { display: flex; gap: 16px; margin-top: 12px; }
    .response-card { flex: 1; padding: 12px; border: 1px solid #ddd; border-radius: 8px; }
  </Style>
  <View className="prompt-box">
    <Header value="User Prompt" />
    <Text name="prompt" value="$prompt" />
  </View>
  <View className="response-pair">
    <View className="response-card">
      <Header value="Response A" />
      <Text name="response_a" value="$response_a" />
    </View>
    <View className="response-card">
      <Header value="Response B" />
      <Text name="response_b" value="$response_b" />
    </View>
  </View>
  <Header value="Which response is better?" />
  <Choices name="preference" toName="prompt" choice="single-radio">
    <Choice value="response_a_much_better" />
    <Choice value="response_a_slightly_better" />
    <Choice value="tie" />
    <Choice value="response_b_slightly_better" />
    <Choice value="response_b_much_better" />
  </Choices>
  <Header value="Rate on specific dimensions (optional)" />
  <Rating name="helpfulness" toName="prompt" maxRating="5" />
  <Rating name="safety" toName="prompt" maxRating="5" />
  <TextArea name="rationale" toName="prompt"
            placeholder="Briefly explain your preference..."
            rows="3" />
</View>
"""

# Create the project
project = ls_client.start_project(
    title="LLM Preference Ranking v2",
    label_config=preference_template,
    description="Pairwise comparison of model responses for RLHF training",
)

# Import tasks with pre-generated response pairs
tasks = [
    {
        "data": {
            "prompt": "Explain quantum entanglement to a high school student.",
            "response_a": "Quantum entanglement is when two particles...",
            "response_b": "Imagine you have two magic coins...",
        },
        "predictions": [{
            "model_version": "gpt-4o-preliminary",
            "result": [{"from_name": "preference", "to_name": "prompt",
                        "type": "choices", "value": {"choices": ["response_b_slightly_better"]}}],
            "score": 0.73,  # Model confidence for pre-annotation
        }],
    },
    # ... additional tasks
]

project.import_tasks(tasks)
print(f"Created project {project.id} with {len(tasks)} tasks")

Pre-annotation accelerates labeling without replacing it. The predictions field in Label Studio tasks loads model-generated labels as defaults that annotators can confirm or override. This typically reduces annotation time by 30 to 50 percent for straightforward cases, while preserving human judgment for ambiguous examples. The key is to track the override rate: if annotators are changing fewer than 10% of pre-annotations, the task may be too easy for human review; if they change more than 60%, the pre-annotations are adding noise rather than saving time.
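The override-rate check described above can be sketched in a few lines. This is a minimal illustration, not part of Label Studio's API: the `ReviewedTask` record and the `diagnose` helper are hypothetical names, and the 10% and 60% cut-offs are simply the rules of thumb from the paragraph above.

```python
from dataclasses import dataclass


@dataclass
class ReviewedTask:
    pre_label: str    # label suggested by the model (the pre-annotation)
    final_label: str  # label the annotator actually submitted


def override_rate(tasks: list[ReviewedTask]) -> float:
    """Fraction of tasks where the annotator changed the pre-annotation."""
    if not tasks:
        raise ValueError("no reviewed tasks")
    changed = sum(1 for t in tasks if t.pre_label != t.final_label)
    return changed / len(tasks)


def diagnose(rate: float, low: float = 0.10, high: float = 0.60) -> str:
    """Map an override rate onto the rule-of-thumb bands from the text."""
    if rate < low:
        return "too_easy"         # humans rarely disagree with the model
    if rate > high:
        return "noisy_prelabels"  # pre-labels cost more time than they save
    return "healthy"


# Toy batch: 7 confirmed pre-labels and 3 overrides
batch = (
    [ReviewedTask("response_b_slightly_better", "response_b_slightly_better")] * 7
    + [ReviewedTask("tie", "response_a_slightly_better")] * 3
)
rate = override_rate(batch)
print(f"override rate: {rate:.0%} ({diagnose(rate)})")
```

In practice the two labels would be read from the `predictions` and `annotations` entries of each exported task; computing the rate per batch rather than once over the whole project makes drift in pre-label quality easier to see.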

Label Studio's active learning integration works through its machine learning backend API. You register a model endpoint that receives completed annotations and returns updated predictions for unlabeled samples. The platform then prioritizes samples where the model is least confident, directing human attention to the examples that will most improve the training data. This is particularly valuable for preference annotation, where many pairs have obvious winners and only a fraction of comparisons require careful human judgment.

2. Argilla: Dataset Curation for LLMs

Argilla is an open-source platform built specifically for the LLM feedback and dataset curation workflow. Unlike Label Studio, which is a general-purpose annotation tool adapted for NLP, Argilla was designed from the ground up for language model tasks: feedback collection, preference annotation, instruction tuning data curation, and quality filtering. Its deepest integration point is with the Hugging Face ecosystem; Argilla datasets can be pushed to and pulled from the Hugging Face Hub, making it straightforward to share curated training data.

Argilla organizes work around datasets that contain records with fields (the data to display), questions (what annotators answer), and metadata (for filtering and quality analysis). The platform supports multi-annotator workflows with built-in agreement tracking, and it provides a web UI where annotators can browse, filter, and label records. Code Fragment 29.12.2 demonstrates creating a feedback dataset and collecting annotations.

# Code Fragment 29.12.2: Argilla dataset curation with feedback collection
# Install: pip install argilla
import argilla as rg

# Connect to Argilla server (or Hugging Face Spaces deployment)
client = rg.Argilla(api_url="http://localhost:6900", api_key="argilla.apikey")

# Define the annotation schema for preference feedback
settings = rg.Settings(
    fields=[
        rg.TextField(name="instruction", title="User Instruction"),
        rg.TextField(name="response_a", title="Response A", use_markdown=True),
        rg.TextField(name="response_b", title="Response B", use_markdown=True),
    ],
    questions=[
        rg.LabelQuestion(
            name="preference",
            title="Which response is better?",
            labels={
                "a_much_better": "A is much better",
                "a_slightly_better": "A is slightly better",
                "tie": "About the same",
                "b_slightly_better": "B is slightly better",
                "b_much_better": "B is much better",
            },
        ),
        rg.RatingQuestion(
            name="helpfulness",
            title="Rate the helpfulness of the chosen response",
            values=[1, 2, 3, 4, 5],
        ),
        rg.TextQuestion(
            name="rationale",
            title="Why did you choose this response?",
            required=False,
        ),
    ],
    metadata=[
        rg.TermsMetadataProperty(name="model_a", title="Model A"),
        rg.TermsMetadataProperty(name="model_b", title="Model B"),
        rg.FloatMetadataProperty(name="prompt_length", title="Prompt Length"),
    ],
    guidelines=(
        "Compare both responses to the user instruction. Choose the response "
        "that is more helpful, accurate, and safe. When responses are close in "
        "quality, prefer the one that is more concise and direct."
    ),
)

# Create the dataset
dataset = rg.Dataset(name="preference-pairs-v3", settings=settings, workspace="default")
dataset.create()

# Add records from a Hugging Face dataset or custom source
records = [
    rg.Record(
        fields={
            "instruction": "Write a haiku about machine learning.",
            "response_a": "Data flows like streams\nWeights adjust in silence deep\nPatterns start to speak",
            "response_b": "Neurons fire and learn\nGradient descent finds the way\nLoss function drops low",
        },
        metadata={"model_a": "llama-3.1-8b", "model_b": "mistral-7b", "prompt_length": 42.0},
    ),
    # ... additional records
]
dataset.records.log(records)

# After annotation, export to Hugging Face datasets format
annotated = dataset.records.to_list()
print(f"Collected {len(annotated)} annotated records")

# Push curated dataset to Hugging Face Hub
dataset.records.to_datasets().push_to_hub("my-org/preference-pairs-v3")

Argilla's annotation quality metrics are accessible through its Python SDK and web interface. The platform computes inter-annotator agreement automatically when multiple annotators label the same record, and it surfaces disagreement patterns through filtering and sorting. You can identify records where annotators disagree, examine those cases for guideline ambiguity, and use the disagreement data to refine your annotation protocol.

Combine Argilla with weak supervision for efficient scaling. Argilla supports a hybrid workflow where a subset of records receives full human annotation, and the remaining records are labeled using programmatic labeling functions (weak supervision). You write labeling functions that encode heuristics (for example, "if the response contains a refusal phrase, label it as less helpful"), apply them to the full dataset, then use the human-annotated subset to calibrate and weight the labeling functions. This approach can reduce annotation costs by 5x to 10x while maintaining 85 to 90 percent of the quality of full human annotation. See the Snorkel and FlyingSquid papers in the bibliography for the theoretical foundations.
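To make the calibrate-and-weight step concrete, here is a small self-contained sketch. It is a simplified stand-in, not Argilla's or Snorkel's implementation: the labeling functions, the `REFUSALS` phrase list, and the log-odds weighting are all illustrative assumptions. Each function votes for response A, response B, or abstains; its accuracy on the human-labeled subset becomes its weight in a weighted vote.

```python
import math

A, B, ABSTAIN = 1, -1, 0
REFUSALS = ("i can't", "i cannot", "i'm unable")  # hypothetical phrase list


def lf_refusal(pair: dict) -> int:
    """Vote against the response that contains a refusal phrase."""
    a_ref = any(p in pair["response_a"].lower() for p in REFUSALS)
    b_ref = any(p in pair["response_b"].lower() for p in REFUSALS)
    if a_ref == b_ref:
        return ABSTAIN
    return B if a_ref else A


def lf_empty(pair: dict) -> int:
    """Vote against an empty response."""
    a_empty = not pair["response_a"].strip()
    b_empty = not pair["response_b"].strip()
    if a_empty == b_empty:
        return ABSTAIN
    return B if a_empty else A


def calibrate(lfs, labeled):
    """Turn each function's accuracy on human-labeled pairs into a log-odds weight."""
    weights = []
    for lf in lfs:
        fired = [(lf(pair), gold) for pair, gold in labeled if lf(pair) != ABSTAIN]
        correct = sum(1 for vote, gold in fired if vote == gold)
        # Laplace smoothing keeps the accuracy strictly between 0 and 1
        acc = (correct + 1) / (len(fired) + 2)
        weights.append(math.log(acc / (1 - acc)))
    return weights


def weak_label(pair: dict, lfs, weights) -> int:
    """Weighted vote over all labeling functions; zero means no decision."""
    score = sum(w * lf(pair) for lf, w in zip(lfs, weights))
    return A if score > 0 else B if score < 0 else ABSTAIN


# Human-annotated calibration subset: (pair, gold preference)
labeled = [
    ({"response_a": "I cannot help with that.", "response_b": "Here is how..."}, B),
    ({"response_a": "Sure, step one...", "response_b": "I'm unable to assist."}, A),
    ({"response_a": "", "response_b": "An answer."}, B),
]
lfs = [lf_refusal, lf_empty]
weights = calibrate(lfs, labeled)
unlabeled = {"response_a": "I can't do that.", "response_b": "Use the reset link."}
print(weak_label(unlabeled, lfs, weights))  # B is preferred, so this prints -1
```

Unlike the label models in the Snorkel and FlyingSquid papers, this sketch ignores correlations between labeling functions; it is only meant to show where the human-annotated subset enters the pipeline.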

3. LangSmith: Tracing and Human-in-the-Loop Evaluation

LangSmith, developed by LangChain, occupies a different niche from Label Studio and Argilla. Rather than starting from a blank annotation project, LangSmith connects to your production LLM application, captures execution traces (every LLM call, retrieval step, tool invocation, and chain execution), and then lets you route those traces into evaluation workflows. This production-first approach means your evaluation data comes from real user interactions, not synthetic benchmarks.

The core workflow in LangSmith has three stages. First, tracing captures detailed logs of every LLM interaction in your application. Second, dataset creation lets you select traces and convert them into evaluation datasets, optionally with human annotations. Third, evaluation experiments run your application (or a new version of it) against those datasets and compare results. Code Fragment 29.12.5 shows the complete pipeline from tracing through human evaluation.

# Code Fragment 29.12.5: LangSmith trace evaluation pipeline
# Install: pip install langsmith langchain-openai
import os

from langsmith import Client
from langsmith.evaluation import evaluate
from langsmith.schemas import Example, Run

os.environ["LANGCHAIN_TRACING_V2"] = "true"
os.environ["LANGCHAIN_API_KEY"] = "your-langsmith-api-key"

client = Client()

# Step 1: Create an evaluation dataset from production traces
# (Traces are collected automatically when tracing is enabled)
dataset = client.create_dataset(
    dataset_name="customer-support-eval-v2",
    description="Curated customer support interactions for evaluation",
)

# Add examples (typically selected from production traces)
examples = [
    {
        "inputs": {"question": "How do I reset my password?"},
        "outputs": {"answer": "Go to Settings > Security > Reset Password..."},
        "metadata": {"category": "account", "difficulty": "easy"},
    },
    {
        "inputs": {"question": "Why was I charged twice for my subscription?"},
        "outputs": {"answer": "I can see the duplicate charge. Let me process a refund..."},
        "metadata": {"category": "billing", "difficulty": "medium"},
    },
]

for ex in examples:
    client.create_example(
        inputs=ex["inputs"],
        outputs=ex["outputs"],
        metadata=ex["metadata"],
        dataset_id=dataset.id,
    )


# Step 2: Define custom evaluators for human-in-the-loop assessment
def correctness_evaluator(run: Run, example: Example) -> dict:
    """Automated correctness check as a first pass."""
    prediction = run.outputs.get("answer", "")
    reference = example.outputs.get("answer", "")
    # Simple overlap heuristic; real implementations use LLM-as-judge
    overlap = len(set(prediction.split()) & set(reference.split()))
    total = max(len(set(reference.split())), 1)
    score = overlap / total
    return {"key": "correctness", "score": score}


def helpfulness_evaluator(run: Run, example: Example) -> dict:
    """Flag examples needing human review based on confidence."""
    prediction = run.outputs.get("answer", "")
    # Low-confidence heuristic: short responses or hedging language
    hedging = any(w in prediction.lower() for w in ["maybe", "i think", "not sure", "possibly"])
    short = len(prediction.split()) < 15
    needs_review = hedging or short
    return {
        "key": "helpfulness_auto",
        "score": 0.5 if needs_review else 0.8,
        "comment": "Flagged for human review" if needs_review else "Auto-passed",
    }


# Step 3: Run the target application against the evaluation dataset
def my_support_bot(inputs: dict) -> dict:
    """The application under evaluation."""
    from langchain_openai import ChatOpenAI

    llm = ChatOpenAI(model="gpt-4o-mini", temperature=0)
    response = llm.invoke(inputs["question"])
    return {"answer": response.content}


# Execute evaluation experiment
results = evaluate(
    my_support_bot,
    data="customer-support-eval-v2",
    evaluators=[correctness_evaluator, helpfulness_evaluator],
    experiment_prefix="support-bot-v2.1",
    max_concurrency=4,
)

# Step 4: Create annotation queue for human review of flagged examples
# (Done through LangSmith UI: select runs with low auto-scores,
# add to annotation queue, assign to reviewers)
print(f"Evaluation complete: {results.experiment_name}")
print("Review flagged examples in the LangSmith annotation queue UI.")

Production traces are the most valuable evaluation data. Synthetic benchmarks test what you think users will ask. Production traces reveal what users actually ask. LangSmith's annotation queue workflow lets you systematically sample production traces, route them to human reviewers, and build evaluation datasets that reflect your real distribution of user queries. The most effective teams maintain a continuous feedback loop: production traces feed annotation queues, annotations feed evaluation datasets, evaluation results guide model and prompt improvements, and improved models serve production traffic that generates new traces.

LangSmith's comparison experiments feature is particularly valuable for A/B testing prompt or model changes. You run two versions of your application against the same evaluation dataset, then view side-by-side results in the LangSmith UI. Human reviewers can annotate which version is better for each example, producing preference data that directly informs deployment decisions. This connects the feedback tooling story to the production deployment practices covered in later chapters of this part.

4. RLHF Pipeline Integration

Collecting preference annotations is only valuable if you can feed them into a training pipeline. The standard format for RLHF preference data consists of chosen/rejected pairs: for each prompt, the annotator-preferred response is "chosen" and the other is "rejected." The reward model (or direct preference optimization loss) learns from the contrast between these pairs. The quality of this data, specifically the consistency and calibration of the preference judgments, directly determines the ceiling of your aligned model.
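One common way to express "learning from the contrast" is the Bradley-Terry style pairwise loss used for many reward models: the negative log-sigmoid of the reward margin between the chosen and rejected responses. The sketch below assumes scalar rewards and is only an illustration of that objective; the text above does not commit to a specific loss.

```python
import math


def pairwise_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Negative log-likelihood that the chosen response beats the rejected one."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(margin)), written in a numerically stable form
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# The loss shrinks as the reward model separates chosen from rejected
for chosen, rejected in [(0.0, 2.0), (0.0, 0.0), (2.0, 0.0)]:
    print(f"margin={chosen - rejected:+.1f}  loss={pairwise_loss(chosen, rejected):.3f}")
```

A mislabeled pair (the annotator picked the worse response) pushes the margin in the wrong direction with the same force as a correct pair, which is why label consistency matters so much for this objective.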

Converting annotations from any of the three tools into the chosen/rejected format requires a mapping step that handles the preference scale (5-point Likert scales must be collapsed into binary preferences), filters out ties and low-confidence annotations, and validates inter-annotator agreement. Code Fragment 29.12.4 shows this pipeline for Argilla and Label Studio exports.

# Code Fragment 29.12.4: Converting annotation exports to RLHF training format
import json
from collections import Counter

from datasets import Dataset


def argilla_to_preference_pairs(argilla_records: list[dict]) -> list[dict]:
    """Convert Argilla annotation exports to chosen/rejected pairs.

    Filters out ties and low-agreement examples.
    """
    preference_pairs = []
    for record in argilla_records:
        instruction = record["fields"]["instruction"]
        response_a = record["fields"]["response_a"]
        response_b = record["fields"]["response_b"]

        # Collect all annotator responses for this record
        responses = record.get("responses", [])
        if not responses:
            continue

        # Aggregate preferences across annotators
        pref_counts = Counter()
        for resp in responses:
            pref = resp["values"]["preference"]["value"]
            pref_counts[pref] += 1

        # Map 5-point scale to binary preference
        a_votes = pref_counts.get("a_much_better", 0) + pref_counts.get("a_slightly_better", 0)
        b_votes = pref_counts.get("b_much_better", 0) + pref_counts.get("b_slightly_better", 0)
        tie_votes = pref_counts.get("tie", 0)
        total = a_votes + b_votes + tie_votes

        # Skip records where tie votes outnumber the A and B votes combined
        if total == 0 or tie_votes > (a_votes + b_votes):
            continue

        # Require at least 60% agreement for binary preference
        majority = max(a_votes, b_votes)
        if majority / total < 0.6:
            continue

        # An even A/B split never reaches this point: it yields at most
        # 50% agreement and is skipped by the threshold above.
        if a_votes > b_votes:
            chosen, rejected = response_a, response_b
        else:
            chosen, rejected = response_b, response_a

        preference_pairs.append({
            "prompt": instruction,
            "chosen": chosen,
            "rejected": rejected,
            "agreement_ratio": majority / total,
            "num_annotators": total,
        })
    return preference_pairs


def format_for_dpo_training(pairs: list[dict]) -> Dataset:
    """Format preference pairs for DPO/RLHF training with TRL."""
    formatted = []
    for pair in pairs:
        formatted.append({
            "prompt": pair["prompt"],
            "chosen": [
                {"role": "user", "content": pair["prompt"]},
                {"role": "assistant", "content": pair["chosen"]},
            ],
            "rejected": [
                {"role": "user", "content": pair["prompt"]},
                {"role": "assistant", "content": pair["rejected"]},
            ],
        })
    dataset = Dataset.from_list(formatted)
    return dataset


# Example usage
with open("argilla_export.json") as f:
    raw_export = json.load(f)

pairs = argilla_to_preference_pairs(raw_export)
print(f"Extracted {len(pairs)} preference pairs from {len(raw_export)} records")
if pairs:  # avoid dividing by zero when every record was filtered out
    print(f"Mean agreement: {sum(p['agreement_ratio'] for p in pairs) / len(pairs):.2f}")

dpo_dataset = format_for_dpo_training(pairs)
dpo_dataset.push_to_hub("my-org/preference-data-v3")

Do not train on low-agreement preference pairs. When annotators disagree about which response is better, the pair contains ambiguous signal. Training a reward model on ambiguous pairs teaches it to assign similar rewards to qualitatively different responses, which flattens the reward landscape and degrades RLHF performance. A common threshold is to require at least 66% agreement (two out of three annotators) for binary preference and at least 75% agreement for a pair to be considered "high confidence." Filter aggressively: a smaller, high-agreement dataset consistently outperforms a larger, noisy one.

5. Inter-Annotator Agreement and Quality Metrics

Measuring the consistency of human annotations is not optional; it is the foundation on which all downstream quality depends. If annotators do not agree with each other, the preference signal is noise, and any model trained on that signal will learn noise. The standard metrics for inter-annotator agreement are Cohen's kappa (for two annotators) and Fleiss' kappa (for multiple annotators). For ordinal preference scales, Krippendorff's alpha with ordinal weighting is more appropriate because it accounts for the distance between disagreements (confusing "much better" with "slightly better" is less severe than confusing "much better" with "much worse").

# Code Fragment 29.12.3: Computing inter-annotator agreement metrics
import numpy as np
from sklearn.metrics import cohen_kappa_score


def compute_pairwise_kappa(annotations: dict[str, list[int]]) -> dict:
    """Compute pairwise Cohen's kappa for all annotator pairs.

    Args:
        annotations: mapping of annotator_id to list of labels
            (one label per item, in the same order)

    Returns:
        Dictionary with pairwise kappa scores and overall statistics.
    """
    annotator_ids = list(annotations.keys())
    n_annotators = len(annotator_ids)
    kappa_scores = {}

    for i in range(n_annotators):
        for j in range(i + 1, n_annotators):
            id_i, id_j = annotator_ids[i], annotator_ids[j]
            labels_i = annotations[id_i]
            labels_j = annotations[id_j]

            # Filter to items where both annotators provided labels
            paired = [
                (a, b) for a, b in zip(labels_i, labels_j)
                if a is not None and b is not None
            ]
            if len(paired) < 10:
                continue

            a_labels, b_labels = zip(*paired)
            # Linear weights penalize disagreements by their distance on the ordinal scale
            kappa = cohen_kappa_score(a_labels, b_labels, weights="linear")
            kappa_scores[f"{id_i}_vs_{id_j}"] = {
                "kappa": round(kappa, 3),
                "n_items": len(paired),
                "agreement_pct": round(
                    sum(1 for a, b in paired if a == b) / len(paired) * 100, 1
                ),
            }

    # Compute summary statistics
    all_kappas = [v["kappa"] for v in kappa_scores.values()]
    summary = {
        "pairwise_scores": kappa_scores,
        "mean_kappa": round(np.mean(all_kappas), 3) if all_kappas else None,
        "min_kappa": round(np.min(all_kappas), 3) if all_kappas else None,
        "max_kappa": round(np.max(all_kappas), 3) if all_kappas else None,
        "interpretation": interpret_kappa(np.mean(all_kappas)) if all_kappas else "insufficient data",
    }
    return summary


def interpret_kappa(kappa: float) -> str:
    """Interpret kappa using Landis and Koch (1977) scale."""
    if kappa < 0.0:
        return "poor (less than chance)"
    elif kappa < 0.20:
        return "slight agreement"
    elif kappa < 0.40:
        return "fair agreement"
    elif kappa < 0.60:
        return "moderate agreement"
    elif kappa < 0.80:
        return "substantial agreement"
    else:
        return "almost perfect agreement"


# Example: three annotators labeling 100 preference comparisons
# Labels: 0 = A much better, 1 = A slightly better, 2 = tie,
# 3 = B slightly better, 4 = B much better
# The labels below are drawn independently at random, so the resulting kappa
# should be close to zero; real annotators would normally agree more than this.
np.random.seed(42)
annotations = {
    "annotator_1": list(np.random.choice([0, 1, 2, 3, 4], size=100, p=[0.15, 0.25, 0.15, 0.30, 0.15])),
    "annotator_2": list(np.random.choice([0, 1, 2, 3, 4], size=100, p=[0.15, 0.25, 0.15, 0.30, 0.15])),
    "annotator_3": list(np.random.choice([0, 1, 2, 3, 4], size=100, p=[0.15, 0.25, 0.15, 0.30, 0.15])),
}

results = compute_pairwise_kappa(annotations)
print(f"Mean kappa: {results['mean_kappa']}")
print(f"Interpretation: {results['interpretation']}")
for pair, score in results["pairwise_scores"].items():
    print(f"  {pair}: kappa={score['kappa']}, agreement={score['agreement_pct']}%")
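The listing above averages pairwise Cohen's kappa. For the multi-annotator case the text also mentions Fleiss' kappa, which yields a single statistic for the whole panel. The following is a minimal NumPy sketch of the standard formula, assuming every item is labeled by the same number of raters; note that Fleiss' kappa treats categories as nominal, so it does not credit near-misses on an ordinal scale the way weighted kappa or Krippendorff's alpha does.

```python
import numpy as np


def fleiss_kappa(labels: np.ndarray, n_categories: int) -> float:
    """Fleiss' kappa for an (n_items, n_raters) array of integer category labels."""
    n_items, n_raters = labels.shape
    # counts[i, c] = number of raters who assigned category c to item i
    counts = np.zeros((n_items, n_categories))
    for c in range(n_categories):
        counts[:, c] = (labels == c).sum(axis=1)

    # Observed agreement: per-item share of rater pairs that agree, averaged
    p_item = (np.square(counts).sum(axis=1) - n_raters) / (n_raters * (n_raters - 1))
    p_bar = p_item.mean()

    # Chance agreement from the overall category proportions
    p_cat = counts.sum(axis=0) / (n_items * n_raters)
    p_e = np.square(p_cat).sum()
    if p_e == 1.0:
        raise ValueError("all ratings fall in one category; kappa is undefined")
    return float((p_bar - p_e) / (1 - p_e))


# Three raters, two items, two categories
perfect = np.array([[0, 0, 0], [1, 1, 1]])
mixed = np.array([[0, 0, 1], [1, 1, 0]])
print(fleiss_kappa(perfect, n_categories=2))  # full agreement
print(fleiss_kappa(mixed, n_categories=2))    # worse than chance for this tiny sample
```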

Who: A data quality lead at an AI safety startup building a reward model for their chat assistant.

Situation: The team hired 12 annotators to label 10,000 preference pairs (selecting which of two model responses was better) for RLHF training.

Problem: After the first 500 pairs, mean pairwise Cohen's kappa was only 0.31 (fair agreement), well below the 0.6 threshold needed for high-quality training data. Annotators disagreed most on responses that were "helpful but verbose" versus "concise but incomplete," because the guidelines gave no priority between these qualities.

Decision: The lead decomposed the single holistic preference question into four sub-criteria (helpfulness, harmlessness, honesty, conciseness), each scored independently. Two calibration rounds with the full team resolved the most common disagreement patterns, and explicit tie-breaking rules were added to the guidelines.

Result: After the revised guidelines and two calibration rounds, kappa rose from 0.31 to 0.67 (substantial agreement). The resulting reward model trained on the higher-quality data showed a 9-point improvement on the team's internal preference benchmark compared to the model trained on the initial low-agreement labels.

Lesson: Decomposing preference judgments into specific sub-criteria and running structured calibration rounds is essential for reaching the kappa thresholds that produce reliable RLHF training data. A single holistic "which is better?" question is almost always underspecified.

6. Annotation Workflow Design

The quality of preference data depends as much on the workflow design as on the tooling. A well-designed annotation workflow includes five stages: task decomposition, guideline creation, calibration rounds, production annotation, and quality monitoring. Skipping any stage introduces systematic biases that are difficult to detect after the fact.

Task decomposition breaks a holistic preference judgment ("which response is better?") into specific, evaluable dimensions. Common dimensions for LLM evaluation include: factual accuracy, instruction following, coherence, safety, verbosity (is the response appropriately concise?), and tone. Annotators rate each dimension independently, and the final preference is derived from a weighted combination. This decomposition improves agreement because each sub-question has a narrower scope and clearer criteria.
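The "weighted combination" step can be sketched as follows. The weights and the tie margin here are purely hypothetical placeholders; in a real project they would be set from the guidelines or fitted against adjudicated examples.

```python
# Hypothetical weights over the dimensions listed above (they sum to 1.0)
WEIGHTS = {
    "factual_accuracy": 0.35,
    "instruction_following": 0.25,
    "coherence": 0.15,
    "safety": 0.15,
    "verbosity": 0.05,
    "tone": 0.05,
}


def weighted_score(ratings: dict[str, int]) -> float:
    """Combine per-dimension ratings (for example 1 to 5) into one score."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    return sum(WEIGHTS[dim] * ratings[dim] for dim in WEIGHTS)


def derive_preference(ratings_a: dict, ratings_b: dict, tie_margin: float = 0.25) -> str:
    """Return 'a', 'b', or 'tie' from two sets of per-dimension ratings."""
    diff = weighted_score(ratings_a) - weighted_score(ratings_b)
    if abs(diff) < tie_margin:
        return "tie"
    return "a" if diff > 0 else "b"


ratings_a = {"factual_accuracy": 4, "instruction_following": 4, "coherence": 4,
             "safety": 4, "verbosity": 2, "tone": 4}
ratings_b = {"factual_accuracy": 2, "instruction_following": 4, "coherence": 4,
             "safety": 4, "verbosity": 5, "tone": 4}
print(derive_preference(ratings_a, ratings_b))  # A wins on factual accuracy
```

Keeping the per-dimension ratings alongside the derived preference also makes later disagreement analysis easier, because you can see which dimension drove a split.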

Calibration rounds are short annotation sessions (typically 20 to 50 examples) where all annotators label the same items, then discuss disagreements as a group. The goal is to surface implicit assumptions, resolve guideline ambiguities, and align annotators' mental models before production annotation begins. Best practice is to run at least two calibration rounds: one after the initial guidelines are written, and a second after the guidelines are revised based on the first round's disagreements.

Guideline iteration is a research process, not a documentation task. The first draft of annotation guidelines is always underspecified. Real improvement comes from analyzing disagreement patterns, identifying the ambiguous cases that cause divergent judgments, and writing explicit resolution rules for those cases. For example, if annotators disagree on whether a longer but more detailed response is "better" than a shorter but accurate one, the guidelines must specify the priority: "Prefer concise responses unless the additional detail directly addresses the user's question." Expect to revise guidelines three to five times before they stabilize.

Handling disagreement in production annotation requires a defined policy. Three common strategies are: (1) majority vote, where the label with the most annotator support wins; (2) adjudication, where a senior annotator reviews disagreements and makes a final decision; and (3) exclusion, where disagreed items are removed from the training set. For RLHF preference data, a combination of majority vote (for mild disagreements) and exclusion (for strong disagreements where annotators are split roughly evenly) tends to produce the most reliable training signal.
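A combined majority-vote and exclusion policy might look like the sketch below. The two-thirds threshold and the decision to exclude items whose winning label is a tie are assumptions for illustration; adjudication by a senior annotator would slot in where the function currently returns "exclude".

```python
from collections import Counter
from typing import Optional


def resolve(votes: list[str], min_share: float = 2 / 3) -> tuple[str, Optional[str]]:
    """Apply majority vote for mild disagreement and exclusion for strong disagreement.

    votes: one of "a", "b", or "tie" per annotator.
    Returns (decision, label), where decision is "majority" or "exclude".
    """
    if not votes:
        return ("exclude", None)
    label, count = Counter(votes).most_common(1)[0]
    share = count / len(votes)
    # A winning "tie" gives no chosen/rejected pair, so it is excluded as well
    if share >= min_share and label != "tie":
        return ("majority", label)
    return ("exclude", None)


print(resolve(["a", "a", "b"]))       # mild disagreement: keep with label "a"
print(resolve(["a", "b", "tie"]))     # no majority: exclude
print(resolve(["a", "a", "b", "b"]))  # even split: exclude
```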

7. Scale and Automation: LLM-as-Judge Pre-labeling

At production scale, fully human annotation is often too slow and too expensive. The emerging best practice is a tiered approach that uses automated methods to handle easy cases and reserves human attention for hard ones. The three tiers are: (1) LLM-as-judge for pre-labeling and easy-case filtering, (2) active learning to prioritize the most informative examples for human review, and (3) full human annotation only for the examples where automated methods are uncertain or disagree.

LLM-as-judge pre-labeling uses a strong model (such as GPT-4o or Claude) to generate initial preference judgments. These judgments serve the same role as pre-annotations in Label Studio: they provide a starting point that human annotators can confirm or override. The key risk is automation bias, where annotators defer to the model's judgment even when it is wrong. Mitigating this requires randomizing whether the pre-label is shown, periodically inserting "trap" examples where the pre-label is intentionally incorrect, and monitoring the override rate per annotator.
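Trap examples give a direct per-annotator measure of automation bias. In the sketch below, the record fields (`annotator`, `pre_label`, `final_label`, `is_trap`) and the 0.8 catch-rate threshold are hypothetical; the idea is simply that an annotator who rarely overrides a deliberately wrong pre-label is probably deferring to the model.

```python
from collections import defaultdict


def trap_catch_rates(records: list[dict]) -> dict[str, float]:
    """Per-annotator fraction of trap items where the wrong pre-label was overridden."""
    seen = defaultdict(int)
    caught = defaultdict(int)
    for r in records:
        if not r["is_trap"]:
            continue
        seen[r["annotator"]] += 1
        if r["final_label"] != r["pre_label"]:
            caught[r["annotator"]] += 1
    return {annotator: caught[annotator] / seen[annotator] for annotator in seen}


def flag_deferential(rates: dict[str, float], min_catch_rate: float = 0.8) -> list[str]:
    """Annotators who accepted too many intentionally incorrect pre-labels."""
    return sorted(a for a, rate in rates.items() if rate < min_catch_rate)


records = [
    {"annotator": "ann_1", "pre_label": "a", "final_label": "b", "is_trap": True},
    {"annotator": "ann_1", "pre_label": "b", "final_label": "a", "is_trap": True},
    {"annotator": "ann_2", "pre_label": "a", "final_label": "a", "is_trap": True},
    {"annotator": "ann_2", "pre_label": "b", "final_label": "a", "is_trap": True},
    {"annotator": "ann_2", "pre_label": "a", "final_label": "a", "is_trap": False},
]
rates = trap_catch_rates(records)
print(rates)
print(flag_deferential(rates))
```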

Active learning prioritization selects which examples to send for human annotation based on model uncertainty. For preference annotation, uncertainty can be estimated by: (a) the margin between the reward model's scores for the two responses, (b) the disagreement between multiple LLM judges, or (c) the entropy of the model's preference distribution. Examples near the decision boundary (small margin, high disagreement, high entropy) are the most informative for training and should be prioritized for human review.
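As a rough illustration of options (a) and (c), the sketch below converts a pair of reward scores into a preference probability with a logistic (Bradley-Terry) link, which is an assumption on our part, and ranks pairs by the entropy of that probability. Small margins give probabilities near 0.5 and therefore high entropy.

```python
import math


def preference_prob(score_a: float, score_b: float) -> float:
    """Probability that A is preferred, assuming a logistic link on the score margin."""
    return 1.0 / (1.0 + math.exp(-(score_a - score_b)))


def binary_entropy(p: float) -> float:
    """Entropy in bits of a two-outcome distribution (0 when the outcome is certain)."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))


def rank_for_review(pairs: list[dict]) -> list[dict]:
    """Most uncertain pairs first, so they reach human annotators first."""
    return sorted(
        pairs,
        key=lambda x: binary_entropy(preference_prob(x["score_a"], x["score_b"])),
        reverse=True,
    )


pairs = [
    {"id": "p1", "score_a": 2.0, "score_b": -1.0},  # clear winner
    {"id": "p2", "score_a": 0.3, "score_b": 0.2},   # near the decision boundary
    {"id": "p3", "score_a": 1.0, "score_b": 0.2},
]
print([p["id"] for p in rank_for_review(pairs)])
```

Judge disagreement, option (b), can be folded into the same ranking by replacing the entropy with the share of LLM judges that voted against the majority.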

Budget your annotation spend on a cost-per-quality curve. Track the marginal improvement in reward model accuracy as a function of annotation budget. In practice, the curve follows diminishing returns: the first 1,000 high-quality annotations produce a large improvement, the next 1,000 produce a moderate improvement, and beyond 5,000 to 10,000 annotations the gains often plateau. By plotting this curve during an initial pilot phase, you can set a budget that maximizes quality per dollar. Combining 2,000 human annotations with 20,000 LLM-as-judge labels (calibrated against the human annotations) often matches the quality of 8,000 to 10,000 purely human annotations at a fraction of the cost.
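One simple way to read a budget off a pilot curve is to stop at the last point where the marginal gain per 1,000 annotations is still above a threshold you care about. The pilot numbers below are invented for illustration, and the threshold of 0.01 accuracy per 1,000 annotations is an arbitrary assumption.

```python
def marginal_gains(curve: list[tuple[int, float]]) -> list[tuple[int, float]]:
    """Accuracy gain per 1,000 extra annotations between consecutive pilot points.

    curve: (n_annotations, reward_model_accuracy) pairs sorted by n_annotations.
    """
    gains = []
    for (n0, acc0), (n1, acc1) in zip(curve, curve[1:]):
        gains.append((n1, (acc1 - acc0) / (n1 - n0) * 1000))
    return gains


def pick_budget(curve: list[tuple[int, float]], min_gain_per_1k: float = 0.01) -> int:
    """Largest annotation count whose step was still worth at least min_gain_per_1k."""
    budget = curve[0][0]
    for n, gain in marginal_gains(curve):
        if gain < min_gain_per_1k:
            break
        budget = n
    return budget


# Hypothetical pilot measurements, not real results
pilot = [(500, 0.62), (1000, 0.68), (2000, 0.72), (5000, 0.76), (10000, 0.765)]
for n, gain in marginal_gains(pilot):
    print(f"up to {n:>6} annotations: {gain:+.4f} accuracy per 1k")
print("suggested budget:", pick_budget(pilot))
```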

| Feature | Label Studio | Argilla | LangSmith |
|---|---|---|---|
| License | Apache 2.0 | Apache 2.0 | Commercial (free tier) |
| Primary focus | General annotation | LLM dataset curation | Tracing and evaluation |
| Self-hosted | Yes | Yes (also HF Spaces) | Enterprise plan only |
| Pre-annotation | ML backend API | Suggestions API | Auto-evaluators |
| HF Hub integration | Via export | Native push/pull | Via export |
| Production tracing | No | No | Yes (core feature) |
| Active learning | Built-in | Via SDK | Via annotation queues |
| Multi-annotator | Yes | Yes (with agreement) | Yes (annotation queues) |
| Best for | Custom labeling UIs | RLHF data pipelines | Production eval loops |

Constitutional AI and automated feedback at scale. The trajectory of human feedback tooling points toward systems where human annotation is used primarily to calibrate and audit automated feedback systems rather than to produce training data directly. Anthropic's Constitutional AI (CAI) replaces most human preference judgments with model self-critique guided by a set of principles, using human feedback only to validate the principles themselves.

Similarly, OpenAI's "rule-based reward models" encode human preferences as explicit rules that can be applied automatically. The open question is how far this automation can go: current evidence suggests that human oversight remains essential for novel domains, edge cases, and value-laden judgments where principles conflict.

The tools covered in this section will likely evolve to support this hybrid paradigm, with annotation interfaces designed for principle authoring and audit review rather than example-by-example labeling.

- Label Studio provides flexible, open-source annotation interfaces for rating, ranking, and classifying LLM outputs.

- Argilla specializes in dataset curation for LLMs, with built-in support for preference datasets and direct integration with the Hugging Face ecosystem.

- LangSmith combines production tracing with human-in-the-loop evaluation, enabling teams to annotate real production traffic.

- Inter-annotator agreement (measured by Cohen's kappa or Krippendorff's alpha) is essential for validating that evaluation labels are meaningful and reproducible.

- RLHF pipeline integration connects human feedback collection directly to preference dataset construction, closing the loop between evaluation and model improvement.

Exercises

Exercise 29.12.1

Set up a Label Studio project with the preference ranking template from Code Fragment 29.12.2. Import 10 example tasks with response pairs from two different models (you can generate these using any two LLMs with the same prompts). Annotate all 10 tasks and export the results in JSON format. Examine the exported JSON to understand the annotation schema.

Exercise 29.12.2

Using Argilla, create a feedback dataset with at least 50 instruction/response pairs. Configure three annotators (these can be three separate browser sessions or team members) and have each annotator label all 50 records. Compute the pairwise Cohen's kappa using Code Fragment 29.12.3. What is your agreement level? Identify the three most disagreed-upon examples and write explicit guideline additions that would resolve the ambiguity.

Exercise 29.12.3

Build an end-to-end RLHF data pipeline: (1) generate 200 response pairs using two models of different sizes, (2) use an LLM-as-judge to pre-label all pairs, (3) have a human annotator review a 50-example subset, (4) measure agreement between the LLM judge and the human, (5) filter the full 200-pair dataset using the agreement threshold from step 4, and (6) format the filtered data for DPO training using the format_for_dpo_training function from Code Fragment 29.12.5. Report the filtering rate and the estimated label accuracy of the final dataset.

Exercise 29.12.4

Implement an active learning loop for preference annotation. Start with a reward model trained on 100 annotated pairs. Use the reward model's score margin (difference between scores for the two responses) to rank the remaining unlabeled pairs by informativeness. Annotate the 20 pairs with the smallest margin, retrain the reward model, and repeat for three iterations. Plot the reward model's accuracy on a held-out test set as a function of total annotations. Compare this to a baseline where 60 pairs are selected at random. Does active learning produce a better reward model with the same annotation budget?
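
As a starting point for the selection step only, the margin ranking can be sketched as follows. This assumes the reward model's scores for both responses of every unlabeled pair have already been computed; the function name `select_most_uncertain` is hypothetical, and training, scoring, and evaluation are left to you.

```python
def select_most_uncertain(scores_a, scores_b, k):
    """Return indices of the k pairs with the smallest absolute score margin.

    scores_a[i] and scores_b[i] are the reward model's scores for the two
    responses of unlabeled pair i. A small margin means the model is close
    to indifferent, so a human label for that pair is likely to be informative.
    """
    if len(scores_a) != len(scores_b):
        raise ValueError("scores_a and scores_b must have the same length")
    margins = [abs(a - b) for a, b in zip(scores_a, scores_b)]
    # sorted() is stable, so ties keep their original order.
    ranked = sorted(range(len(margins)), key=lambda i: margins[i])
    return ranked[:k]


# Four unlabeled pairs with illustrative scores; pick the two least certain.
picked = select_most_uncertain([2.0, 0.5, 1.0, -1.0], [0.0, 0.4, 1.5, -1.0], k=2)
print(picked)
```

Remember to remove the selected indices from the unlabeled pool after each round, and to draw the random baseline from the same pool so that the comparison is fair.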

Bibliography

Tkachenko, M., Malyuk, M., Holmanyuk, A., & Liubimov, N. (2022). "Label Studio: Data Labeling Software." GitHub: HumanSignal/label-studio

Argilla Team. (2024). "Argilla: Open-Source Data Curation Platform for LLMs." GitHub: argilla-io/argilla

LangChain, Inc. (2024). "LangSmith: Developer Platform for LLM Applications." docs.smith.langchain.com

Ouyang, L., Wu, J., Jiang, X., et al. (2022). "Training Language Models to Follow Instructions with Human Feedback." NeurIPS 2022. arXiv:2203.02155

Bai, Y., Kadavath, S., Kundu, S., et al. (2022). "Constitutional AI: Harmlessness from AI Feedback." arXiv:2212.08073

Rafailov, R., Sharma, A., Mitchell, E., et al. (2023). "Direct Preference Optimization: Your Language Model Is Secretly a Reward Model." NeurIPS 2023. arXiv:2305.18290

Landis, J. R. & Koch, G. G. (1977). "The Measurement of Observer Agreement for Categorical Data." Biometrics, 33(1), 159-174.

Krippendorff, K. (2004). "Reliability in Content Analysis: Some Common Misconceptions and Recommendations." Human Communication Research, 30(3), 411-433.

Ratner, A., Bach, S., Ehrenberg, H., et al. (2017). "Snorkel: Rapid Training Data Creation with Weak Supervision." Proceedings of the VLDB Endowment, 11(3). arXiv:1711.10160

Zheng, L., Chiang, W., Sheng, Y., et al. (2023). "Judging LLM-as-a-Judge with MT-Bench and Chatbot Arena." NeurIPS 2023. arXiv:2306.05685

What Comes Next

In this section we covered three human feedback platforms (Label Studio, Argilla, and LangSmith), along with how to integrate them into RLHF pipelines and design reliable annotation workflows. Section 29.13: Research Methodology for LLM Papers continues with experiment design for LLM research.