You cannot improve what you cannot observe. And in distributed systems, you cannot observe what you have not instrumented.

Charity Majors, co-founder of Honeycomb

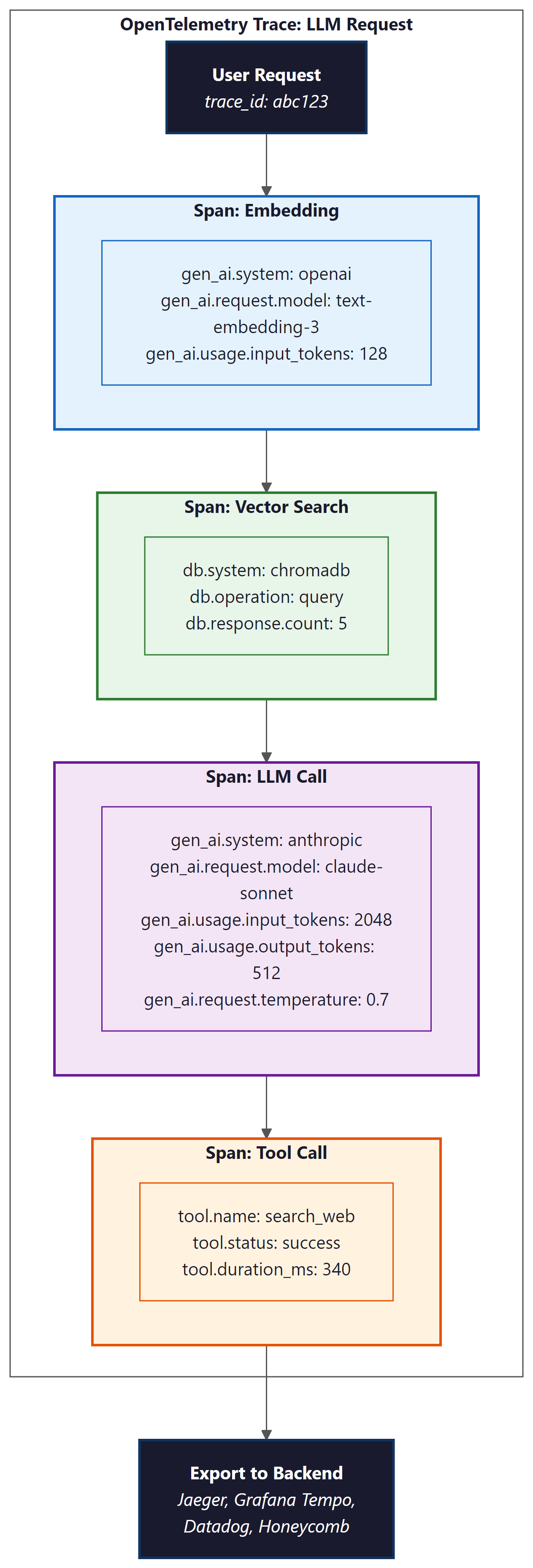

OpenTelemetry provides the standardized observability backbone that makes LLM applications debuggable in production. Without structured tracing, a single user request that touches an embedding model, a vector database, multiple LLM calls, and several tool invocations becomes a black box when something goes wrong. The GenAI Semantic Conventions give every team a common vocabulary for LLM telemetry, enabling unified dashboards across providers and frameworks. This section shows how to instrument LLM applications with OpenTelemetry, from auto-instrumentation libraries to custom span attributes, building on the observability foundations from Section 30.1.

Prerequisites

This section builds on the observability foundations from Section 30.1 and the monitoring patterns in Section 30.2. Familiarity with LLM API calls and tool use patterns is recommended.

1. Why OpenTelemetry for LLM Systems

OpenTelemetry (OTel) has become the industry standard for distributed tracing, metrics, and logging across microservices. LLM applications introduce unique observability challenges that generic application monitoring tools cannot address. A single user request may traverse an embedding model, a vector database, a retrieval pipeline, one or more LLM calls, and several tool invocations. Each step contributes latency, cost, and potential failure points. Without structured tracing that captures LLM-specific metadata (token counts, model identifiers, prompt templates, temperature settings), debugging production issues becomes guesswork.

The OpenTelemetry Semantic Conventions for Generative AI (formerly LLM Semantic Conventions) define a standardized set of span attributes for model calls. These conventions ensure that traces from different LLM providers, frameworks, and custom code share a common vocabulary. Attributes like gen_ai.system, gen_ai.request.model, gen_ai.usage.input_tokens, and gen_ai.usage.output_tokens allow you to build unified dashboards regardless of whether you call OpenAI, Anthropic, or a self-hosted model. The deployment patterns in Section 31.1 benefit directly from this standardized telemetry.
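
To make the "common vocabulary" concrete, the following sketch maps two providers' usage payloads onto the same GenAI attribute names. It uses plain dictionaries rather than real spans, and the to_genai_attributes helper and sample token counts are illustrative assumptions, not part of any OTel API.

```python
# Provider-specific names for the same two numbers: OpenAI's chat API reports
# prompt_tokens/completion_tokens, Anthropic's reports input_tokens/output_tokens.
USAGE_FIELD_MAP = {
    "openai": ("prompt_tokens", "completion_tokens"),
    "anthropic": ("input_tokens", "output_tokens"),
}


def to_genai_attributes(system: str, model: str, usage: dict) -> dict:
    """Translate a provider's usage payload into GenAI convention attributes."""
    input_field, output_field = USAGE_FIELD_MAP[system]
    return {
        "gen_ai.system": system,
        "gen_ai.request.model": model,
        "gen_ai.usage.input_tokens": usage[input_field],
        "gen_ai.usage.output_tokens": usage[output_field],
    }


# Hypothetical usage payloads from two different providers
openai_attrs = to_genai_attributes(
    "openai", "gpt-4o", {"prompt_tokens": 1200, "completion_tokens": 300}
)
anthropic_attrs = to_genai_attributes(
    "anthropic", "claude-sonnet-4-20250514", {"input_tokens": 800, "output_tokens": 450}
)

# Once normalized, one query can sum usage across providers.
total_input = sum(
    attrs["gen_ai.usage.input_tokens"] for attrs in (openai_attrs, anthropic_attrs)
)
print(total_input)
```

Auto-instrumentation libraries perform this translation for you; the point of the sketch is only that downstream queries never need to know which provider produced a span.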

The key benefit of OTel over proprietary tracing solutions (LangSmith, Langfuse) is vendor neutrality. OTel traces can be exported to any compatible backend: Jaeger, Grafana Tempo, Datadog, Honeycomb, or your own collector. This avoids lock-in and allows teams to integrate LLM observability into their existing monitoring stack rather than maintaining a separate system. That said, proprietary tools covered in Section 30.1 often provide richer LLM-specific UIs and are easier to set up for teams without existing observability infrastructure.

OpenTelemetry is not a replacement for LLM-specific observability platforms like LangSmith or Langfuse. It is the transport and data model layer that feeds into those platforms (or any other backend). Many LLM observability tools now accept OTel data natively, meaning you instrument once with OTel and send traces to multiple destinations. The choice is not "OTel or LangSmith" but rather "OTel as the instrumentation standard, with LangSmith (or Langfuse, or Datadog) as the visualization and analysis layer."

The first version of the OpenTelemetry GenAI semantic conventions was drafted during a hackathon where engineers from six different LLM observability startups realized they had each invented their own incompatible span attribute names for "number of tokens used." The field name gen_ai.usage.input_tokens exists because those companies spent a weekend arguing about whether to call it "prompt_tokens," "input_tokens," or "request_tokens."

2. Instrumenting LLM API Calls

The foundation of LLM observability is instrumenting every model call with a span that captures the request parameters, response metadata, and timing information. The OpenTelemetry Python SDK provides the primitives; the opentelemetry-instrumentation-openai and similar auto-instrumentation packages handle the most common providers automatically.

from opentelemetry import trace
from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.trace.export import BatchSpanProcessor
from opentelemetry.exporter.otlp.proto.grpc.trace_exporter import OTLPSpanExporter
from opentelemetry.sdk.resources import Resource

# Initialize the tracer with service metadata
resource = Resource.create({
    "service.name": "my-llm-app",
    "service.version": "1.2.0",
    "deployment.environment": "production",
})

provider = TracerProvider(resource=resource)
# Batch finished spans and send them to the collector over OTLP/gRPC
processor = BatchSpanProcessor(
    OTLPSpanExporter(endpoint="http://otel-collector:4317")
)
provider.add_span_processor(processor)
trace.set_tracer_provider(provider)

tracer = trace.get_tracer("llm-app.tracer")

With the tracer initialized, you can instrument individual LLM calls using the GenAI semantic conventions. Each span should capture the model name, provider, token usage, and any relevant request parameters. This manual instrumentation gives you full control over what is captured, which is important for sensitive applications where you may need to redact prompt content.

import openai
from opentelemetry import trace
# The GenAI attributes are still incubating, so they live under _incubating
from opentelemetry.semconv._incubating.attributes import gen_ai_attributes as GenAI

tracer = trace.get_tracer("llm-app.tracer")

async def traced_chat_completion(messages, model="gpt-4o", **kwargs):
    """Wrap an OpenAI chat completion with OTel tracing."""
    with tracer.start_as_current_span(
        "gen_ai.chat",
        attributes={
            GenAI.GEN_AI_SYSTEM: "openai",
            GenAI.GEN_AI_REQUEST_MODEL: model,
            GenAI.GEN_AI_REQUEST_TEMPERATURE: kwargs.get("temperature", 1.0),
            GenAI.GEN_AI_REQUEST_MAX_TOKENS: kwargs.get("max_tokens", 4096),
        }
    ) as span:
        try:
            client = openai.AsyncOpenAI()
            response = await client.chat.completions.create(
                model=model, messages=messages, **kwargs
            )

            # Record response metadata
            usage = response.usage
            span.set_attribute(GenAI.GEN_AI_RESPONSE_MODEL, response.model)
            span.set_attribute(GenAI.GEN_AI_USAGE_INPUT_TOKENS, usage.prompt_tokens)
            span.set_attribute(GenAI.GEN_AI_USAGE_OUTPUT_TOKENS, usage.completion_tokens)
            # The convention defines finish_reasons as an array, one entry per choice
            span.set_attribute(GenAI.GEN_AI_RESPONSE_FINISH_REASONS,
                               [choice.finish_reason for choice in response.choices])

            # Record cost estimate (custom attribute). estimate_cost is an
            # application-defined helper that is not shown here.
            cost = estimate_cost(model, usage.prompt_tokens, usage.completion_tokens)
            span.set_attribute("gen_ai.usage.cost_usd", cost)

            return response
        except Exception as e:
            span.set_status(trace.StatusCode.ERROR, str(e))
            span.record_exception(e)
            raise

The same result in 3 lines with OpenLLMetry (auto-instruments all LLM calls):

# pip install traceloop-sdk
from traceloop.sdk import Traceloop

Traceloop.init(app_name="my-llm-app")

# All OpenAI, Anthropic, and LangChain calls are now automatically
# traced with GenAI semantic conventions. No manual spans needed.

3. Trace Propagation Through Agent Chains

Single LLM calls are straightforward to trace. The real complexity emerges in agentic systems where a single user request triggers a chain of LLM calls, tool invocations, and sub-agent delegations. Consider a RAG pipeline: the user's query is first embedded, then used for vector search, then the retrieved documents are assembled into a prompt, and finally the LLM generates a response. Each step should be a child span under a single parent trace, preserving the causal chain.

For multi-agent systems, trace context must propagate across agent boundaries. When an orchestrator agent delegates a task to a specialist agent, the specialist's spans should appear as children of the orchestrator's delegation span. If agents communicate via message queues or HTTP, the OTel context propagation headers (traceparent, tracestate) must be injected into the message metadata and extracted on the receiving side.
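
The traceparent header that carries this context has a fixed layout defined by the W3C Trace Context specification: version, trace ID, parent span ID, and flags, joined by hyphens. The sketch below builds and parses that header with the standard library only, to show what actually travels in the message metadata. The function names and the message shape are hypothetical; in a real service you would let the OTel propagation API (inject and extract from opentelemetry.propagate) handle the header rather than doing it by hand.

```python
import re
import secrets

# Version "00", 32 hex chars of trace ID, 16 hex chars of parent span ID,
# and 2 hex chars of flags (bit 0 means "sampled").
TRACEPARENT_RE = re.compile(r"00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})")


def build_traceparent(trace_id: str, span_id: str, sampled: bool = True) -> str:
    """Format a traceparent header value for an outgoing message."""
    flags = "01" if sampled else "00"
    return f"00-{trace_id}-{span_id}-{flags}"


def parse_traceparent(header: str):
    """Return (trace_id, parent_span_id, sampled), or None if malformed."""
    match = TRACEPARENT_RE.fullmatch(header)
    if match is None:
        return None
    trace_id, span_id, flags = match.groups()
    # All-zero IDs are invalid under the specification.
    if set(trace_id) == {"0"} or set(span_id) == {"0"}:
        return None
    return trace_id, span_id, bool(int(flags, 16) & 0x01)


# Orchestrator side: attach the context to the delegated task's metadata.
trace_id = secrets.token_hex(16)
delegation_span_id = secrets.token_hex(8)
message = {
    "task": "validate citations",
    "metadata": {"traceparent": build_traceparent(trace_id, delegation_span_id)},
}

# Specialist side: recover the context so new spans join the same trace
# and name the delegation span as their parent.
received_trace_id, parent_span_id, sampled = parse_traceparent(
    message["metadata"]["traceparent"]
)
print(received_trace_id == trace_id, parent_span_id == delegation_span_id)
```

Because the trace ID survives the hop unchanged, the backend can stitch both agents' spans into a single waterfall.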

from opentelemetry import trace

tracer = trace.get_tracer("llm-app.agent-chain")

# embed_text, vector_store, and reranker are application-defined
# components that are not shown here.

async def rag_pipeline(query: str):
    """Full RAG pipeline with hierarchical tracing."""
    with tracer.start_as_current_span("rag.pipeline") as pipeline_span:
        pipeline_span.set_attribute("rag.query", query)

        # Step 1: Embed the query
        with tracer.start_as_current_span("rag.embed_query") as embed_span:
            embedding = await embed_text(query)
            embed_span.set_attribute("rag.embedding_model", "text-embedding-3-small")
            embed_span.set_attribute("rag.embedding_dim", len(embedding))

        # Step 2: Vector search
        with tracer.start_as_current_span("rag.vector_search") as search_span:
            results = await vector_store.search(embedding, top_k=10)
            search_span.set_attribute("rag.results_count", len(results))
            if results:  # an empty result set has no top score
                search_span.set_attribute("rag.top_score", results[0].score)

        # Step 3: Rerank
        with tracer.start_as_current_span("rag.rerank") as rerank_span:
            reranked = await reranker.rerank(query, results, top_k=5)
            rerank_span.set_attribute("rag.reranked_count", len(reranked))

        # Step 4: Generate response (this creates its own child span)
        context_text = "\n\n".join(r.text for r in reranked)
        response = await traced_chat_completion(
            messages=[
                {"role": "system", "content": f"Context:\n{context_text}"},
                {"role": "user", "content": query}
            ],
            model="gpt-4o"
        )

        pipeline_span.set_attribute("rag.total_tokens",
            response.usage.prompt_tokens + response.usage.completion_tokens)

        return response.choices[0].message.content

Each pipeline step is recorded as a child of the rag.pipeline span. The traced_chat_completion function from Code Fragment 30.5.8 automatically creates a nested child span for the LLM call. This structure allows you to see exactly where time is spent in the pipeline.

Who: A platform engineer at a legal technology company operating a tool-using agent that researched case law and drafted legal summaries.

Situation: The agent used five tools (case search, statute lookup, citation validator, summarizer, and document formatter) and processed roughly 2,000 queries per day.

Problem: Users reported that some queries took over 45 seconds, but the team had no visibility into which tool calls were responsible. Aggregate latency metrics showed only the total request duration, not the per-tool breakdown.

Decision: The engineer instrumented each iteration of the agent loop as a child span under the agent's main OTel span, recording tool name, arguments, execution time, and result size on each tool span.

Result: Tracing revealed that the citation validator was responsible for 60% of total latency on slow queries because it made synchronous HTTP calls to an external API with no timeout. Adding a 3-second timeout and a local cache for frequently cited cases reduced P99 agent latency from 45 seconds to 12 seconds. Token cost attribution also showed that tool result parsing consumed 40% of the agent's total token budget, prompting the team to truncate verbose tool outputs. The cost control patterns in Section 26.3 rely on exactly this granularity of data.

Lesson: Without per-tool-call tracing, agent performance debugging is guesswork. Structured spans for each agent loop iteration make it trivial to identify which tool is the bottleneck and where tokens are being consumed.
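
The per-tool breakdown described in this case study can be sketched without a tracing backend. The example below times each tool call in an agent loop and reports each tool's share of total latency, which is the same question a trace waterfall answers. The tool names come from the case study; the timed_tool helper, the simulated delays, and the in-memory record list are illustrative assumptions standing in for real tool spans.

```python
import time
from collections import defaultdict
from contextlib import contextmanager

records = []  # stand-in for exported tool spans


@contextmanager
def timed_tool(tool_name: str):
    """Record the wall-clock duration of one tool call, even if it raises."""
    start = time.perf_counter()
    try:
        yield
    finally:
        records.append(
            {"tool": tool_name, "duration_s": time.perf_counter() - start}
        )


# Simulated per-call delays in seconds (hypothetical values)
SIMULATED_DELAYS = {
    "case_search": 0.005,
    "citation_validator": 0.05,
    "summarizer": 0.01,
}


def run_agent_iteration():
    """One pass of the agent loop: each tool call gets its own record."""
    for tool, delay in SIMULATED_DELAYS.items():
        with timed_tool(tool):
            time.sleep(delay)


for _ in range(3):
    run_agent_iteration()


def latency_share(tool_records):
    """Return each tool's fraction of the total recorded latency."""
    totals = defaultdict(float)
    for record in tool_records:
        totals[record["tool"]] += record["duration_s"]
    grand_total = sum(totals.values())
    return {tool: total / grand_total for tool, total in totals.items()}


shares = latency_share(records)
slowest = max(shares, key=shares.get)
for tool, share in sorted(shares.items(), key=lambda item: -item[1]):
    print(f"{tool:<20} {share:6.1%}")
```

With real spans the same aggregation is a group-by on the tool name attribute in your tracing backend's query language.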

4. Token Tracking and Cost Attribution

Token usage is the primary cost driver for LLM applications. OTel tracing provides the infrastructure to track tokens at every level of granularity: per call, per pipeline step, per user session, per feature, and per tenant. By recording gen_ai.usage.input_tokens and gen_ai.usage.output_tokens on every LLM span, you can aggregate costs across any dimension using your tracing backend's query language.

Cost attribution becomes critical in multi-tenant applications where different customers or features consume different amounts of LLM resources. By adding custom span attributes for tenant ID, feature name, and user tier, you can build per-tenant cost dashboards. This data feeds directly into billing systems and helps identify features or users that generate disproportionate costs. The cost control strategies in Section 26.3 depend on this level of cost visibility.
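
As a rough illustration of the per-tenant roll-up, the sketch below groups span records by tenant and by feature and sums an estimated cost. The app.tenant_id and app.feature attribute names, the sample spans, and the per-1K-token prices are assumptions chosen for the example; real prices should come from your provider's current price list.

```python
from collections import defaultdict

# Assumed prices in USD per 1K tokens: (input, output)
PRICE_PER_1K = {
    "gpt-4o": (0.0025, 0.01),
    "gpt-4o-mini": (0.00015, 0.0006),
}

# Hypothetical span attributes as they might be exported
spans = [
    {"app.tenant_id": "acme", "app.feature": "search",
     "gen_ai.request.model": "gpt-4o",
     "gen_ai.usage.input_tokens": 2000, "gen_ai.usage.output_tokens": 500},
    {"app.tenant_id": "acme", "app.feature": "summarize",
     "gen_ai.request.model": "gpt-4o-mini",
     "gen_ai.usage.input_tokens": 10000, "gen_ai.usage.output_tokens": 2000},
    {"app.tenant_id": "globex", "app.feature": "search",
     "gen_ai.request.model": "gpt-4o",
     "gen_ai.usage.input_tokens": 1000, "gen_ai.usage.output_tokens": 1000},
]


def span_cost(attrs: dict) -> float:
    """Estimate the USD cost of one LLM span from its token counts."""
    input_price, output_price = PRICE_PER_1K[attrs["gen_ai.request.model"]]
    return (
        attrs["gen_ai.usage.input_tokens"] / 1000 * input_price
        + attrs["gen_ai.usage.output_tokens"] / 1000 * output_price
    )


def cost_by(span_attributes, *keys):
    """Sum estimated cost grouped by the given attribute keys."""
    totals = defaultdict(float)
    for attrs in span_attributes:
        totals[tuple(attrs[key] for key in keys)] += span_cost(attrs)
    return {group: round(total, 6) for group, total in totals.items()}


per_tenant = cost_by(spans, "app.tenant_id")
per_tenant_feature = cost_by(spans, "app.tenant_id", "app.feature")
print(per_tenant)
print(per_tenant_feature)
```

Grouping by a different set of keys (user tier, model, feature) needs no new instrumentation as long as the attributes were recorded on the spans in the first place.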

from dataclasses import dataclass
from typing import Optional

from opentelemetry import metrics, trace

@dataclass
class CostConfig:
    """Per-model pricing (USD per 1K tokens)."""
    input_cost_per_1k: float
    output_cost_per_1k: float

# Illustrative prices; check each provider's current price list
PRICING = {
    "gpt-4o": CostConfig(0.0025, 0.01),
    "gpt-4o-mini": CostConfig(0.00015, 0.0006),
    "claude-sonnet-4-20250514": CostConfig(0.003, 0.015),
    "claude-haiku-4-20250414": CostConfig(0.0008, 0.004),
}

class TokenTracker:
    """Track token usage and costs across the application."""

    def __init__(self):
        self.tracer = trace.get_tracer("llm-app.cost-tracker")
        # Create the metric instruments once, not on every call
        meter = metrics.get_meter("llm-app.cost-meter")
        self.token_counter = meter.create_counter(
            "gen_ai.tokens.total",
            description="Total tokens consumed",
        )
        self.cost_counter = meter.create_counter(
            "gen_ai.cost.usd",
            description="Estimated cost in USD",
        )

    def record_usage(
        self,
        model: str,
        input_tokens: int,
        output_tokens: int,
        tenant_id: Optional[str] = None,
        feature: Optional[str] = None,
    ):
        """Record token usage on the current span with cost attribution."""
        span = trace.get_current_span()
        # Unknown models fall back to a deliberately high default price
        pricing = PRICING.get(model, CostConfig(0.01, 0.03))

        input_cost = (input_tokens / 1000) * pricing.input_cost_per_1k
        output_cost = (output_tokens / 1000) * pricing.output_cost_per_1k
        total_cost = input_cost + output_cost

        span.set_attribute("gen_ai.usage.input_tokens", input_tokens)
        span.set_attribute("gen_ai.usage.output_tokens", output_tokens)
        span.set_attribute("gen_ai.usage.cost_usd", round(total_cost, 6))

        if tenant_id:
            span.set_attribute("app.tenant_id", tenant_id)
        if feature:
            span.set_attribute("app.feature", feature)

        # Also emit as a metric for real-time dashboards
        self.token_counter.add(input_tokens + output_tokens, {
            "model": model, "token_type": "total",
            "tenant_id": tenant_id or "unknown",
        })
        self.cost_counter.add(total_cost, {
            "model": model,
            "tenant_id": tenant_id or "unknown",
        })

TokenTracker records token counts and estimated costs both as span attributes (for per-request analysis) and as OTel metrics (for real-time dashboards and alerting). Custom attributes for tenant and feature enable cost allocation across business dimensions.

5. Auto-Instrumentation with OpenLLMetry

Manual instrumentation provides maximum control but requires modifying every LLM call site. For faster adoption, auto-instrumentation libraries can patch LLM client libraries at import time to automatically generate spans. OpenLLMetry (by Traceloop) is the most mature auto-instrumentation package for LLM applications. It supports OpenAI, Anthropic, Cohere, LangChain, LlamaIndex, ChromaDB, Pinecone, and many other libraries.

import os

# Prompt and completion content is captured by default. Setting this
# environment variable to "false" before init() keeps it out of spans.
os.environ["TRACELOOP_TRACE_CONTENT"] = "false"

import openai
from opentelemetry.exporter.otlp.proto.grpc.trace_exporter import OTLPSpanExporter
from traceloop.sdk import Traceloop

# Auto-instrument all supported LLM libraries with one call.
# Parameter names can change between traceloop-sdk releases; confirm
# them against the version you have installed.
Traceloop.init(
    app_name="my-llm-app",
    # Export to any OTel-compatible backend
    exporter=OTLPSpanExporter(endpoint="http://otel-collector:4317"),
    # Add token usage details to the emitted metrics
    should_enrich_metrics=True,
)

# Now all OpenAI, Anthropic, LangChain calls are automatically traced
client = openai.OpenAI()

# This call is automatically instrumented with GenAI semantic conventions
response = client.chat.completions.create(
    model="gpt-4o-mini",
    messages=[{"role": "user", "content": "Explain observability in one sentence."}]
)
# A span is automatically created with model, tokens, and latency

Traceloop.init patches the supported client libraries so that later calls emit spans without code changes. Whether prompt and completion content is included in traces is controlled separately, by the TRACELOOP_TRACE_CONTENT environment variable; the should_enrich_metrics flag only affects how much detail is attached to metrics.

Auto-instrumentation is ideal for getting started quickly, but it captures everything indiscriminately. In production, you typically want a hybrid approach: auto-instrumentation for baseline coverage, plus manual spans for business-critical paths where you need custom attributes (tenant ID, feature flags, A/B test variants). The manual @workflow and @task decorators from Traceloop can annotate specific functions with semantic meaning.

Recording full prompt and completion content in traces creates significant privacy and compliance risks. User messages may contain personal information, health data, or other sensitive content subject to GDPR, HIPAA, or similar regulations. Always configure content redaction in production. Most auto-instrumentation libraries support content filtering; use it. Store full prompt/completion logs only in systems with appropriate access controls and retention policies. The privacy and compliance topics in Chapter 32 apply directly here.
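
One way to picture content redaction is as a filter applied to span attributes before they leave the process. The sketch below drops content-bearing attributes and replaces a user identifier with a salted hash. The attribute prefixes, the app.user_id key, and the redact_attributes helper are assumptions for illustration; check which attribute names your instrumentation library actually emits, and prefer its built-in content filtering or a collector-side processor where available.

```python
import hashlib

# Assumed attribute names; adjust to what your instrumentation emits.
CONTENT_PREFIXES = ("gen_ai.prompt", "gen_ai.completion")
PSEUDONYMIZE_KEYS = {"app.user_id"}


def redact_attributes(attributes: dict, salt: str) -> dict:
    """Return a copy of span attributes that is safer to export."""
    cleaned = {}
    for key, value in attributes.items():
        if key.startswith(CONTENT_PREFIXES):
            # Drop prompt and completion text entirely.
            continue
        if key in PSEUDONYMIZE_KEYS:
            # Keep requests groupable per user without exporting the raw ID.
            digest = hashlib.sha256((salt + str(value)).encode("utf-8")).hexdigest()
            cleaned[key] = digest[:16]
            continue
        cleaned[key] = value
    return cleaned


raw = {
    "gen_ai.request.model": "gpt-4o-mini",
    "gen_ai.usage.input_tokens": 42,
    "gen_ai.prompt.0.content": "My SSN is 000-00-0000, please help.",
    "gen_ai.completion.0.content": "I can't help with that.",
    "app.user_id": "user-8841",
}

safe = redact_attributes(raw, salt="example-salt")
print(sorted(safe))
```

Note that hashing an identifier is pseudonymization, not anonymization, so the hashed value may still count as personal data under some regulations. Operational attributes such as model name and token counts pass through untouched, which keeps dashboards and cost attribution working after redaction.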

6. Building OTel Dashboards for LLM Operations

Raw traces are useful for debugging individual requests, but operational excellence requires aggregated dashboards that show system health at a glance. The combination of OTel metrics and span-derived analytics enables dashboards that answer the questions every LLM operations team needs answered: What is the current P50/P95/P99 latency? How many tokens are we consuming per hour? What is our error rate by model and provider? Which features are the most expensive?

Grafana is the most common dashboard tool for OTel data. With Grafana Tempo for traces and Prometheus (or Mimir) for metrics, you can build unified dashboards that correlate latency spikes with token usage anomalies. The following example shows how to define custom OTel metrics that power these dashboards.

import time

import openai
from opentelemetry import metrics
from opentelemetry.sdk.metrics import MeterProvider
from opentelemetry.sdk.metrics.export import PeriodicExportingMetricReader
from opentelemetry.exporter.otlp.proto.grpc.metric_exporter import OTLPMetricExporter

# Set up metrics export
metric_reader = PeriodicExportingMetricReader(
    OTLPMetricExporter(endpoint="http://otel-collector:4317"),
    export_interval_millis=10000,  # Export every 10 seconds
)
meter_provider = MeterProvider(metric_readers=[metric_reader])
metrics.set_meter_provider(meter_provider)

meter = metrics.get_meter("llm-app.operations")

# Define operational metrics
llm_latency = meter.create_histogram(
    "gen_ai.request.duration",
    unit="s",
    description="LLM request duration in seconds",
)
llm_tokens = meter.create_counter(
    "gen_ai.tokens.consumed",
    description="Total tokens consumed by model and type",
)
llm_errors = meter.create_counter(
    "gen_ai.errors",
    description="LLM API errors by type and model",
)
active_requests = meter.create_up_down_counter(
    "gen_ai.requests.active",
    description="Currently in-flight LLM requests",
)

# Usage in application code
client = openai.AsyncOpenAI()  # one shared client for all calls

async def monitored_llm_call(model, messages, **kwargs):
    """LLM call with full metrics instrumentation."""
    labels = {"model": model, "provider": "openai"}
    active_requests.add(1, labels)
    start = time.monotonic()

    try:
        response = await client.chat.completions.create(
            model=model, messages=messages, **kwargs
        )
        duration = time.monotonic() - start

        llm_latency.record(duration, labels)
        llm_tokens.add(response.usage.prompt_tokens,
                       {**labels, "token_type": "input"})
        llm_tokens.add(response.usage.completion_tokens,
                       {**labels, "token_type": "output"})
        return response
    except Exception as e:
        llm_errors.add(1, {**labels, "error_type": type(e).__name__})
        raise
    finally:
        # Always decrement, whether the call succeeded or failed
        active_requests.add(-1, labels)

A well-designed LLM operations dashboard typically includes four panels: (1) a latency panel showing P50, P95, and P99 latency by model, with alerting thresholds; (2) a token consumption panel showing input and output tokens per hour, broken down by model and feature; (3) an error rate panel showing errors by type (rate limit, timeout, server error) with trend lines; and (4) a cost panel showing estimated spend per hour and projected monthly cost. The drift monitoring from Section 30.2 adds a fifth dimension: quality metrics derived from automated evaluation scores.
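
The arithmetic behind the latency and cost panels is simple enough to sketch directly. The example below computes P50, P95, and P99 from a list of latency samples and projects a monthly cost from an hourly spend figure. The sample values, the percentile helper, and the 30-day month are assumptions for illustration; a dashboard backend typically estimates percentiles from histogram buckets, so its numbers will be approximations of what is computed here.

```python
import statistics

# Hypothetical request latencies in seconds, with one slow outlier
latencies_s = [0.8, 0.9, 1.0, 1.1, 1.2, 1.3, 1.5, 1.8, 2.4, 6.0]


def percentile(samples, p: int) -> float:
    """Return the p-th percentile (1 to 99) with linear interpolation."""
    # quantiles(n=100) yields 99 cut points; index p - 1 is the p-th percentile.
    cut_points = statistics.quantiles(samples, n=100, method="inclusive")
    return cut_points[p - 1]


p50 = percentile(latencies_s, 50)
p95 = percentile(latencies_s, 95)
p99 = percentile(latencies_s, 99)
print(f"P50={p50:.2f}s  P95={p95:.2f}s  P99={p99:.2f}s")

# Project monthly spend from the current hourly rate (assumed 30-day month).
hourly_cost_usd = 1.25
projected_monthly_usd = hourly_cost_usd * 24 * 30
print(f"Projected monthly cost: ${projected_monthly_usd:.2f}")
```

The gap between P50 and P99 in this sample shows why the latency panel needs all three lines: a single slow request barely moves the median but dominates the tail.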

- OpenTelemetry provides the standardized observability backbone for LLM applications, with GenAI Semantic Conventions defining a common vocabulary for LLM telemetry.

- Auto-instrumentation libraries such as OpenLLMetry (by Traceloop) add tracing to LLM calls with minimal code changes, capturing token counts, model IDs, and latency automatically.

- Trace propagation through agent chains connects multi-step LLM workflows into a single trace, making it possible to debug complex agent behaviors end to end.

- Token tracking and cost attribution use OTel span attributes to allocate LLM costs to specific features, teams, or customers.

- Custom OTel dashboards for LLM operations should track P50/P95/P99 latency, token consumption, estimated cost, and error rates by model and provider.

Exercises

Set up OpenTelemetry tracing for a simple LLM application that makes a single chat completion call. Export traces to the console using ConsoleSpanExporter. Verify that the span includes the model name, token counts, and latency.

Answer Sketch

Initialize a TracerProvider with a SimpleSpanProcessor(ConsoleSpanExporter()). Create a span with tracer.start_as_current_span("gen_ai.chat"), set attributes for model and tokens after the API call, and verify the output includes all expected fields. The console output will show the span as a JSON object with the attributes you set.

Instrument a RAG pipeline with nested spans for embedding, vector search, and generation. Use a trace visualization tool (Jaeger or the console exporter) to verify that the spans form a proper parent-child hierarchy.

Answer Sketch

Create a parent span rag.pipeline, then use tracer.start_as_current_span() for each sub-step inside the parent's with block. OTel automatically links child spans to the active parent via context propagation. In Jaeger, you should see a waterfall view with the pipeline span at the top and sub-steps nested beneath it.

Build a multi-tenant cost attribution system using OTel metrics. Create counters for token usage and cost, labeled by tenant ID and feature name. Set up a Grafana dashboard (or equivalent) that shows per-tenant cost breakdown and alerts when any tenant exceeds their monthly budget.

Answer Sketch

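Use the TokenTracker pattern from Code Fragment 30.5.5. Add tenant and feature labels to all metric emissions. In Grafana, create a panel with PromQL: sum by (tenant_id)(increase(gen_ai_cost_usd_total[1h])) * 720 to project monthly costs from the last hour of spend (720 hours in a 30-day month). Note that rate() would return a per-second value, so increase() is the function that matches an hourly multiplier. Set alert rules on the projected cost exceeding per-tenant thresholds.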

Design a content redaction strategy for OTel traces in a healthcare chatbot application subject to HIPAA. Specify which span attributes should be captured, which should be redacted, and how to handle trace storage and retention.

Answer Sketch

Capture: model name, token counts, latency, error types, feature name. Redact: all prompt content, completion content, and user identifiers. Use an OTel Collector processor to strip sensitive attributes before export. Store traces in a HIPAA-compliant backend with encryption at rest and access logging. Set retention to the minimum required for operational debugging (7 to 30 days). Never include PHI in span attributes or events.

What Comes Next

In the next chapter, Chapter 31: Production Engineering & Operations, we move from observability to the deployment, scaling, and operational patterns that bring LLM applications to production. The OTel instrumentation you learned here becomes the foundation for monitoring those production systems.

Lab: End-to-End MLOps Pipeline with MLflow

Objective

Set up a complete experiment tracking workflow using MLflow. You will first build a manual logging harness (the "right tool" baseline for understanding what gets tracked), then use MLflow's autologging and model registry features. By the end, you will have multiple tracked runs that you can compare in the MLflow UI.

What You'll Practice

- Setting up MLflow tracking with a local backend

- Logging parameters, metrics, and artifacts to experiment runs

- Comparing runs across hyperparameter configurations

- Registering and versioning model artifacts in the MLflow Model Registry

Setup

Install MLflow and a lightweight model library for the experiment.

pip install mlflow scikit-learn pandas

Steps

Step 1: Manual experiment logging (from scratch)

Before relying on MLflow, build a simple JSON-based logging harness to understand what experiment tracking actually records. This makes the value of a dedicated tracking server concrete.

import json
import time
import uuid
from datetime import datetime
from pathlib import Path

class ManualTracker:
    """A minimal experiment tracker using JSON files."""

    def __init__(self, experiment_dir="manual_experiments"):
        self.dir = Path(experiment_dir)
        self.dir.mkdir(exist_ok=True)

    def log_run(self, params, metrics, artifacts=None):
        # A timestamp alone collides when two runs are logged in the same
        # second, so add a short random suffix
        run_id = f"run_{int(time.time())}_{uuid.uuid4().hex[:8]}"
        record = {
            "run_id": run_id,
            "timestamp": datetime.now().isoformat(),
            "params": params,
            "metrics": metrics,
            "artifacts": artifacts or [],
        }
        path = self.dir / f"{run_id}.json"
        path.write_text(json.dumps(record, indent=2))
        print(f"Logged run {run_id}: accuracy={metrics.get('accuracy', 'N/A')}")
        return run_id

    def compare_runs(self):
        runs = []
        for f in sorted(self.dir.glob("run_*.json")):
            runs.append(json.loads(f.read_text()))

        print(f"\n{'Run ID':<26} {'Accuracy':<10} {'Params'}")
        print("-" * 60)
        for r in runs:
            acc = r["metrics"].get("accuracy")
            # Apply the float format only to real numbers; a missing
            # metric prints as N/A instead of raising
            acc_text = f"{acc:.4f}" if acc is not None else "N/A"
            print(f"{r['run_id']:<26} {acc_text:<10} {r['params']}")
        return runs

# Quick test
tracker = ManualTracker()
tracker.log_run(
    params={"model": "logistic_regression", "C": 1.0},
    metrics={"accuracy": 0.85, "f1": 0.83},
)
tracker.log_run(
    params={"model": "logistic_regression", "C": 0.1},
    metrics={"accuracy": 0.82, "f1": 0.80},
)
tracker.compare_runs()

Step 2: Set up MLflow and log experiments

Now switch to MLflow for proper experiment tracking with a UI, artifact storage, and run comparison.

import mlflow
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, f1_score

# Configure MLflow (local file-based tracking)
mlflow.set_tracking_uri("file:./mlruns")
mlflow.set_experiment("iris-classification")

# Load data
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42
)

# Run experiments with different hyperparameters
for C_value in [0.01, 0.1, 1.0, 10.0]:
    with mlflow.start_run(run_name=f"logreg_C={C_value}"):
        # Log parameters
        mlflow.log_param("model_type", "LogisticRegression")
        mlflow.log_param("C", C_value)
        mlflow.log_param("solver", "lbfgs")

        # Train
        model = LogisticRegression(C=C_value, solver="lbfgs", max_iter=200)
        model.fit(X_train, y_train)

        # Evaluate
        preds = model.predict(X_test)
        acc = accuracy_score(y_test, preds)
        f1 = f1_score(y_test, preds, average="weighted")

        # Log metrics
        mlflow.log_metric("accuracy", acc)
        mlflow.log_metric("f1_weighted", f1)

        # Log the model as an artifact
        mlflow.sklearn.log_model(model, "model")

        print(f"C={C_value:>5}: accuracy={acc:.4f}, f1={f1:.4f}")

Step 3: Compare runs and identify the best model

Use the MLflow search API to query runs programmatically and find the best-performing configuration.

import pandas as pd

# Query all runs from the experiment
experiment = mlflow.get_experiment_by_name("iris-classification")
runs_df = mlflow.search_runs(
    experiment_ids=[experiment.experiment_id],
    order_by=["metrics.accuracy DESC"],
)

# Display comparison table
cols = ["run_id", "params.C", "metrics.accuracy", "metrics.f1_weighted"]
available = [c for c in cols if c in runs_df.columns]
print(runs_df[available].to_string(index=False))

# Identify best run
best_run = runs_df.iloc[0]
print(f"\nBest run: {best_run['run_id']}")
print(f" C = {best_run['params.C']}")
print(f" Accuracy = {best_run['metrics.accuracy']:.4f}")
print(f" F1 = {best_run['metrics.f1_weighted']:.4f}")

Step 4: Register the best model

Promote the best model to the MLflow Model Registry, assigning it a version and stage label for deployment tracking.

from mlflow.tracking import MlflowClient

# Register the best model
best_run_id = best_run["run_id"]
model_uri = f"runs:/{best_run_id}/model"
result = mlflow.register_model(model_uri, "iris-classifier")
print(f"Registered model: {result.name}, version: {result.version}")

# Transition to staging. Recent MLflow releases deprecate stages in favor
# of model version aliases, so this call may emit a deprecation warning.
client = MlflowClient()
client.transition_model_version_stage(
    name="iris-classifier",
    version=result.version,
    stage="Staging",
)
print(f"Model version {result.version} transitioned to Staging")

# Load and verify the registered model
loaded_model = mlflow.sklearn.load_model(
    "models:/iris-classifier/Staging"
)
verify_preds = loaded_model.predict(X_test)
verify_acc = accuracy_score(y_test, verify_preds)
print(f"Verified accuracy from registry: {verify_acc:.4f}")
print("\nTo view the UI, run: mlflow ui --port 5000")

Extensions

- Add MLflow autologging (mlflow.sklearn.autolog()) and compare the captured metrics with your manual logging.

- Log a confusion matrix plot as an artifact using mlflow.log_figure() and view it in the MLflow UI.

- Set up a model promotion workflow: automatically transition a model from Staging to Production only if its accuracy exceeds a threshold.
